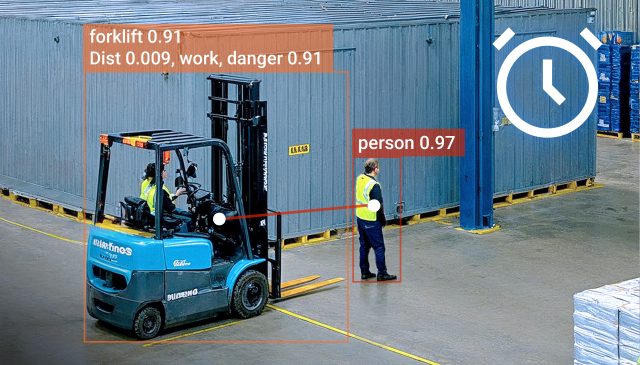

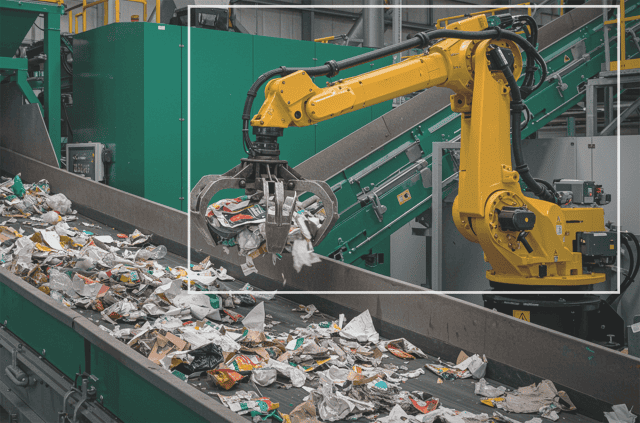

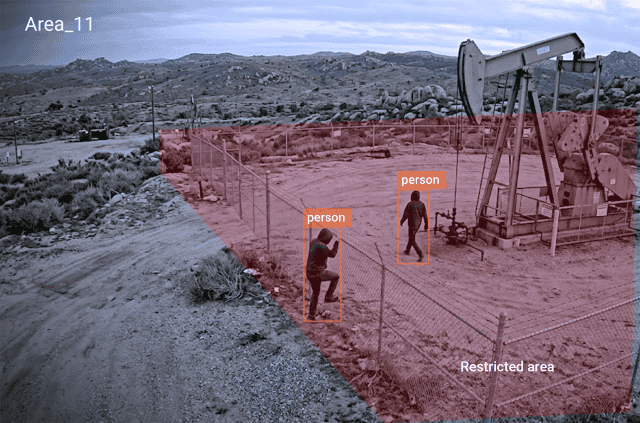

Many industrial teams today can picture their first computer vision use case intuitively. A crossing point with too many close calls. PPE gaps on a busy line. Defects can slip through at the end of a shift. A restricted area that only gets checked when someone happens to be nearby. The harder part comes after that.

The key to success is knowing how to scale computer vision systems in a way that keeps creating compounding value across safety, operations, quality, and compliance.

Answering that question sits front and center for many deployments. Many often gain momentum quickly. Others may appear to stall after an early win. The deciding factor is whether the organization can turn detection into action, action into learning, and learning into the next use case. This is the shift we see from vision to foresight.

The teams that do this well build computer vision as an operating capability. One use case proves a response loop. That loop expands across more cameras, workflows, and sites. The strongest programmes layer further, additional use cases on the same platform, building ever-increasing value over time instead.

The real prize is using visual signals to improve how work runs every day, whether that means a more consistent approach to a safer workplace, smart PPE monitoring, or stronger decision-making about what safety leaders want to know in practice.

This was the theme of the first session in our In Plain Sight webinar series, a masterclass built for operations, safety, and industrial leaders who want practical guidance on what works in real deployments. Each session is structured around one key insight, a misconception that can trip teams up, and one clear takeaway.

You can catch up on the on-demand session here or on your platform of choice, be it Spotify, Apple Music, or YouTube.

Scaling AI Vision starts with the operating model, not the pilot

It can be tempting to think that scaling starts later, once an initial deployment has proved its worth. In reality, it should start at the outset. Even a single use case at a single site means accounting for multiple cameras, varied angles, different lighting, and changing shift patterns. What is needed is a response process that works consistently enough for people to trust it. The next step often follows more quickly: adding another zone, line, and location.

That is why a first use case should be assessed against whether it creates operational visibility that the business can use. Are alerts routed to the right people? Do supervisors know what to do next? Is there an owner for calibration, tuning, and drift? Is the organization learning something useful about risk, quality, or throughput over time?

A first deployment could be described as a proof of concept. In practice, it is more useful to think of it as the first draft of a new operating model.

Key takeaway:

The first use case sets the pattern up front and lays the groundwork for how you later scale the computer vision technology.

Scaling computer vision: new AI Vision use cases, new workflow

The second use case can be where teams discover that early success doesn’t necessarily mean a copy-and-paste approach can be applied.

On paper, adding a second use case after the first can look simple. Add another model, point it at another problem, and keep going. In practice, a seamless ‘rinse and repeat’ approach is unrealistic. Each new use case brings a different workflow, owner, and definition of success. PPE may sit with safety, while SOP compliance will matter most to operations. Defect detection may be led by quality teams. Whilst the same cameras can support all three, the urgency, thresholds, escalation paths, and reporting logic are not the same.

This is where planning to layer additional use cases becomes powerful and paves the way for successful scaling. A site that starts with near-miss detection can later build on the same foundation with PPE detection, operator readiness detection, or material flow route compliance. The aim is to widen the return from using infrastructure that is already in place.

A dangerous trap can be assuming that success makes the next step automatic. However, each new use case needs to be planned for and designed around the business response it will trigger.

Key takeaway:

Scaling works best when each use case is treated as a new operational decision loop, and lessons learned from each deployment are applied to the next.

From detection to decisions when scaling computer vision

Many organizations still underestimate how much of the work begins after the model proves it can see something.

Detection is just one layer. Useful deployment means secure access to video streams, reliable handling of live feeds, zone configuration, and threshold tuning. It needs to encompass schedules, remote updates, system health monitoring, and workflows that survive real-world change. Operational conditions aren’t static. Layouts shift. Cameras move. Lighting changes.

This is why platform choices matter. Teams that want to scale computer vision across sites cannot rely on ad-hoc, site-by-site fixes or multiple local workarounds. They need central standards, remote maintainability, and enough local flexibility to reflect what is happening on the ground. That is where broader thinking about computer vision in manufacturing, for example, starts to become more relevant than the first isolated use case.

Some teams may think that detection is deployment. We believe there’s an essential distinction to be made here. Detection shows what is possible. Deployment proves that it can be used, maintained, governed, and trusted.

Key takeaway:

To be ready to successfully scale, computer vision needs to be comprehensively integrated into live operations.

Scaling AI Vision without letting ownership blur

The first deployment goes live. People see value. Interest grows. Then momentum may slow. It’s a pattern we see on some deployments. That often happens for a simple reason: no one is fully clear on who owns what, and what happens next.

Who owns the programme and each site rollout? Who decides thresholds and responds to urgent alerts? Who reviews trend data and signs off on the next use case? If those answers are vague, the technology may still perform while the programme itself starts to drift.

There can be an assumption that once value is proven, expansion happens naturally and organically. In reality, scale depends on whether the organization has moved beyond enthusiasm and created a workable governance model.

That can be relatively ‘lightweight’ and most definitely does not mean bureaucracy. It means clear decision rights, visible ownership, and a light but regular rhythm for review. Without that, the second and third use cases expose any organizational weakness long before they expose technical weakness.

Key takeaway:

The biggest threat after an early win is unclear ownership and planning, and not in the technology itself.

Avoiding alert fatigue when scaling computer vision

The fastest way to weaken trust in a good system is to make everything feel urgent.

Some deployments learn this late in the day. More detections sound like progress, but unmanaged signal volume can create the opposite effect. If every alert interrupts work, people can start to tune out. If every use case feeds the same channel, important signals can be buried in noise. ‘Alert fatigue’ can weaken trust in the deployment.

This is where response tiers matter. Vehicle near-miss risk may need immediate escalation in some cases, but not every moment of proximity should be treated as a crisis. PPE non-compliance often calls for coaching, trend review, and targeted follow-up, rather than constant interruption. Different use cases need different confidence thresholds, response windows, and workflows.

A programme matures when it stops treating every signal the same. Some events need intervention. Some need reporting. Some should push the business toward a process fix rather than just another alert. That is the difference between building a more useful and safer workplace, rather than simply generating more notifications. It is also why practical use cases such as smart PPE monitoring need to be designed around action, not just detection.

Key takeaway:

The goal with alerts is sharper action with less noise, and the insight to design a response around tailored action, not just detection.

Scaling AI Vision by winning trust on the floor

A rollout can be technically sound and still potentially struggle if the people closest to the work do not trust it.

Frontline teams need to understand what the system is for, how alerts will be used, and what benefit it brings. In many industrial environments, the cameras are already in place. What changes with successful computer vision deployments is the ability to interpret existing footage in a more useful and timely way.

When deployments go well, the language stays grounded. The focus is on hazard reduction, coaching, process improvement, and earlier intervention. The risk is that the technology is introduced too late to the people working with it, or else framed in a way that makes it seem like a monitoring exercise. Instead, it should be clearly explained as an improvement tool.

Trust grows when worker councils, unions, site leaders, facilities, and IT are involved early enough to shape the rollout, rather than react to it.

Key takeaway:

Teams scale faster when AI Vision is understood as a support system, and not misunderstood as being for monitoring or surveillance.

On scaling computer vision across sites

A multi-site rollout does not mean repeating the same setup everywhere.

Strong scale depends on balance. Some things need to be standardized centrally: platform choice, governance, security, reporting logic, and success metrics, for example. Other elements need local adjustment: zones, thresholds, escalation paths, lighting conditions, line layouts, and shift patterns.

When central leadership and local champions work well together, each new site becomes a controlled rollout with local tuning rather than a fresh project from zero. That is often the point where the conversation widens beyond risk reduction and toward flow, adherence, waste, and operational performance. Themes explored in Lean tend to become more relevant here, especially when programmes begin to extend into areas like material flow rate monitoring, for example.

A common mistake can be assuming organization-wide scale means identical configuration. In practice, it usually means disciplined consistency where it matters, and deliberate flexibility where it counts.

Key takeaway:

Standardize at the outset, adapt to operation-specific requirements, and remain flexible as further use cases are added.

Scaling AI Vision insights into foresight

Visibility matters, but on its own, it is not enough.

The real value comes when visual signals are used over time to show where risk is building, where drift is creeping in, and where intervention is likely to make the biggest difference. That might lead to retraining, layout changes, better maintenance timing, stronger engineering controls, or a different process decision altogether.

This is where computer vision begins to shape operational thinking. Transforming workplace safety with AI Vision is the direct result of this mindset. Safety teams can work with leading indicators rather than waiting for reported events. Quality teams can spot recurring defect patterns earlier. Operations teams can see where flow or readiness is slipping before it becomes a larger performance problem.

The business case becomes clearer at that point, too. Broader computer vision insight and the Viso Suite economic impact study point to the same underlying message: the strongest returns come when visual intelligence moves from isolated intervention to continuous improvement.

Key takeaway:

Foresight begins when AI vision informs what the business changes next, leading to continuous improvement.

Having layered computer vision use cases, scale with discipline

The organizations that scale well usually stay close to a few basics. They define response tiers before signal volume becomes a problem. They plan for both dimensions of scale: more use cases and more sites. They treat ownership, calibration, and governance as part of the operating model, not as loose ends to sort out later.

That is the practical route from vision to foresight: through one reliable loop, then another, and another. Each loop adds visibility, learning, and confidence. Each new use case strengthens the case for the next one. Each site rollout becomes easier because the organization now knows what good looks like.

Part Two of the In Plain Sight series is heading your way in April, picking up the conversation on union, industry, and wider stakeholder engagement with computer vision. For teams deciding what their next step might be, our Health and Safety Toolkit offers practical support with building the business case, planning how to layer use cases, and shaping rollout priorities. A practitioner’s guide to computer vision also provides a deeper practical grounding for those exploring or deploying computer vision.