Most major industrial organisations already have the hardware: CCTV, line cameras, mobile cameras, vehicle cameras. Many teams have experimented with vision models. Some even run computer vision for workplace safety in production.

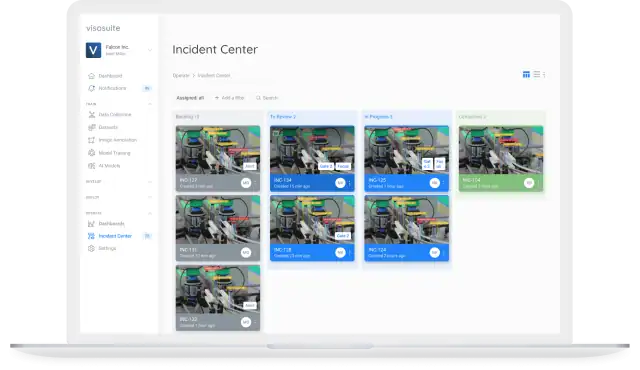

Yet conversations with operations, HSE, quality, engineering, and innovation leaders keep surfacing the same frustration: the organisation never fully managed to turn detections into decisions. That is the step that would let it scale further and reap the rewards: measured outcomes and ROI.

That execution gap is the reason we titled our January 2026 webinar “Vision to Decision.” In live deployments across manufacturing, logistics, food processing, and construction, the winners are not the teams with the most cameras or the fanciest demo. They are the teams that treat vision as part of the operating system: connected to daily routines, cross-functional workflows, and the decisions that protect people and performance.

Below are the first five themes we covered in the session, grounded in real implementations and patterns we’re seeing in the field. We completed the top ten with the remaining five themes in Part 2, our February 2026 webinar on the journey from decision to prevention.

Beyond visibility: proving causality with AI Vision

Most computer vision programmes begin with a breakthrough moment: teams suddenly see what they couldn’t see before. Waste, micro-stoppages, unsafe interactions, quality drift, queue build-ups, and congestion patterns become visible in real time.

That’s valuable, but visibility alone doesn’t help a business to make the right decisions. It becomes another dashboard, another feed, another set of alerts. The returns start when vision explains why outcomes are happening and points to actions that change the process.

A strong example comes from Cargill’s beef processing operations. Yield loss can be measured in millimetres, which makes it brutally difficult to manage with periodic audits or end-of-day reporting. Cargill developed a vision system (publicly referenced as “CarVe”) deployed directly on the processing line. Every carcass is measured in real time and waste is flagged as it happens.

The step change was what the organisation did beyond detection:

- Operators adjusting cutting technique in the moment, not at the end of a shift

- Supervisors comparing yield variation by line, shift, and operator to target coaching

- Leaders connecting losses to upstream drivers: training gaps, equipment calibration, raw material and supplier changes

Yield becomes something you can manage during production rather than a lagging metric.

We see the same causal approach in advanced manufacturing. BMW has talked publicly about using computer vision across inspection, paint checks, logistics movement, and digital twin initiatives. The critical detail is how they treat signals: defects at final inspection aren’t just pass/fail events.

Teams work backwards across stations and time to isolate the upstream cause, from tool wear and environmental shifts in a paint booth to process changes made hours earlier. This lets them stabilise the system before waste accumulates.

When vision makes causality visible, behaviour changes. Scrap falls because processes get tuned earlier. Rework drops because drift gets caught before it becomes a defect factory. Interventions shift upstream, where they are cheaper, faster, and far less disruptive.

AI Vision and the safer workplace: why do incidents still happen?

Safety is often the fastest entry point for AI Vision. PPE detection, exclusion-zone monitoring, vehicle-pedestrian separation, and restricted-area controls are well-established ways to create a safer workplace and part of a healthy safety culture.

But many safety and health teams notice an uncomfortable reality: even with better compliance visibility and a relatively safe work environment, serious incidents still occur. That isn’t an argument against safety vision; it is a sign that many deployments stop at the compliance layer. The path towards zero harm still runs through AI Vision, but through deployments that go beyond compliance.

In construction, Skanska and other major contractors have successfully deployed vision for PPE and restricted-area monitoring. Violations decline, visibility improves, and safety teams get coverage across complex sites. Yet when teams review severe incidents, they find a pattern: the big events rarely come from a single, isolated violation caught on camera.

They are usually the result of risk building over time:

- Near misses that aren’t connected into a broader trend

- Congested areas that normalise unsafe workarounds

- Fatigue during high-pressure phases

- Small shortcuts that gradually become “the way we do it here”

Binary “violation → safety alert” systems don’t capture that build-up. They are reactive by design.

Contrast that with the approach taken on large infrastructure programmes such as HS2 in the UK, where teams have analysed visual data by activity type, project phase, and site layout. That changes the game because risk becomes measurable as a pattern, not a moment.

If certain tunnelling phases plus certain layouts repeatedly create congestion, and congestion repeatedly produces shortcuts, you can redesign the work before the incident happens: sequencing, supervision levels, layout changes, traffic management.
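To make “risk as a pattern, not a moment” more concrete, here is a minimal Python sketch, assuming vision events have already been tagged with a project phase and a site zone. The `Event` fields, the `recurring_hotspots` helper, and its thresholds are hypothetical, chosen for illustration rather than taken from HS2 or any other programme mentioned here. Instead of alerting on each event, it counts events per (phase, zone) pair and flags only pairs that recur across several weeks.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """A single risk event emitted by a vision system (hypothetical schema)."""
    week: int    # week of the programme in which the event was detected
    phase: str   # project phase or activity type, e.g. "tunnelling"
    zone: str    # area of the site layout, e.g. "portal_A"
    kind: str    # e.g. "near_miss" or "exclusion_zone_entry"


def recurring_hotspots(events, min_weeks=3, min_events=5):
    """Return (phase, zone) pairs whose events recur across weeks, busiest first.

    A pair is flagged only if it has at least `min_events` events spread over
    at least `min_weeks` distinct weeks, so a one-off burst is not enough.
    """
    counts = defaultdict(int)
    weeks = defaultdict(set)
    for e in events:
        key = (e.phase, e.zone)
        counts[key] += 1
        weeks[key].add(e.week)
    flagged = [k for k in counts if counts[k] >= min_events and len(weeks[k]) >= min_weeks]
    return sorted(flagged, key=lambda k: counts[k], reverse=True)


# Synthetic example data, not from any real site.
events = (
    [Event(w, "tunnelling", "portal_A", "near_miss") for w in (1, 1, 2, 2, 3, 3)]
    + [Event(1, "excavation", "zone_B", "exclusion_zone_entry")] * 5
    + [Event(w, "tunnelling", "zone_C", "near_miss") for w in (1, 2, 3)]
)
print(recurring_hotspots(events))  # [('tunnelling', 'portal_A')]
```

In this sketch the burst of five events in a single week is not flagged, while the pair that recurs every week is: the difference between reacting to a moment and managing a pattern. Real thresholds would need tuning per site and per type of work.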

Similarly, Costain, through its involvement in the Safety Tech Accelerator, has discussed using technology not just for enforcement, but for leading indicators: signals that reliably precede harm. In one construction deployment for a client of ours, always-on vision replaced periodic inspections with continuous risk detection.

Over 12 weeks at a single high-risk drilling site, the system identified 154 safety incidents, including 49 near misses involving unsafe proximity to heavy machinery. Those insights drove changes in layout, supervision, and work planning, with estimated incident-related cost savings of $300,000 per site.

The operational lesson is simple: prevention becomes practical and blind spots become visible when safety teams stop chasing incidents and start managing risk trajectories. When you can see risk rising in a specific area, during a specific activity, with a specific contractor mix, you can put resources where they matter most, without adding bureaucracy or slowing the job down.

AI Vision deployed: defects are process-based, not frame-based

Automated inspection keeps getting better. Many teams now detect defects faster and more consistently than human inspection ever could. Yet scrap spikes, rework waves, line issues, and occasional recalls continue to surprise organisations.

That’s because most defects are not born in a single frame of video. They’re the end result of a process drifting out of control, gradually enough that people and basic sensors don’t spot it until it’s too late.

A good illustration comes from Coca-Cola bottling lines, where computer vision has been deployed for real-time checks: label alignment, cap issues, fill levels, contamination.

Once quality teams began correlating visual signals across stations and time windows, they started seeing the early drift: fill levels creeping, cap alignment varying, label tension shifting. Each change might remain within tolerance until the system crosses a threshold and defects cascade.
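The drift pattern described above can be sketched with an exponentially weighted moving average (EWMA) of each measurement’s deviation from target, a standard statistical process control technique. This is a minimal Python sketch, not Coca-Cola’s method: the `DriftMonitor` class, the 500 ml fill-level target, the ±5 ml tolerance, and the smoothing and alert parameters are all hypothetical. Each reading is checked against its tolerance individually, while the EWMA flags a sustained shift that no single reading reveals.

```python
from dataclasses import dataclass


@dataclass
class DriftMonitor:
    """EWMA drift monitor for one visual measurement stream (hypothetical)."""
    target: float                # nominal value, e.g. fill level in ml
    tolerance: float             # per-item limit (+/-) around the target
    alpha: float = 0.2           # EWMA smoothing factor (0 < alpha <= 1)
    drift_fraction: float = 0.4  # alert when smoothed deviation exceeds this share of tolerance
    ewma: float = 0.0            # running smoothed deviation from target

    def update(self, value: float) -> dict:
        deviation = value - self.target
        # Blend the newest deviation into the running average.
        self.ewma = self.alpha * deviation + (1 - self.alpha) * self.ewma
        return {
            "in_spec": abs(deviation) <= self.tolerance,
            "drifting": abs(self.ewma) > self.drift_fraction * self.tolerance,
        }


# Synthetic fill levels creeping up by 0.2 ml per reading, all within 500 +/- 5 ml.
readings = [500.0 + 0.2 * i for i in range(21)]
monitor = DriftMonitor(target=500.0, tolerance=5.0)
results = [monitor.update(r) for r in readings]

first_alert = next(i for i, r in enumerate(results) if r["drifting"])
print(all(r["in_spec"] for r in results), first_alert)  # True 14
```

With these synthetic numbers the monitor flags drift at reading 14, when the deviation is 2.8 ml and still comfortably inside tolerance, while a per-item pass/fail check stays silent for the whole run.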

When drift becomes visible early, teams can intervene:

- Adjusting settings before scrap accumulates

- Triggering maintenance before downtime hits

- Switching material batches when results vary

- Coaching operators with evidence, not guesswork

This pattern shows up in food and beverage too. Tyson Foods has discussed using vision in poultry plants for defect detection, foreign object checks, and yield improvement. The bigger gains come when plants trend signals across runs and link yield loss to equipment condition, shift transitions, or raw-material batches. With that context, interventions happen while the line is still stable.

In Lean terms, vision becomes a way to protect flow. It makes abnormal conditions visible sooner, and it makes root cause analysis faster because you’re not reconstructing the story after the fact.

AI Vision deployed: ROI comes from multi-site learning

Many organisations can generate a strong business case at one site. The larger returns arrive when learning spreads across the network.

The most effective multi-site programmes do three things well:

1. They standardise best practice with visual proof

A best practice written in an SOP is easy to misinterpret. A best practice shown in visual evidence is much harder to misunderstand.

2. They solve problems once

When one site discovers the drivers of a defect, a safety risk, or a yield loss, other sites shouldn’t have to rediscover it months later.

3. They close the loop with network-level learning

Seeing an issue is not the same as preventing it. The best programmes feed outcomes back into the system so models, thresholds, and playbooks improve across every site. That turns isolated wins into compounding performance gains.

In one global food and beverage example we’ve seen, plants across continents share visual evidence of what reduces packaging defects, from specific setup sequences to operator actions and environmental conditions. If a site in one country establishes a more stable setup, teams elsewhere can access the insight quickly. When a quality issue emerges in one region, other plants can check their own visual signals for the same early pattern before it becomes a full-scale problem.

This is where returns compound:

- Best practices spread in days instead of quarters

- Poorer-performing sites catch up faster because the benchmark is observable

- New sites ramp quicker because they start with proven patterns

- Standardisation improves without heavy central control

- Layering further use cases across sites becomes easier

You also see the same dynamic in supply chain and logistics. When a distribution centre optimises loading patterns or routing rules using vision insights, that learning can be tested and deployed across the network, multiplying value beyond the original site.

The difference is not just cultural, it’s architectural. Programmes that compound ROI usually treat vision as a platform capability: shared infrastructure, repeatable deployment patterns, and a central place where insights are captured and reused across use cases.

AI Vision deployed for a safer workplace in construction

Construction has often been excluded from operational intelligence conversations because sites are temporary, work is dynamic, and conditions change daily.

That assumption is breaking down quickly.

Leading construction firms are using vision for safety risk prediction, equipment movement monitoring, logistics coordination, and progress tracking. Even when every project is unique, patterns emerge when you analyse visual data across projects: which phases generate the most risk, which layouts create congestion, which activities reliably precede incidents, which sequences protect productivity.

VINCI Group is one real-world example of a major contractor applying this approach across projects. The key is “to surface leading indicators while work is live.”

That could mean identifying:

- Unsafe proximity between people and machines in blind spots

- Entry into exclusion zones

- Work under suspended loads

- PPE non-compliance before high-risk work begins

- Housekeeping hazards before they cause trips, delays, or downstream rework

Construction also benefits from something the sector has struggled with for decades: knowledge retention. When a site closes, a huge amount of experience walks away with a temporary workforce. Visual data allows a new site manager to start with evidence of what worked (and what didn’t) on similar projects, rather than relying on partial memory or informal handovers.

The result is a practical shift: safer sites, more predictable schedules, and measurable learning that carries forward.

The common thread in deploying AI Vision: execution is the bottleneck

Across these five themes, the message is consistent.

The AI models are rarely the limiting factor. Cameras are affordable. Edge and cloud infrastructure is mature. Integration is achievable.

The hard work is operational:

- Picking use cases that tie directly to business pain

- Designing workflows so insights change behaviour

- Scaling without multiplying complexity

- Preventing vision from becoming “just another dashboard”

- Building a learning loop where performance improves week after week

- Paving the way to layer additional use cases and unlock greater ROI

Part 2 of the webinar, held on 18 February, covered the remaining five themes:

- Robotics coordination

- Inventory truth

- Predictive maintenance context

- Making Lean visible with vision, and

- Building a decision system that turns visual signals into consistent outcomes

Our key takeaway?

Start small, prove value fast, and scale what works.

The most reliable path to ROI is choosing one or two high-frequency problems where vision adds clear, actionable signal, then using those early wins to build momentum. Once the process is working end to end, you can expand across shifts, lines, and sites so learning compounds and returns grow with every deployment.