In the years to come, Natural Language Processing (NLP) will be an essential technology for organizations across most industries. NLP is based on AI technology that understands text or voice data and responds with text or speech of its own. In this article, we break down the basics of NLP and how it will automate processes and change customer interaction as we know it.

NLP has been used for many years in customer service chatbots, and it is increasingly used in other areas such as marketing, finance, human resources, healthcare, and media. In particular, the release of ChatGPT, a language model developed by OpenAI, has led to a surge of interest in NLP. With millions of users and companies across industries starting to use ChatGPT to generate text with AI, its release has been a major milestone for the commercialization of NLP.

What is NLP?

Natural language processing (NLP) is a field of computer science and artificial intelligence concerned with the interactions between computers and human languages, in particular, how to program machines to understand natural language and extract information from it.

NLP has become an important part of many applications, such as search engines, text mining, machine translation, dialogue systems, and sentiment analysis.

NLP techniques are used in many different ways. For example, they can help computers understand the meaning of a text by extracting important concepts and the relations between them. NLP technology can also generate new text from a given input, such as summaries or translations. In addition, it can recognize patterns in data, such as names or locations.

What is Computational Linguistics?

Computational linguistics is a field at the intersection of computer science and linguistics that studies how computers can model, analyze, and understand human language. It therefore includes NLP research and covers areas such as sentence understanding, automatic question answering, syntactic parsing and tagging, dialogue agents, and text modeling.

Definition of Natural Language

Natural language is the way humans communicate with each other. Human language consists of words and phrases that we use in everyday conversation, and it can be used to talk about anything under the sun. In the context of NLP, natural language is the data that computers are trying to understand. This data can be in the form of text or speech, and it can be in any language.

What is Processing in NLP?

Processing is the act of taking this data and making sense of it. This can be done in several ways, but the goal is always the same: to extract meaning from the data and turn it into something that can be used by a computer. Later in this article, we will discuss different methods of NLP processing.

Why is NLP Important?

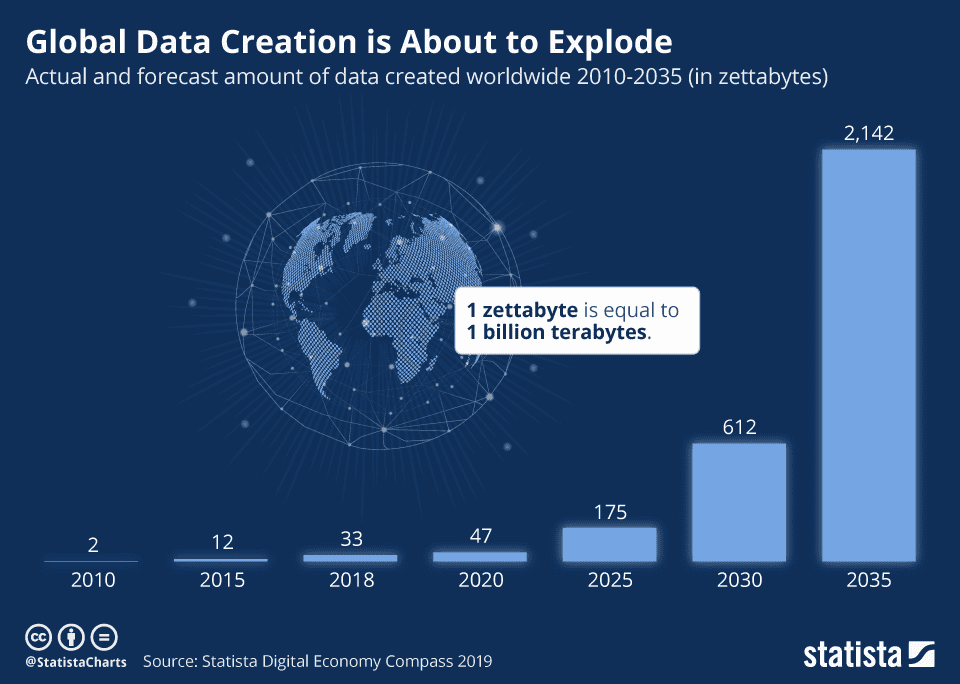

NLP is important because it helps computer systems understand human language and respond in a way that is natural to humans. In addition, business processes generate enormous amounts of unstructured or semi-structured data with complex text information that requires methods for efficient processing. A rapidly growing amount of natural language data is being created by humans, for example, through online media or text documents.

Businesses can no longer analyze and process this volume of information with manual operators alone. Because the amount of data is growing so rapidly, AI technology is needed to make sense of it. Therefore, NLP algorithms are used in a variety of applications, such as voice recognition, machine translation, and text analytics.

Does ChatGPT Use NLP?

Yes, ChatGPT is a language model developed by OpenAI and is primarily designed to perform NLP tasks, such as language translation, text summarization, text classification, and conversational dialogue. It is trained on large amounts of text data and uses deep learning techniques to understand and generate human-like responses to natural language input.

How Does NLP Work?

NLP is a way for computers to understand text or voice data by recognizing learned patterns. In general terms, NLP systems first break down language data into smaller pieces called tokens (tokenization) and analyze how those pieces relate to each other (parsing). These tokens can then be analyzed and categorized to better understand the content. For example, stemming and lemmatization algorithms are used to normalize text by reducing words to a base form, which prepares them for further processing in machine learning.
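To make tokenization and normalization more concrete, here is a minimal sketch in plain Python. The suffix list and the `naive_stem` helper are illustrative assumptions, not a real stemming algorithm; production stemmers such as the Porter stemmer apply many more rules, and lemmatizers rely on dictionaries.

```python
import re

def tokenize(text):
    """Split text into lowercase word tokens (letters, digits, apostrophes)."""
    return re.findall(r"[a-z0-9']+", text.lower())

# Toy suffix list for illustration only (an assumption, not a full rule set).
SUFFIXES = ("ing", "ed", "es", "s")

def naive_stem(token):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token

tokens = tokenize("The parser tokenized the documents and tagged the words.")
stems = [naive_stem(token) for token in tokens]

print(tokens)
print(stems)
```

Note that the stems are not always real words ("tagged" becomes "tagg"). That is typical of stemming, which only aims to map related word forms to the same string.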

Subsequently, the computer can put the pieces back together to create a complete sentence or conversation. This step includes language detection and part-of-speech tagging to describe the grammatical function of a word. The underlying NLP tasks are often used in higher-level capabilities, such as text categorization.
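Language detection and part-of-speech tagging can be illustrated with a deliberately simple sketch. The stop-word sets and the tag lexicon below are tiny, hand-written assumptions; real systems learn these mappings from large annotated corpora.

```python
# Tiny stop-word lists per language (illustrative assumption).
STOPWORDS = {
    "english": {"the", "and", "is", "of", "to", "in"},
    "german": {"der", "die", "und", "ist", "das", "nicht"},
    "spanish": {"el", "la", "y", "es", "de", "que"},
}

def detect_language(text):
    """Pick the language whose stop words overlap most with the text."""
    words = set(text.lower().split())
    scores = {lang: len(words & stops) for lang, stops in STOPWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"

# Hand-written word-to-tag lexicon (illustrative assumption).
POS_LEXICON = {
    "the": "DET", "a": "DET",
    "dog": "NOUN", "cat": "NOUN",
    "chases": "VERB", "sleeps": "VERB",
}

def tag(tokens):
    """Look up each token's part of speech; unknown words get 'UNK'."""
    return [(token, POS_LEXICON.get(token.lower(), "UNK")) for token in tokens]

print(detect_language("The cat is in the garden"))
print(tag("The dog chases a cat".split()))
```

A lookup table cannot resolve ambiguous words (for example, "run" as a noun or a verb), which is one reason practical taggers use statistical or neural models that take context into account.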

The Main NLP Tasks

The overall goal is to understand and generate human language using computational models. The field is commonly divided into three main areas:

- Natural Language Understanding (NLU): This is the process of extracting meaning from text or speech. NLU involves understanding the context of a text or conversation and extracting information from it.

- Natural Language Generation (NLG): This is the process of creating new text from a given input. NLG involves taking information from a source and turning it into readable or spoken text.

- Natural Language Processing Tools: These are the software tools that enable tasks for text processing, machine translation, and sentiment analysis.

Techniques and Methods of NLP

Parsing: A natural language parser is a computer program that recognizes which words belong together as “phrases” and which ones are the subject or object of a verb. The parser decomposes text based on grammar rules. If a piece of writing cannot be correctly interpreted, there may be grammatical errors.

Morphological parsing is the process of breaking down a word into its parts. This can be done to determine the word’s root, identify affixes, or understand the word’s function in a sentence.
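As a rough sketch of morphological parsing, the following splits a word into prefix, root, and suffix using small, hand-picked affix lists. Both lists and the `split_morphemes` helper are assumptions for illustration; real morphological analyzers use lexicons and language-specific rules.

```python
# Hand-picked affixes (illustrative assumption, far from complete).
PREFIXES = ("un", "re", "dis")
SUFFIXES = ("ness", "able", "ing", "ly", "ed", "s")

def split_morphemes(word):
    """Return (prefix, root, suffix); an empty string means no affix found."""
    word = word.lower()
    # Only strip an affix if at least three characters remain.
    prefix = next(
        (p for p in PREFIXES if word.startswith(p) and len(word) - len(p) >= 3),
        "",
    )
    rest = word[len(prefix):]
    suffix = next(
        (s for s in SUFFIXES if rest.endswith(s) and len(rest) - len(s) >= 3),
        "",
    )
    root = rest[: len(rest) - len(suffix)]
    return prefix, root, suffix

for word in ["unhappiness", "replayed", "cats"]:
    print(word, split_morphemes(word))
```

Pure string matching makes mistakes on words that merely look like they contain an affix, so the output should be read as a first approximation only.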

Syntax analysis is the process of identifying the structural relationships between the words in a sentence. This can be used to determine the parts of speech and their roles in the sentence, as well as the syntactic dependencies between them. A syntax tree is a tree structure that depicts the various syntactic categories of a sentence. It aids in the comprehension of a sentence’s syntax.
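The idea of a parser that builds a syntax tree can be sketched with a toy grammar. The grammar (S -> NP VP, NP -> DET NOUN, VP -> VERB NP | VERB) and the six-word lexicon are assumptions chosen to keep the example short; they cover only a handful of sentences.

```python
# Toy lexicon mapping words to syntactic categories (illustrative assumption).
LEXICON = {
    "the": "DET", "a": "DET",
    "dog": "NOUN", "ball": "NOUN",
    "chases": "VERB", "sleeps": "VERB",
}

def parse_np(tags, i):
    """NP -> DET NOUN. Return (tree, next_index) or None."""
    if i + 1 < len(tags) and tags[i][1] == "DET" and tags[i + 1][1] == "NOUN":
        return ("NP", ("DET", tags[i][0]), ("NOUN", tags[i + 1][0])), i + 2
    return None

def parse_vp(tags, i):
    """VP -> VERB NP | VERB. Return (tree, next_index) or None."""
    if i < len(tags) and tags[i][1] == "VERB":
        verb = ("VERB", tags[i][0])
        obj = parse_np(tags, i + 1)
        if obj:
            return ("VP", verb, obj[0]), obj[1]
        return ("VP", verb), i + 1
    return None

def parse_sentence(sentence):
    """S -> NP VP. Return a nested-tuple syntax tree, or None if no parse."""
    tags = [(w, LEXICON.get(w, "UNK")) for w in sentence.lower().split()]
    subject = parse_np(tags, 0)
    if not subject:
        return None
    predicate = parse_vp(tags, subject[1])
    if not predicate or predicate[1] != len(tags):
        return None
    return ("S", subject[0], predicate[0])

def show(tree, depth=0):
    """Print the tree with one level of indentation per depth."""
    label, *children = tree
    if len(children) == 1 and isinstance(children[0], str):
        print("  " * depth + f"{label}: {children[0]}")
    else:
        print("  " * depth + label)
        for child in children:
            show(child, depth + 1)

show(parse_sentence("The dog chases a ball"))
```

A sentence that does not fit the grammar, such as "dog the chases", returns `None`, which mirrors the point that text which cannot be parsed may contain grammatical errors.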

Semantic analysis, in the context of NLP, is the process of understanding the meaning of the text. This includes identifying the entities (people, places, things, etc.) and concepts mentioned in the text, as well as understanding the relationships between them. Semantic analysis is used in a variety of applications, such as question answering, chatbots, and text classification.

Pragmatic analysis is said to be one of the toughest parts of AI technology. It deals with the context of a sentence, which includes understanding the speaker’s intention, the relationship between the participants, and the cultural background of the text.

Discourse analysis is the study of how units of language are used to construct meaning above the level of the sentence. It can be used to examine texts at all levels, from individual sentences to whole books.

What are the 5 Steps of NLP?

There are five phases of NLP:

- Step 1: Lexical analysis is the process of breaking down a text into its parts, such as words and their definitions.

- Step 2: Syntactic analysis (parsing) is the process of checking the grammar of a text and determining how its words relate to one another.

- Step 3: Semantic analysis is the process of understanding the meaning of a text.

- Step 4: Discourse integration is the ability to understand how different texts fit together.

- Step 5: Pragmatic analysis is the process of determining how a text should be interpreted in a particular context.

The Evolution of NLP and Future Directions

Origin and History

The history of NLP began in the 1950s, with the development of early machine translation systems. But it wasn’t until the past few decades and the introduction of machine learning methods that the field took off.

Since then, the field has seen a great deal of progress, playing an increasingly important role in many different areas of computing.

Evolution of Human Language Processing

NLP technology has come a long way since its inception. Initially used for translating languages, it has evolved to include other tasks such as sentiment analysis, text classification, and speech recognition.

Today, NLP is used in a variety of applications, including voice recognition and synthesis, automatic translation, information retrieval, and text mining.

In mid-November 2022, OpenAI released ChatGPT, an AI chatbot that has since become a global phenomenon, with more than 30 million users and around five million visits a day (in February 2023). It has been used to write poetry, build apps, and conduct makeshift therapy sessions, and has been embraced by business leaders, news publishers, and marketing firms, among others.

While some have complained that ChatGPT gives biased or incorrect answers, and school districts have banned it, its success has also set off a feeding frenzy of investors seeking to cash in on the AI boom. It has made OpenAI one of the fastest-growing and most valuable companies in Silicon Valley.

NLP to AGI Pipeline

Despite its limitations and controversies, ChatGPT’s success has made OpenAI’s goal of creating “artificial general intelligence” more achievable and has put the company in a rare position of power in the tech industry. This has led Google to release its own AI chatbot called Bard to compete with the success of the Microsoft-backed ChatGPT.

The technology behind Bard will also be integrated into Google’s search engine to allow for complex queries to be easily answered. By doing so, Google is integrating its latest AI technologies into its search engine to translate complex information into easy-to-digest formats. The announcement comes as Microsoft prepares to launch more products using the technology behind ChatGPT.

Google’s latest NLP technology is called LaMDA, which stands for “Language Model for Dialogue Applications.” It is an advanced model developed by Google that is designed to help computers better understand and respond to human conversation. LaMDA is trained on a wide range of topics, which enables it to hold more engaging and informative conversations with people.

Outlook and Potential of NLP Technology

In the future, NLP is expected to become even more sophisticated, with the ability to understand complex human emotions and intentions with greater accuracy. With the rapid growth of data generated by humans, NLP will become increasingly important for organizations to make sense of this data and extract valuable insights. For example, processes can be automated using software to understand customer queries and provide accurate responses. Similarly, it can be used to automatically generate reports from unstructured data sources such as social media posts or customer reviews.

As tools and models continue to evolve, NLP applications are being developed across more and more industries. For businesses, this means that NLP can be used to improve service and product quality, make better data-driven decisions, and automate routine tasks.

For individuals, NLP can be used to better understand text data and improve communication with the potential of near-real-time voice translation. Using the NLP of Google Translate, Google Assistant, or Apple’s Siri, mobile phones can already be used as personal interpreters to translate foreign languages and help break through language barriers.

NLP chatbots such as ChatGPT offer various opportunities for businesses, including in sales and marketing, content marketing, customer support, and social media, and can assist with repetitive processes to allow employees to focus on other areas throughout the company.

However, chatbots still have some challenges to overcome, such as issues with creating proper sentence structure across different languages, understanding slang, or creating compelling content. Nevertheless, it seems that chatbots are here to stay for the foreseeable future and are changing the way businesses communicate and understand their customers.

Challenges of NLP

NLP technology has come a long way in recent years, thanks to advances in artificial intelligence (AI) and machine learning. However, natural human language contains numerous nuances, which makes it extremely hard for software to analyze text or perform speech recognition in a meaningful way.

Hence, there are still many challenges that need to be addressed before NLP can be said to truly understand human language. For example, systems often struggle with idiomatic expressions, sarcasm, metaphors, and other forms of non-literal language. They also tend to be biased against certain groups of people (such as women or minorities), due to the way they are trained on data sets that reflect these biases.

Advantages of NLP

- Improved communication: Through the use of large language models, NLP helps people communicate more effectively with computers and machines, making technology more accessible to everyone.

- Increased efficiency: AI-based text understanding can automate many tasks, such as language translation, information extraction, and sentiment analysis, which can save time and improve productivity.

- Better accuracy: By using algorithms to capture the nuances of language, NLP can provide more consistent results than manual review, especially when it comes to analyzing large amounts of data.

- Enhanced customer experience: Chatbots can provide personalized and timely customer support, improving the overall customer experience and satisfaction.

- Insightful analysis: NLP can help organizations gain insights from unstructured data, such as customer feedback, social media posts, and online reviews, which can help them make data-driven decisions.

- Cost-effective: By automating routine tasks, the use of AI for language understanding can reduce the need for manual labor, saving organizations time and money.

Statistical NLP

Statistical NLP is the application of statistics to language problems. It uses mathematical models to account for the variability in language data with a statistical approach, which allows one to understand and predict patterns in linguistic data.

Statistical methods have been central to NLP since the 1990s, and there is still much ongoing research into the various ways they can be used to improve and build models.
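A classic example of the statistical approach is the bigram language model, which estimates how likely a word is given the word before it. The sketch below uses a three-sentence corpus invented for illustration and plain relative frequencies without smoothing.

```python
from collections import Counter, defaultdict

# Tiny invented corpus (illustrative assumption).
corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the dog sat on the rug",
]

# Count how often each word follows each other word.
bigram_counts = defaultdict(Counter)
for sentence in corpus:
    words = ["<s>"] + sentence.split() + ["</s>"]
    for prev, curr in zip(words, words[1:]):
        bigram_counts[prev][curr] += 1

def probability(prev, curr):
    """Estimate P(curr | prev) as a relative frequency; 0.0 if prev is unseen."""
    total = sum(bigram_counts[prev].values())
    return bigram_counts[prev][curr] / total if total else 0.0

def most_likely_next(prev):
    """Return the most frequent follower of prev, or None if prev is unseen."""
    followers = bigram_counts[prev].most_common(1)
    return followers[0][0] if followers else None

print(probability("the", "cat"))
print(most_likely_next("the"))
```

In this corpus "the" occurs six times and is followed by "cat" twice, so the estimated probability is one third. Larger corpora and smoothing techniques make such estimates far more reliable.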

Shallow and Deep NLP

NLP is often divided into two categories: shallow and deep. Shallow NLP focuses on the surface structures of language, such as part-of-speech tagging (grammatical tagging) and named entity recognition (recognizing information units like names, times, dates, and currencies). These are significant tasks, but they don’t get beyond the surface of language understanding.

In contrast, deep NLP tasks try to model higher-level concepts, such as sentiment analysis and topic modeling. These tasks are much more difficult, but they are also much more valuable because they can give us insights into the underlying meaning of language.
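As an illustration of a shallow task, the following sketch recognizes dates, times, and currency amounts with regular expressions. The three patterns are simplifying assumptions (ISO-style dates, 24-hour times, dollar amounts); real named entity recognizers handle many more formats and usually rely on trained models.

```python
import re

# Simplified surface patterns (illustrative assumption).
PATTERNS = {
    "DATE": r"\b\d{4}-\d{2}-\d{2}\b",
    "TIME": r"\b\d{1,2}:\d{2}\b",
    "MONEY": r"\$\d+(?:\.\d{2})?",
}

def find_entities(text):
    """Return (label, value) pairs in the order they appear in the text."""
    found = []
    for label, pattern in PATTERNS.items():
        for match in re.finditer(pattern, text):
            found.append((match.start(), label, match.group()))
    return [(label, value) for _, label, value in sorted(found)]

text = "The invoice from 2023-02-14 at 09:30 totals $1200.50."
print(find_entities(text))
```

The patterns only look at the surface form of the text, which is exactly what makes this kind of processing "shallow": it finds the date but has no notion of what the invoice is about.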

NLP Models and Machine Learning

Machine learning is important for NLP because it allows computers to learn from data and continuously improve their ability to understand text or voice data. This is important because it allows NLP applications to become more accurate over time, and thus improve the overall performance and user experience.

Deep Learning for NLP

In recent years, a range of deep learning models has been developed for NLP to improve, accelerate, and automate text analytics functions and features. Machine learning, and especially deep learning methods, have proven to be very successful in solving NLP tasks. In deep learning, multiple layers of neural networks are used to learn representations of data at increasing levels of abstraction. This allows the network to learn complex patterns in the data to improve the performance of models.

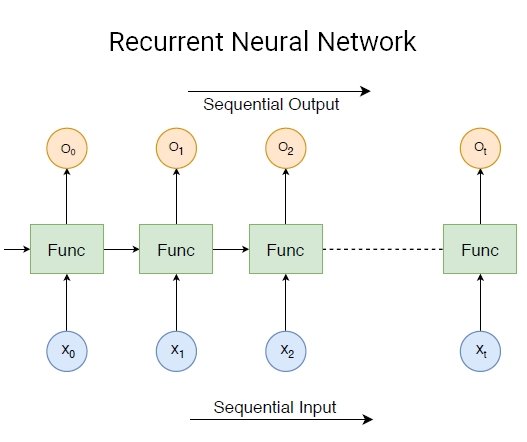

In human language, sentences are composed of words and phrases with a certain structure. Deep learning, especially Recurrent Neural Networks (RNNs), is ideal for handling and analyzing sequential data such as text, time series, financial data, speech, audio, and video, among others.
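To show why recurrent networks fit sequential data, here is a minimal sketch of a single recurrent step in plain Python. The weights, the three-word vocabulary, and the hidden size of two are arbitrary, untrained values chosen for illustration; a real model would learn its weights from data and typically use a deep learning framework.

```python
import math

def rnn_step(x, h_prev, W_xh, W_hh, b_h):
    """One simple RNN step: h_t = tanh(W_xh x + W_hh h_prev + b_h)."""
    h_new = []
    for j in range(len(b_h)):
        total = b_h[j]
        total += sum(W_xh[j][i] * x[i] for i in range(len(x)))
        total += sum(W_hh[j][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new

# Toy vocabulary and one-hot encoding (illustrative assumption).
vocab = {"nlp": 0, "is": 1, "fun": 2}

def one_hot(word):
    return [1.0 if i == vocab[word] else 0.0 for i in range(len(vocab))]

# Arbitrary, untrained weights: 2 hidden units, 3 input dimensions.
W_xh = [[0.5, -0.3, 0.8], [0.1, 0.4, -0.6]]
W_hh = [[0.2, -0.1], [0.3, 0.5]]
b_h = [0.0, 0.1]

h = [0.0, 0.0]  # initial hidden state
for word in ["nlp", "is", "fun"]:
    h = rnn_step(one_hot(word), h, W_xh, W_hh, b_h)

print(h)
```

The key point is that the hidden state `h` is fed back in at every step, so the representation of each word depends on the words that came before it.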

What are the Practical Applications of NLP?

There are five main steps required to apply deep learning to a task such as speech recognition, starting from unstructured data:

- Step 1 – Data Collection: Gathering data from various sources, both electronic and human. Data collection is the basis of ML training.

- Step 2 – Data Preprocessing: Preparing the data for further analysis, including cleaning up and standardizing it.

- Step 3 – Feature Extraction: Identifying the important features of the data that will be used for training and testing the AI model.

- Step 4 – Model Training and Testing: Building and testing the model to see how well it can learn and generalize from the data.

- Step 5 – Deployment: Putting the model into production so it can be used by users.
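The five steps above can be sketched end to end on a small scale. To keep the example self-contained, it swaps in a text classification task and a Naive Bayes classifier in place of speech recognition and deep learning; the dataset is invented, and all function names are hypothetical.

```python
import math
import re
from collections import Counter, defaultdict

# Step 1 - data collection: a tiny invented dataset.
TRAIN = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("claim your free prize", "spam"),
    ("meeting at noon tomorrow", "ham"),
    ("project report attached", "ham"),
    ("lunch tomorrow at noon", "ham"),
]
TEST = [("free prize money", "spam"), ("report for the meeting", "ham")]

# Step 2 - preprocessing: lowercase and keep alphabetic tokens only.
def preprocess(text):
    return re.findall(r"[a-z]+", text.lower())

# Step 3 - feature extraction: bag-of-words counts.
def extract_features(text):
    return Counter(preprocess(text))

# Step 4 - training and testing: Naive Bayes with add-one smoothing.
def train(examples):
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(extract_features(text))
    vocab = {word for counts in word_counts.values() for word in counts}
    return word_counts, label_counts, vocab

def predict(model, text):
    word_counts, label_counts, vocab = model
    total_docs = sum(label_counts.values())
    scores = {}
    for label in label_counts:
        log_prob = math.log(label_counts[label] / total_docs)
        denom = sum(word_counts[label].values()) + len(vocab)
        for word, n in extract_features(text).items():
            # Unseen words get a small smoothed probability.
            log_prob += n * math.log((word_counts[label][word] + 1) / denom)
        scores[label] = log_prob
    return max(scores, key=scores.get)

model = train(TRAIN)
accuracy = sum(predict(model, text) == label for text, label in TEST) / len(TEST)
print("test accuracy:", accuracy)

# Step 5 - deployment: expose the trained model behind one function.
def classify(text):
    return predict(model, text)

print(classify("claim your free money now"))
```

With so little data the accuracy figure says nothing about real-world performance; the sketch only shows how the five steps connect.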

NLP Applications and Use Cases

There are many different NLP use cases. Some of the most popular applications include:

- Automated customer service: NLP can be used to build chatbots that handle customer queries without human intervention. This can improve efficiency and reduce costs for businesses.

- Sentiment analysis: NLP can analyze text data to identify the emotions and opinions expressed in it. This can be used for market research, to track customer satisfaction, or to monitor social media conversations.

- Text classification: NLP can automatically assign text data to categories. Different forms of text analytics can be used for document management, spam detection, social media monitoring, content moderation, or intelligent recommendation systems.

- Voice recognition systems: Voice recognition, speech-to-text, and response systems are used in applications like Alexa, Siri, and Google Assistant, where users can speak to the app and it will recognize what they are saying.

- Machine translation: Machine translation is used in applications and services like Google Translate, DeepL, or Linguee, which can translate text from one language to another.

- Medical record analysis: Comprehending clinical texts such as electronic health records, physicians’ notes, medical records, discharge summaries, and test results.

- Human-Computer-Interaction: The combination of NLP with computer vision can yield very powerful results. This helps computers understand text or voice data while also allowing them to perform visual perception, to interpret and analyze images. When these two technologies are used together, the machine can not only understand what is being said but also see the world in a way that allows it to respond accordingly.
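To give one of these use cases a concrete shape, here is a minimal sketch of lexicon-based sentiment analysis. The word lists and the one-word negation rule are assumptions for illustration; production systems typically use much larger lexicons or trained models.

```python
import re

# Small hand-written sentiment lexicon (illustrative assumption).
POSITIVE = {"good", "great", "excellent", "love", "helpful", "fast"}
NEGATIVE = {"bad", "poor", "terrible", "hate", "slow", "broken"}
NEGATIONS = {"not", "never", "no"}

def sentiment_score(text):
    """Sum word polarities, flipping a word directly preceded by a negation."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, token in enumerate(tokens):
        polarity = (token in POSITIVE) - (token in NEGATIVE)
        if polarity and i > 0 and tokens[i - 1] in NEGATIONS:
            polarity = -polarity
        score += polarity
    return score

def sentiment_label(text):
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

for review in [
    "The support was great and fast",
    "The app is not good and the checkout is broken",
    "The package arrived on Tuesday",
]:
    print(review, "->", sentiment_label(review))
```

Word counting like this misses sarcasm and context, which is why sentiment analysis in practice is usually handled with machine learning models.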

AI Chatbots With NLP

AI chatbots are computer programs designed to simulate human conversation and perform various tasks through messaging or voice interactions. Popular AI chatbots include OpenAI’s ChatGPT, Google’s Bard, and Meta’s BlenderBot 3. They use artificial intelligence (AI) techniques, such as NLP, machine learning, and other technologies to understand and generate human-like responses to natural language input.

AI-based approaches to NLP enable chatbots to understand human language and generate appropriate responses. Chatbots use NLP to analyze the structure and meaning of language input, identify the intent of the user, and determine the appropriate response.

For example, if a user asks a chatbot for the weather forecast, the chatbot uses NLP to recognize the intent of the user’s question and retrieve the relevant information from a weather database or service. The chatbot then generates a response that provides the requested information in a human-like way.
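The weather example can be sketched as a small rule-based bot. The keyword sets, the `WEATHER_DB` dictionary (a hypothetical stand-in for a real weather service), and the response templates are all assumptions; modern chatbots learn intent recognition and response generation from data.

```python
import re

# Hypothetical stand-in for a weather database or service.
WEATHER_DB = {"berlin": "sunny, 21 C", "london": "rainy, 14 C"}

# Keywords that signal each intent (illustrative assumption).
INTENT_KEYWORDS = {
    "get_weather": {"weather", "forecast", "temperature", "rain"},
    "greeting": {"hello", "hi", "hey"},
}

def recognize_intent(tokens):
    """Return the first intent whose keywords appear in the message."""
    for intent, keywords in INTENT_KEYWORDS.items():
        if keywords & set(tokens):
            return intent
    return "unknown"

def extract_city(tokens):
    """Return the first token that is a known city, or None."""
    return next((token for token in tokens if token in WEATHER_DB), None)

def respond(message):
    tokens = re.findall(r"[a-z]+", message.lower())
    intent = recognize_intent(tokens)
    if intent == "get_weather":
        city = extract_city(tokens)
        if city is None:
            return "Which city would you like the forecast for?"
        return f"The forecast for {city.title()} is {WEATHER_DB[city]}."
    if intent == "greeting":
        return "Hello! Ask me about the weather."
    return "Sorry, I did not understand that."

print(respond("What's the weather forecast for Berlin?"))
print(respond("Will it rain tomorrow?"))
```

The three stages (recognize the intent, retrieve the information, generate the reply) are the same ones described above, just implemented with lookups instead of learned models.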

In addition to understanding and generating responses to text input, AI chatbots can also use NLP to analyze and generate responses to voice input. This requires additional technologies such as automatic speech recognition (ASR) and text-to-speech (TTS) systems, which work together to allow the chatbot to process and respond to spoken language.

How to Use NLP in Your Workflows

Although the concept of using NLP to automate the understanding of human language, whether speech or text, is fascinating in itself, the real value behind this technology comes from the ability to apply it to practical use cases. In the following, we will list some of the most popular computer programs and services for applied NLP data analysis.

The Best NLP Software Products

Some popular NLP software tools include:

- ChatGPT: ChatGPT is a language model developed by OpenAI and backed by Microsoft. It uses deep learning to generate human-like responses to natural language inputs provided through a simple chatbot user interface. You can test ChatGPT for free.

- IBM Watson: IBM Watson can be used for NLP through its Dialogue services. Developers can use them to create chatbots and virtual assistants that can understand natural language and respond in a way that is natural for humans. Additionally, Watson also offers Natural Language Classifier services, which can be used to detect the intent of a sentence or document and classify it accordingly.

- Google Cloud Natural Language API: The Google Cloud Natural Language API can be used to extract meaning from text, including understanding sentiment, extracting entities, and analyzing syntax. Using the NLP API, developers can use machine learning methods to automatically analyze text data and take specific actions.

- Amazon Comprehend: Amazon Comprehend is the NLP service of AWS that uses machine learning to uncover information in unstructured data and text. The machine learning algorithms can be used to develop AI applications that can automatically analyze text data, find insights and relationships, and return useful information.

- Azure Cognitive Services: The Azure Cognitive Services are a suite of tools provided by Microsoft for NLP development. Using these services, developers can create custom applications that can understand and respond to natural language input in multiple languages. Use cases include converting text to lifelike speech, real-time speech translation, or verification and person identification with audio analysis.

Develop NLP with Python

Natural Language Toolkit (NLTK) is a Python library that provides NLP functionality. It includes modules for tokenizing, stemming, and parsing text, as well as algorithms for machine learning, sentiment analysis, and more. NLTK is widely used in academia and industry, and it’s a great tool for getting started with an NLP assignment or project.
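As a first taste of NLTK, the short sketch below tokenizes a sentence and stems each token. It assumes NLTK is installed (for example with `pip install nltk`) and uses only `wordpunct_tokenize` and `PorterStemmer`, which do not require downloading additional corpora; the sample sentence is made up.

```python
from nltk.stem import PorterStemmer
from nltk.tokenize import wordpunct_tokenize

text = "NLTK splits text into tokens."

# Split on word characters and punctuation using a regular expression.
tokens = wordpunct_tokenize(text)

# Reduce each token to its stem with the Porter algorithm.
stemmer = PorterStemmer()
stems = [stemmer.stem(token) for token in tokens]

print(tokens)
print(stems)
```

Other NLTK components, such as the part-of-speech tagger, need extra data packages that are fetched with `nltk.download`.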

The Bottom Line

Natural Language Processing (NLP) is a domain of AI technology concerned with the interactions between computers and human (natural) language data. It involves both computational techniques and theories of linguistics to understand, generate, translate, analyze, and interpret natural language texts.

The field of NLP has made tremendous progress in recent years. Deep learning algorithms have been demonstrated to be very successful at addressing a wide range of NLP tasks. The advantages of NLP applications have led to numerous industry use cases in healthcare, finance, consulting, marketing, sales, and insurance.

Looking to the future, it is clear that the analysis of natural language will continue to play an important role in the development of artificial intelligence and machine learning applications. With the rapid growth of data generated by humans, it is becoming increasingly important to be able to automatically process and understand this data. NLP provides the computational tools and theoretical foundations needed to build systems that can do just that.

If you’re interested in learning more about other disruptive AI technologies, be sure to check out our articles about Computer Vision.