Facial recognition has been a hot topic for several decades. While there are different facial recognition libraries available, DeepFace has become widely popular and is used in numerous face recognition applications.

What is DeepFace?

DeepFace is a lightweight face recognition and facial attribute analysis library for Python. The open-source library wraps several leading-edge AI models for modern face recognition and automatically handles all procedures of the facial recognition pipeline in the background.

You can run DeepFace with just a few lines of code, and you don’t need in-depth knowledge of the processes behind it. You simply import the library and pass the image path as input; that’s all!

If you run face recognition with DeepFace, you get access to a set of features:

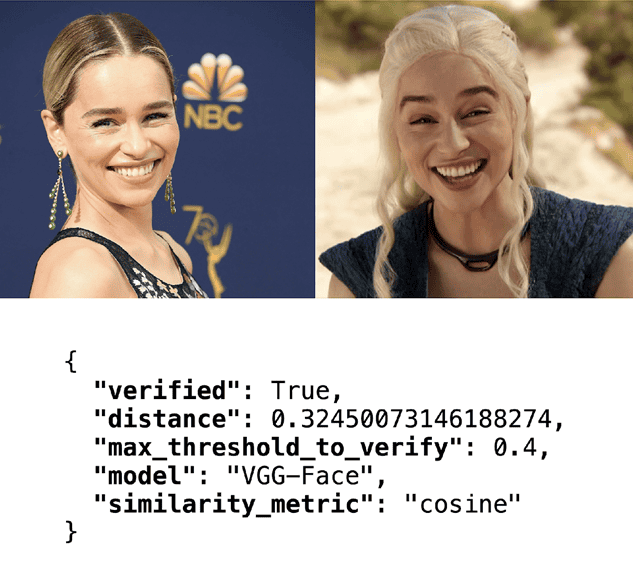

- Face Verification: The task of face verification refers to comparing one face with another to decide whether they match. For example, it can be used to confirm that a person’s face matches the photo in an ID document.

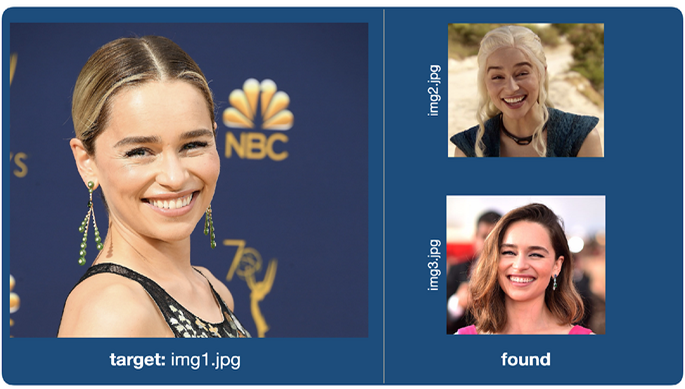

- Face Recognition: The task refers to finding a face in an image database. Performing face recognition requires running face verification many times.

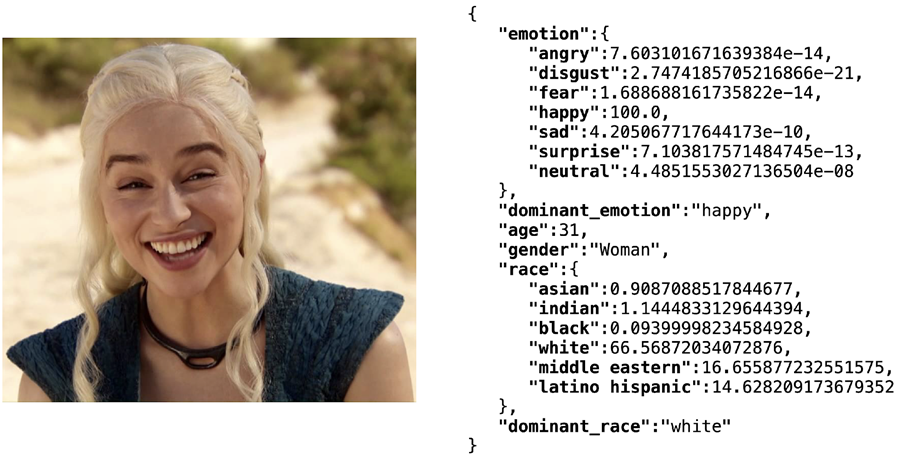

- Facial Attribute Analysis: The task of facial attribute analysis refers to describing the visual properties of face images. Accordingly, it is used to predict attributes such as age, gender, emotion, or race/ethnicity.

- Real-Time Face Analysis: This feature includes testing face recognition and facial attribute analysis with the real-time video feed of your webcam.

Next, I will explain how to perform those deep face recognition tasks with DeepFace.

How to Use DeepFace

DeepFace is an open-source project written in Python and licensed under the MIT License. Developers are permitted to use, modify, and distribute the library in both private and commercial contexts.

The deepface library is also published in the Python Package Index (PyPI), a repository of software for the Python programming language. Next, I will guide you through a short tutorial on how to use DeepFace.

1. Install the DeepFace package

The easiest and fastest way to install the DeepFace package is to run the following command, which installs the library itself and all prerequisites from PyPI.

```shell

#Repo: https://github.com/serengil/deepface

pip install deepface

```

2. Import the library

Then, you will be able to import the library and use its functionalities by using the following command.

```python

from deepface import DeepFace

```

How to Perform Facial Recognition and Analysis

Run Face Verification with Deep Learning on DeepFace

The following face verification example shows how simple it is to get running. We only pass an input image pair, and that’s all!

```python

verification = DeepFace.verify(img1_path = "img1.jpg", img2_path = "img2.jpg")

```

Even though the visual appearance of Emilia Clarke in her daily life versus her role as Daenerys Targaryen in Game of Thrones is very different, DeepFace can verify this image pair, and the DeepFace engine returns the key “verified”: True. This means that the individual in both images is recognized as the same person.
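In practice, you will usually read the “verified” key out of the returned dictionary. The helper below is a minimal sketch of that pattern. The names `is_same_person`, `fake_verify`, and the `verify` parameter are hypothetical and introduced here only for illustration, and the sketch assumes nothing about the result beyond the “verified” key mentioned above. Passing the verification function in as an argument lets you try the helper without installing DeepFace or having image files on disk.

```python
def is_same_person(img1_path, img2_path, verify=None):
    """Return True when the verification result marks the pair as a match.

    `verify` is a hypothetical injection point: any callable that accepts
    img1_path / img2_path and returns a dict containing a "verified" key.
    When omitted, DeepFace.verify is used (requires `pip install deepface`).
    """
    if verify is None:
        # Imported lazily so the helper can be exercised without DeepFace.
        from deepface import DeepFace
        verify = DeepFace.verify

    result = verify(img1_path=img1_path, img2_path=img2_path)
    return bool(result["verified"])


# Stand-in for DeepFace.verify, used only to demonstrate the helper.
# It "matches" two paths when they are identical; it does no face analysis.
def fake_verify(img1_path, img2_path):
    return {"verified": img1_path == img2_path}


match = is_same_person("img1.jpg", "img1.jpg", verify=fake_verify)
mismatch = is_same_person("img1.jpg", "img2.jpg", verify=fake_verify)
print(match, mismatch)
```

With real images, you would call `is_same_person("img1.jpg", "img2.jpg")` and let the helper fall back to `DeepFace.verify`.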

How to Apply Deep Learning Face Recognition with DeepFace

In everyday speech, we understand face recognition as the task of finding a face in a list of images. In the literature, however, the term often refers to deciding whether a face pair shows the same person or different persons, which is really face verification. Face recognition in the everyday sense is missing in most of the alternative libraries.

Having said that, DeepFace also covers face recognition in this true sense. To do so, you are expected to store your facial database images in a folder. Then, DeepFace will look for the identity of the passed image in your facial database folder.

```python

recognition = DeepFace.find(img_path = "img.jpg", db_path = "C:/facial_db")

```
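To make the idea of “running face verification many times” more concrete, here is a small sketch of a database search over face embeddings. It is not DeepFace’s actual implementation: the three-dimensional vectors, the file names, the cosine distance metric, and the threshold of 0.05 are all assumptions chosen to keep the example readable.

```python
import math


def cosine_distance(a, b):
    """1 - cosine similarity; 0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def find_matches(query, database, threshold):
    """Verify the query against every database entry, one pair at a time,
    and return the identities within the threshold, closest first."""
    matches = []
    for identity, embedding in database.items():
        distance = cosine_distance(query, embedding)
        if distance <= threshold:
            matches.append((identity, distance))
    return sorted(matches, key=lambda item: item[1])


# Toy 3-dimensional "embeddings"; real models produce much longer vectors.
facial_db = {
    "alice.jpg": [0.10, 0.20, 0.30],
    "bob.jpg": [0.90, 0.10, 0.10],
    "alice_2.jpg": [0.12, 0.18, 0.33],
}
query_embedding = [0.11, 0.21, 0.29]

results = find_matches(query_embedding, facial_db, threshold=0.05)
matched_identities = [identity for identity, _ in results]
print(matched_identities)
```

Each loop iteration is one verification, so the cost of a search grows with the number of images in the database folder.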

How to perform Facial Attribute Analysis with DeepFace

Moreover, DeepFace comes with a strong facial attribute analysis module for age, gender, emotion, and race/ethnicity prediction. While DeepFace’s facial recognition module wraps existing state-of-the-art models, its facial attribute analysis has its own models. Currently, the age prediction model achieves a mean absolute error of +/- 4.6 years, and the gender prediction model reaches an accuracy of 97%.
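If you are not familiar with the metric, the mean absolute error is the average of the absolute differences between the predicted and the true ages. The sketch below computes it for a handful of made-up values; the ages are purely illustrative and are not taken from any DeepFace evaluation.

```python
def mean_absolute_error(true_values, predicted_values):
    """Average of |true - predicted| over all samples."""
    if not true_values or len(true_values) != len(predicted_values):
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(abs(t - p) for t, p in zip(true_values, predicted_values))
    return total / len(true_values)


# Made-up ages for illustration only.
true_ages = [25, 31, 40, 58]
predicted_ages = [29, 30, 46, 51]

mae = mean_absolute_error(true_ages, predicted_ages)
print(mae)  # absolute errors are 4, 1, 6, 7 -> 18 / 4 = 4.5
```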

You can use the following command to execute the facial attribute analysis and test it out yourself:

```python

analysis = DeepFace.analyze(img_path = "img.jpg", actions = ["age", "gender", "emotion", "race"])

print(analysis)

```

According to the facial attribute analysis results, Emilia Clarke was recognized as age “31”, gender “woman”, and emotion “happy” based on this image.

Use Face Recognition and Attribute Analysis in Real-time Videos

Furthermore, you can test both facial recognition and facial attribute analysis modules in real-time. The stream function will access your webcam and run those modules. It’s fun, isn’t it?

```python

DeepFace.stream(db_path = "C:/facial_db")

```

The Most Popular Face Recognition Models

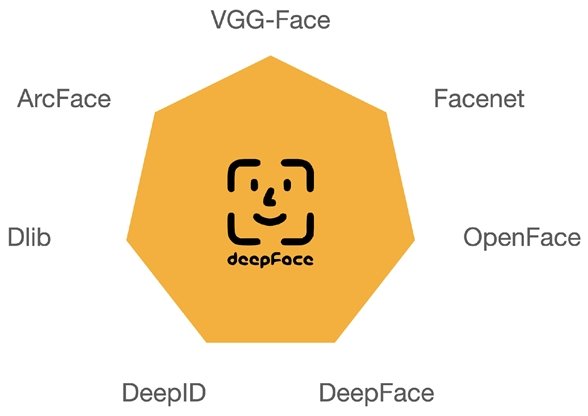

While most alternative facial recognition libraries serve a single AI model, the DeepFace library wraps many cutting-edge face recognition models. Hence, it is the easiest way to use the Facebook DeepFace algorithm and all the other top face recognition algorithms below.

The following deep learning face recognition algorithms can be used with the DeepFace library. Most of them are based on state-of-the-art Convolutional Neural Networks (CNN) and provide best-in-class results.

VGG-Face

VGG stands for Visual Geometry Group. A VGG neural network (VGGNet) is one of the most used image recognition model types based on deep convolutional neural networks. The VGG architecture became famous for achieving top results at the ImageNet challenge. The model was designed by the researchers at the University of Oxford.

While the VGG-Face has the same structure as the regular VGG model, it is tuned with facial images. The VGG face recognition model achieves a 97.78% accuracy on the popular Labeled Faces in the Wild (LFW) dataset.

How to use VGG-Face: The DeepFace library uses VGG-Face as the default model.

Google FaceNet

This model was developed by researchers at Google. FaceNet is considered to be a state-of-the-art model for face recognition with deep learning. FaceNet can be used for face recognition, verification, and clustering (face clustering groups photos of people with the same identity).

The main benefit of FaceNet is its high efficiency and performance. It is reported to achieve 99.63% accuracy on the LFW dataset and 95.12% on the YouTube Faces DB, while using only 128 bytes per face.

How to use FaceNet: Probably the easiest way to use Google FaceNet is with the DeepFace library: install it and set the model as an argument in the DeepFace functions (see the chapter below).

OpenFace

This face recognition model was built by researchers at Carnegie Mellon University. OpenFace is heavily inspired by the FaceNet project, but it is more lightweight, and its license type is more flexible. OpenFace achieves 93.80% accuracy on the LFW dataset.

How to use OpenFace: As with the models above, you can use the OpenFace AI model by using the DeepFace Library.

Facebook DeepFace

This face recognition model was developed by researchers at Facebook. The Facebook DeepFace algorithm was trained on a labeled dataset of four million faces belonging to over 4,000 individuals, which was the largest facial dataset at the time of release. The approach is based on a deep neural network with nine layers.

The Facebook model achieves an accuracy of 97.35% (+/- 0.25%) on the LFW dataset benchmark. The researchers report that this closes most of the gap to human-level performance (97.53%) on the same dataset. In other words, the Facebook DeepFace algorithm comes very close to human accuracy on this benchmark without exceeding it.

How to use Facebook DeepFace: An easy way to use the Facebook face recognition algorithm is by using the similarly named DeepFace library that contains the Facebook model (see the chapter below).

DeepID

The DeepID face verification algorithm performs face recognition based on deep learning. It was one of the first models to use convolutional neural networks and to achieve better-than-human performance on face recognition tasks. DeepID was introduced by researchers at the Chinese University of Hong Kong.

For example, DeepID2 achieved 99.15% on the Labeled Faces in the Wild (LFW) dataset.

How to use the DeepID model: DeepID is one of the external face recognition models wrapped in the DeepFace library.

Dlib

The Dlib face recognition model names itself “the world’s simplest facial recognition API for python”. The machine learning model is used to recognize and manipulate faces from Python or the command line. While the dlib library is originally written in C++, it has easy-to-use Python bindings.

Interestingly, the Dlib model was not designed by a research group. It was introduced by Davis E. King, the main developer of the Dlib image processing library.

Dlib’s face recognition tool maps an image of a human face to a 128-dimensional vector space, where images of identical people are near to each other, and the images of different people are far apart. Therefore, dlib performs face recognition by mapping faces to the 128d space and then checking if their Euclidean distance is small enough.

With a distance threshold of 0.6, the dlib model achieved an accuracy of 99.38% on the standard LFW face recognition benchmark, which places it among the best algorithms for face recognition.
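The decision rule described above can be sketched in a few lines of plain Python. The 128-dimensional vectors below are synthetic placeholders rather than real dlib embeddings, and the function names are hypothetical; only the Euclidean distance and the 0.6 threshold come from the description above.

```python
import math

DLIB_THRESHOLD = 0.6  # distance threshold quoted above for the dlib model


def euclidean_distance(a, b):
    """Straight-line distance between two embeddings of equal length."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def is_match(embedding_a, embedding_b, threshold=DLIB_THRESHOLD):
    """Treat two faces as the same person when they are close enough."""
    return euclidean_distance(embedding_a, embedding_b) < threshold


# Synthetic 128-d vectors, for illustration only.
anchor = [0.0] * 128
near = [0.05] * 128  # distance = 0.05 * sqrt(128), roughly 0.57
far = [0.10] * 128   # distance = 0.10 * sqrt(128), roughly 1.13

print(is_match(anchor, near), is_match(anchor, far))
```

Lowering the threshold makes the check stricter (fewer false matches, more missed matches), while raising it does the opposite.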

How to use Dlib for face recognition: The model is also wrapped in the DeepFace library and can be set as an argument in the DeepFace functions (more about that below).

ArcFace

This is the newest model in the model portfolio. Its joint designers are the researchers of Imperial College London and InsightFace. The ArcFace model achieves 99.40% accuracy on the LFW dataset.

How to Use the Face Recognition Models

As mentioned above, experiments show that human beings achieve a 97.53% score for facial recognition on the Labeled Faces in the Wild dataset. Interestingly, VGG-Face, FaceNet, Dlib, and ArcFace have already passed that score (better-than-human-performing AI algorithms). On the other hand, OpenFace, DeepFace, and DeepID show a very close score to human performance.
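As a quick sanity check, the comparison can be reproduced with the LFW accuracy figures quoted in this article. The snippet below only restates those numbers. DeepID is left out because the article quotes a score for DeepID2 rather than for the DeepID model itself.

```python
HUMAN_LFW_ACCURACY = 97.53  # human score on LFW, as quoted in this article

# LFW accuracy (%) per model, as quoted in the sections above.
lfw_accuracy = {
    "VGG-Face": 97.78,
    "Facenet": 99.63,
    "OpenFace": 93.80,
    "DeepFace": 97.35,
    "Dlib": 99.38,
    "ArcFace": 99.40,
}

# Models whose quoted score exceeds the human baseline.
above_human = sorted(
    name for name, accuracy in lfw_accuracy.items()
    if accuracy > HUMAN_LFW_ACCURACY
)

# Gap to the human baseline in percentage points (negative = below human).
gap_to_human = {
    name: round(accuracy - HUMAN_LFW_ACCURACY, 2)
    for name, accuracy in lfw_accuracy.items()
}

print(above_human)
print(gap_to_human["DeepFace"], gap_to_human["OpenFace"])
```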

To use one of these models, set it as an argument in the DeepFace functions:

```python

models = ["VGG-Face", "Facenet", "OpenFace", "DeepFace", "DeepID", "Dlib", "ArcFace"]

#face verification

verification = DeepFace.verify("img1.jpg", "img2.jpg", model_name = models[1])

#face recognition

recognition = DeepFace.find(img_path = "img.jpg", db_path = "C:/facial_db", model_name = models[1])

```
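Because the model name is just an argument, it is easy to try several models on the same image pair. The following sketch loops over a list of model names and collects the “verified” flag for each one. The helper `verify_with_models`, its `verify` parameter, and the stand-in `fake_verify` are hypothetical names used for illustration; the stand-in simulates one failing model so that the example runs without DeepFace or image files.

```python
def verify_with_models(img1_path, img2_path, model_names, verify=None):
    """Run verification once per model and map model name -> result.

    The value is True/False for a completed verification, or None when
    the call raised an exception (for example, a model that cannot load).
    """
    if verify is None:
        # Imported lazily so the helper can be exercised without DeepFace.
        from deepface import DeepFace
        verify = DeepFace.verify

    outcomes = {}
    for model_name in model_names:
        try:
            result = verify(img1_path, img2_path, model_name=model_name)
            outcomes[model_name] = bool(result["verified"])
        except Exception:
            outcomes[model_name] = None
    return outcomes


# Stand-in for DeepFace.verify; "Dlib" fails on purpose to show the None case.
def fake_verify(img1_path, img2_path, model_name):
    if model_name == "Dlib":
        raise RuntimeError("simulated failure")
    return {"verified": True}


outcomes = verify_with_models(
    "img1.jpg", "img2.jpg", ["VGG-Face", "Facenet", "Dlib"], verify=fake_verify
)
print(outcomes)
```

With real images, you would drop the `verify` argument and pass the `models` list from the example above.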

DeepFace has been expanding its model portfolio since its first commit. Its initial version wrapped just VGG-Face and Facenet. It now supports seven cutting-edge face recognition models. In the time to come, you will also be able to easily use the latest face recognition models with DeepFace, because the model name is an argument of its functions, and the interface always stays the same.

Most Popular Face Detectors

Face detection and alignment are very important stages for a facial recognition pipeline. Google stated that face alignment alone increases the face recognition accuracy score by 0.76%.

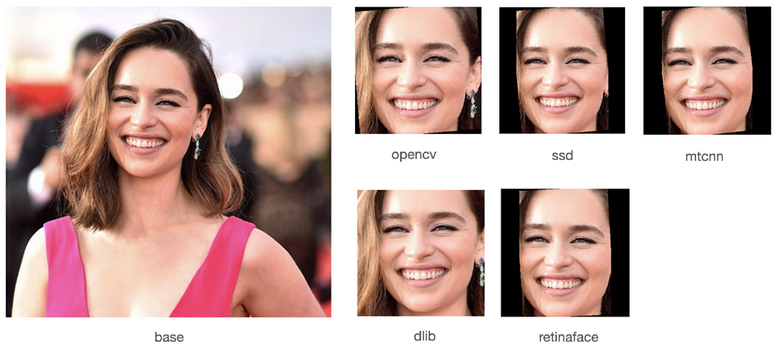

In general, DeepFace is an easy way to use the most popular state-of-the-art face detectors. Currently, multiple cutting-edge facial detectors are wrapped in DeepFace:

OpenCV

Compared to others, OpenCV is the most lightweight face detector. The popular image processing tool uses a Haar-Cascade algorithm that is not based on deep learning techniques. That’s why it is fast, but its performance is relatively low. For OpenCV to work properly, frontal images are required.

Moreover, its eye detection performance is average, which causes alignment issues. Note that the default detector in DeepFace is OpenCV.

Dlib

This detector uses a HOG (Histogram of Oriented Gradients) algorithm in the background. Hence, similarly to OpenCV, it is not based on deep learning. Still, it has relatively high detection and alignment scores.

SSD

SSD stands for Single-Shot Detector; it is a popular deep learning-based detector. The speed of SSD is comparable to that of OpenCV. However, SSD does not support facial landmarks and depends on OpenCV’s eye detection module to align. Even though its detection performance is high, the alignment score is only average.

MTCNN

This is a deep learning-based face detector, and it comes with facial landmarks. That is the reason why both detection and alignment scores are high for MTCNN. However, it is slower than OpenCV, SSD, and Dlib.

RetinaFace

RetinaFace is recognized as the state-of-the-art deep learning-based model for face detection. It performs strongly even on challenging images in the wild. However, it requires high computation power. That is why RetinaFace is the slowest face detector in comparison to the others.

How to Use Face Detectors

Similarly to the face recognition models, the detectors can also be set as an argument in the DeepFace functions:

```python

detectors = ["opencv", "ssd", "mtcnn", "dlib", "retinaface"]

#face verification

verification = DeepFace.verify("img1.jpg", "img2.jpg", detector_backend = detectors[0])

#face recognition

recognition = DeepFace.find(img_path = "img.jpg", db_path = "C:/facial_db", detector_backend = detectors[0])

```

Which Face Detector Should I Use?

If your application requires high confidence, then you should consider using RetinaFace or MTCNN. On the other hand, if high speed is more important for your project, then you should use OpenCV or SSD.
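This rule of thumb can be written down as a tiny helper. The sketch below only encodes the recommendation from this section. The function name `choose_detector`, the priority labels, and the optional `available` list are hypothetical and not part of the DeepFace interface.

```python
# Detector names as used for the detector_backend argument above.
DETECTORS_BY_PRIORITY = {
    "accuracy": ["retinaface", "mtcnn"],  # high confidence, slower
    "speed": ["opencv", "ssd"],           # fast, lower alignment quality
}


def choose_detector(priority, available=None):
    """Return the first recommended detector for the given priority.

    `available` optionally restricts the choice, e.g. to the backends
    that are installed on the current machine.
    """
    if priority not in DETECTORS_BY_PRIORITY:
        raise ValueError(f"unknown priority: {priority!r}")
    for name in DETECTORS_BY_PRIORITY[priority]:
        if available is None or name in available:
            return name
    raise LookupError(f"no available detector for priority {priority!r}")


print(choose_detector("accuracy"))
print(choose_detector("speed"))
print(choose_detector("accuracy", available=["opencv", "mtcnn"]))
```

The returned string can then be passed as `detector_backend` to the DeepFace functions.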

How to Perform Face Extraction Tasks with DeepFace

DeepFace has a custom face detection function in its interface, so you can also use the library and its wide face detector portfolio solely to perform face extraction. The example below shows how the face of the actor Emilia Clarke is detected and aligned. The software adds some padding while resizing the extracted image to fit the input size expected by the target face recognition model.

```python

detectors = ["opencv", "ssd", "mtcnn", "dlib", "retinaface"]

img = DeepFace.detectFace("img1.jpg", detector_backend = detectors[4])

```

Advantages of the DeepFace Library

You may ask yourself why you should use the DeepFace library over alternatives. In my view, these are the most important reasons why people use DeepFace to build facial recognition applications:

- It is Lightweight: As a lightweight library, it lets you use any functionality with a single line of code. You don’t need to acquire in-depth knowledge about the processes behind it.

- It’s Easy to install: Some of the popular facial recognition libraries require core C and C++ dependencies. That makes them hard to install and initialize. You might have some trouble when compiling. However, DeepFace is mainly based on TensorFlow and Keras. That makes it very easy to install.

- Multiple Models and Detectors: Currently, the DeepFace library integrates seven state-of-the-art face recognition models and five cutting-edge face detectors. The list of supported models and detectors has been expanding since its first commit and will continue to grow over the next few months.

- Open Source Face Recognition: DeepFace is licensed under the MIT License. This means that you are completely free to use it for both individual and commercial purposes. Besides, it is fully open-sourced. You can customize the library based on your requirements.

- Growing DeepFace Community: Moreover, the DeepFace library is highly adopted by the community. There are tens of contributors, thousands of stars on GitHub, and hundreds of thousands of installations on pip. If you run into an issue, you will likely find the solution in the discussion forums.

- Language-Independent Package: DeepFace is a language-independent package. The main functionalities of DeepFace are written in Python. It can be deployed to perform AI inference at the edge (on-device face recognition). However, it also serves an API (DeepFace API), allowing it to run facial recognition and facial attribute analysis from mobile or web clients.

- Planned Features of DeepFace: While the DeepFace library supports extensive functionalities already today, the community will further benefit from new and upcoming features, such as:

  - Covering new facial attribute models, such as beauty/attractiveness score prediction

  - Wrapping new facial recognition models, such as CosFace or SphereFace

  - Working on a Cloud API

What’s Next with DeepFace?

The main idea of DeepFace is to integrate the best image recognition tools for deep face analysis in one lightweight and flexible library. Because simplicity is so important, we also call it LightFace. Anyone can adopt DeepFace in production-grade tasks with a high level of confidence and use the most powerful open-source algorithms.

If you are looking for an enterprise-grade solution to deliver face recognition applications, you can use DeepFace with the Viso Suite infrastructure. Used by leading organizations worldwide, Viso Suite provides DeepFace fully integrated with everything you need to run and scale AI vision, such as zero-trust security and data privacy for AI vision.

We recommend you check out the DeepFace project on GitHub. Please, help out and support the project by starring ⭐️ its GitHub repo 🙏.
