YOLOX (You Only Look Once) is a high-performance object detection model belonging to the YOLO family. YOLOX brings with it an anchor-free design and decoupled head architecture to the YOLO family. These changes increased the model’s performance in object detection.

Object detection is a fundamental task in computer vision, and YOLOX plays a fair role in improving it.

Before going into YOLOX, it is important to take a look at the YOLO series, as YOLOX builds upon the previous YOLO models.

In 2015, researchers released the first YOLO model, which rapidly gained popularity for its object detection capabilities. Since its release, there have been continuous improvements and significant changes with the introduction of newer YOLO versions.

What is YOLO?

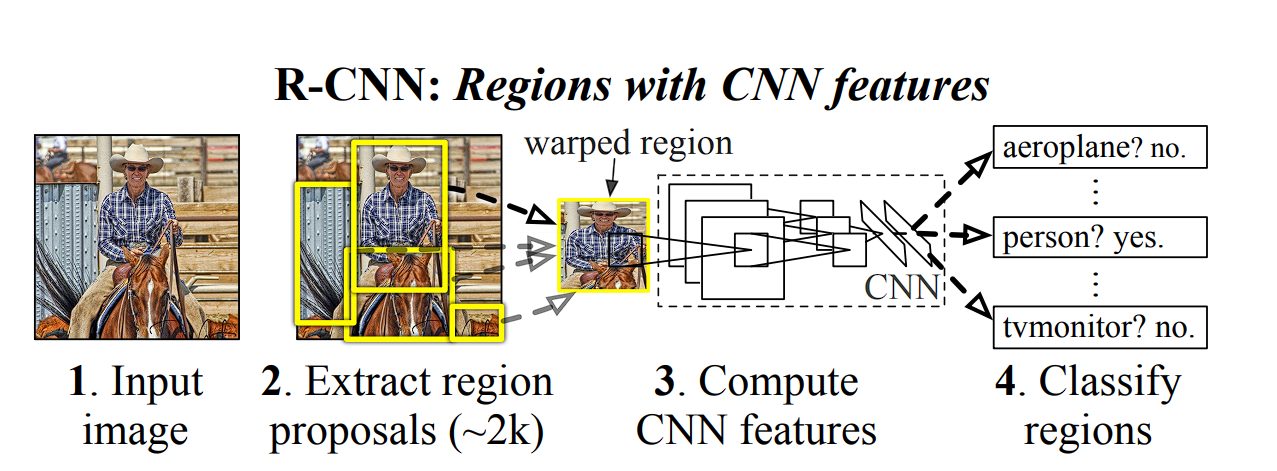

In 2015, YOLO became the first significant model capable of object detection with a single pass of the network. The previous approaches relied on Region-based Convolutional Neural Network (RCNN) and sliding window techniques.

Before YOLO, the following methods were used:

- Sliding Window Approach: The sliding window approach was one of the earliest techniques used for object detection. In this approach, a window of a fixed size moves across the image, at every step predicting whether the window contains the object of interest. This a straightforward method, but computationally expensive, as a high number of windows needs to be evaluated, especially for large images.

- Region Proposal Method (R-CNN and its variants): The Region-based Convolutional Neural Networks (R-CNN) and its successors, Fast R-CNN and Faster R-CNN, tried to reduce the computational cost of the sliding window approach by focusing on specific areas of the image that are likely to contain the object of interest. This was done by using a region proposal algorithm to generate potential bounding boxes (regions) in the image. Then, the Convolutional Neural Network (CNN) classified these regions into different object categories.

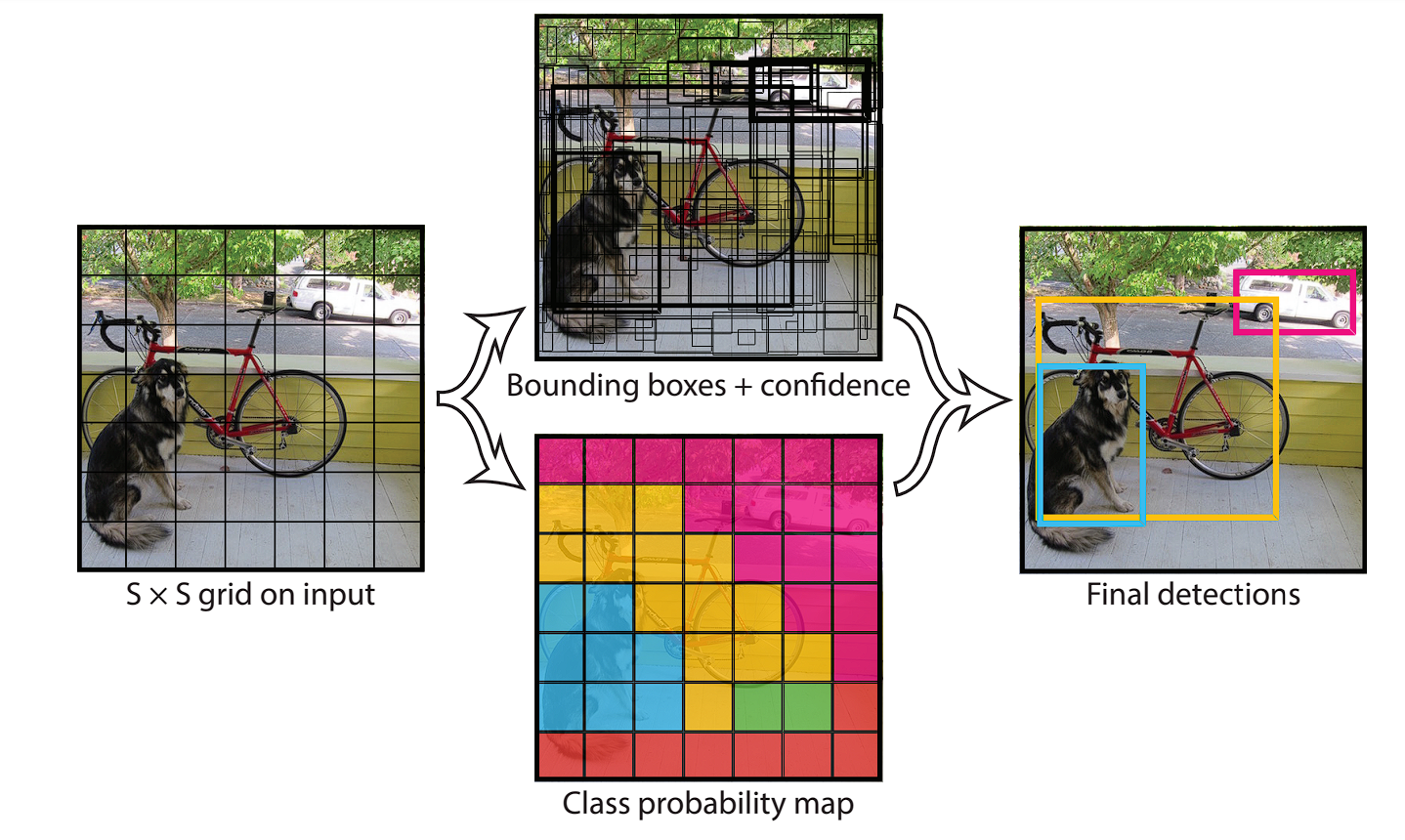

- Single Stage Method (YOLO): In the single-stage method, the detection process is simplified. This method directly predicts bounding boxes and class probabilities for objects in a single step. It does this by first extracting features using a CNN, then the image is divided into a grid of squares. For each grid cell, the model predicts a bounding box and class probabilities. This made YOLO extremely fast, and capable of real-time application.

History of YOLO

The YOLO series strives to balance speed and accuracy, delivering real-time performance without sacrificing detection quality. This is a difficult task, as an increase in speed results in lower accuracy.

For comparison, one of the best object detection models in 2015 (R-CNN Minus R) achieved a 53.5 mAP score with 6 FPS speed on the PASCAL VOC 2007 dataset. In comparison, YOLO achieved 45 FPS, along with an accuracy of 63.4 mAP.

| Real-Time Detectors | Train | mAP | FPS |

|---|---|---|---|

| 100 Hz DPM [31] | 2007 | 16.0 | 100 |

| 30 Hz DPM [31] | 2007 | 26.1 | 30 |

| Fast YOLO | 2007+2012 | 52.7 | 155 |

| YOLO | 2007+2012 | 63.4 | 45 |

| Less Than Real-Time | |||

| Fastest DPM [38] | 2007 | 30.4 | 15 |

| R-CNN Minus R [20] | 2007 | 53.5 | 6 |

| Fast R-CNN [14] | 2007+2012 | 70.0 | 0.5 |

| Faster R-CNN VGG-16 [28] | 2007+2012 | 73.2 | 7 |

| Faster R-CNN ZF [28] | 2007+2012 | 62.1 | 18 |

| YOLO VGG-16 | 2007+2012 | 66.4 | 21 |

YOLOX performance – source.

YOLO through its releases has been trying to optimize this competing objective, the reason why we have several YOLO models.

YOLOv4 and YOLOv5 introduced new network backbones, improved data augmentation techniques, and optimized training strategies. These developments led to significant gains in accuracy without drastically affecting the models’ real-time performance.

Here is a quick view of all the YOLO models along with the year of release.

2015

YOLOv1: The original YOLO model.

2016

YOLOv2/YOLO9000: Faster processing, batch normalization, anchor boxes, and multi-scale predictions.

2018

YOLOv3: Further redefined accuracy with Darknet-53 architecture.

2020

YOLOv4: Improved accuracy and speed over its predecessor with CSPDarknet53 as its backbone.

YOLOv5: Lightweight and efficient object detection model with improved performance and smaller model sizes.

2021

YOLOX: Achor-free detector

2022

YOLOv6: Faster and more efficient real-time object detection.

YOLOv7: Advanced model scaling and improved backbone design.

2023

YOLOv8: Redesigned architecture with dynamic anchor-free detection.

2024

YOLOv9: Transformer-based feature extraction and multi-scale detection.

YOLOv10: Quantization-aware training and hardware-friendly design for edge AI applications.

YOLO11: Hybrid CNN-transformer models.

What is YOLOX?

YOLOX, with its anchor-free design, drastically reduced the model complexity compared to previous YOLO versions.

How Does YOLOX Work?

The YOLO algorithm works by predicting three different features:

- Grid Division: YOLO divides the input image into a grid of cells.

- Bounding Box Prediction and Class Probabilities: For each grid cell, YOLO predicts multiple bounding boxes and their corresponding confidence scores.

- Final Prediction: The model, using the probabilities calculated in the previous steps, predicts what the object is.

YOLOX architecture is divided into three parts:

- Backbone: Extracts features from the input image.

- Neck: Aggregates multi-scale features from the backbone.

- Head: Uses extracted features to perform classification.

What is a Backbone?

Backbone in YOLOX is a pre-trained CNN that is trained on a massive dataset of images, to recognize low-level features and patterns. You can download a backbone and use it for your projects, without the need to train it again. YOLOX popularly uses the Darknet53 and Modified CSP v5 backbones.

| Type | Filters | Size | Output | |

|---|---|---|---|---|

| Convolutional | 32 | 3 × 3 | 256 × 256 | |

| Convolutional | 64 | 3 × 3 / 2 | 128 × 128 | |

| 1× | Convolutional | 32 | 1 × 1 | 128 × 128 |

| Convolutional | 64 | 3 × 3 | ||

| Residual | ||||

| Convolutional | 128 | 3 × 3 / 2 | 64 × 64 | |

| 2× | Convolutional | 64 | 1 × 1 | 64 × 64 |

| Convolutional | 128 | 3 × 3 | ||

| Residual | ||||

| Convolutional | 256 | 3 × 3 / 2 | 32 × 32 | |

| 8× | Convolutional | 128 | 1 × 1 | 32 × 32 |

| Convolutional | 256 | 3 × 3 | ||

| Residual | ||||

| Convolutional | 512 | 3 × 3 / 2 | 16 × 16 | |

| 8× | Convolutional | 256 | 1 × 1 | 16 × 16 |

| Convolutional | 512 | 3 × 3 | ||

| Residual | ||||

| Convolutional | 1024 | 3 × 3 / 2 | 8 × 8 | |

| 4× | Convolutional | 512 | 1 × 1 | 8 × 8 |

| Convolutional | 1024 | 3 × 3 | ||

| Residual | ||||

| Avgpool | Global | |||

| Connected | 1000 | |||

| Softmax | ||||

Darknet architecture – source.

What is a Neck?

The concept of a “Neck” wasn’t present in the initial versions of the YOLO series (until YOLOv4). The YOLO architecture traditionally consisted of a backbone for feature extraction and a head for detection (bounding box prediction and class probabilities).

The neck module combines feature maps extracted by the backbone network to improve detection performance, allowing the model to learn from a wider range of scales.

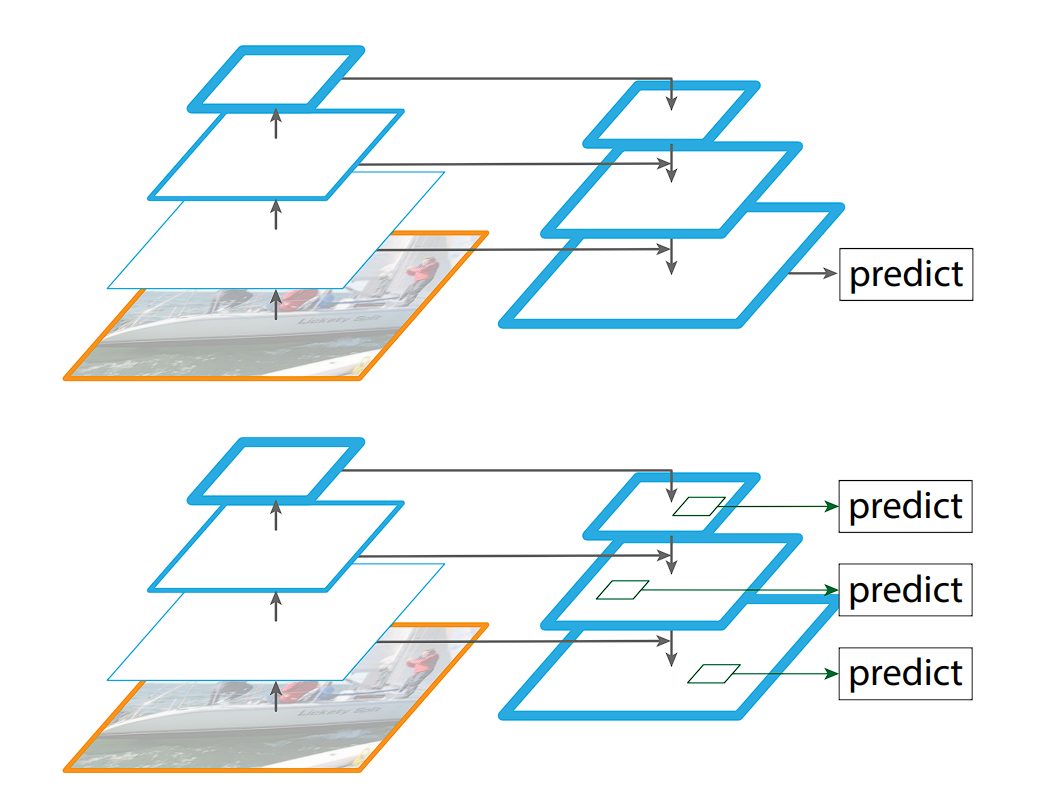

The Feature Pyramid Networks (FPN), introduced in YOLOv3, tackles object detection at various scales with a clever approach. It builds a pyramid of features, where each level captures semantic information at a different size. To achieve this, the FPN leverages a CNN that already extracts features at multiple scales. It then employs a top-down strategy: higher-resolution features from earlier layers are up-sampled and fused with lower-resolution features from deeper layers.

This creates a rich feature representation that caters to objects of different sizes within the image.

What is a Head?

The head is the final component of an object detector; it is responsible for making predictions based on the features provided by the backbone and neck. It typically consists of one or more task-specific subnetworks that perform classification, localization, instance segmentation, and pose estimation tasks.

In the end, a post-processing step, such as Non-maximum Suppression (NMS), filters out overlapping predictions and retains only the most confident detections.

YOLOX Architecture

Now that we have had an overview of YOLO models, we will look at the distinguishing features of YOLOX.

YOLOX’s creators chose YOLOv3 as a foundation because YOLOv4 and YOLOv5 pipelines relied too heavily on anchors for object detection.

The following are the features and improvements YOLOX made in comparison to previous models:

- Anchor-Free Design

- Multi positives

- Decoupled Head

- simOTA Label Assignment Strategy

- Advanced Data Augmentations

| Methods | AP (%) | Parameters | GFLOPs | Latency | FPS |

|---|---|---|---|---|---|

| YOLOv3-ultralytics2 | 44.3 | 63.00 M | 157.3 | 10.5 ms | 95.2 |

| YOLOv3 baseline | 38.5 | 63.00 M | 157.3 | 10.5 ms | 95.2 |

| +decoupled head | 39.6 (+1.1) | 63.86 M | 186.0 | 11.6 ms | 86.2 |

| +strong augmentation | 42.0 (+2.4) | 63.86 M | 186.0 | 11.6 ms | 86.2 |

| +anchor-free | 42.9 (+0.9) | 63.72 M | 185.3 | 11.1 ms | 90.1 |

| +multi positives | 45.0 (+2.1) | 63.72 M | 185.3 | 11.1 ms | 90.1 |

| +simOTA | 47.3 (+2.3) | 63.72 M | 185.3 | 11.1 ms | 90.1 |

| +NMSfree (optional) | 46.5 (-0.8) | 67.27 M | 205.1 | 13.5 ms | 74.1 |

YOLOX performance – source.

Anchor-Free Design

Unlike previous YOLO versions that relied on predefined anchors (reference boxes for bounding box prediction), YOLOX takes an anchor-free approach. This eliminates the need for hand-crafted anchors and allows the model to predict bounding boxes directly.

This approach offers advantages like:

- Flexibility: Handles objects of various shapes and sizes better.

- Efficiency: Reduces the number of predictions needed, improving processing speed.

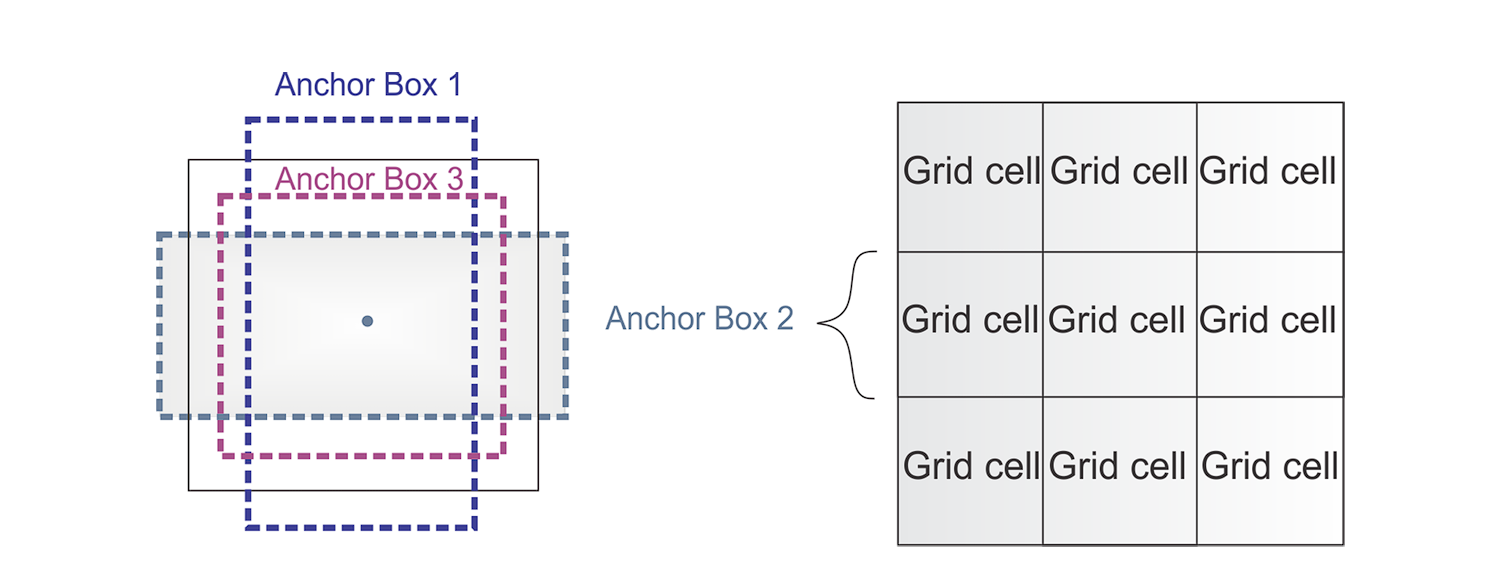

What is an Anchor?

To predict real object boundaries in images, object detection models utilize predefined bounding boxes called anchors. These anchors serve as references and are designed based on the common aspect ratios and sizes of objects found within a specific dataset.

During the training process, the model learns to use these anchors and adjust them accordingly to fit the actual objects. Instead of predicting boxes from scratch, using anchors results in fewer calculations performed.

In 2016, YOLOv2 introduced anchors, which became widely used until the emergence of YOLOX and its popularization of anchorless design. These predefined boxes served as a helpful starting point for YOLOv2, allowing it to predict bounding boxes with fewer parameters compared to learning everything from scratch. This resulted in a more efficient model. However, anchors also presented some challenges.

The anchor boxes require a lot of hyperparameters and design tweaks. For example,

- Number of anchors

- Size of the anchors

- The aspect ratio of the boxes

- A large number of anchor boxes to capture all the different sizes of objects

YOLOX improved the architecture by retiring anchors, but to compensate for the lack of anchors, YOLOX utilized a center sampling technique.

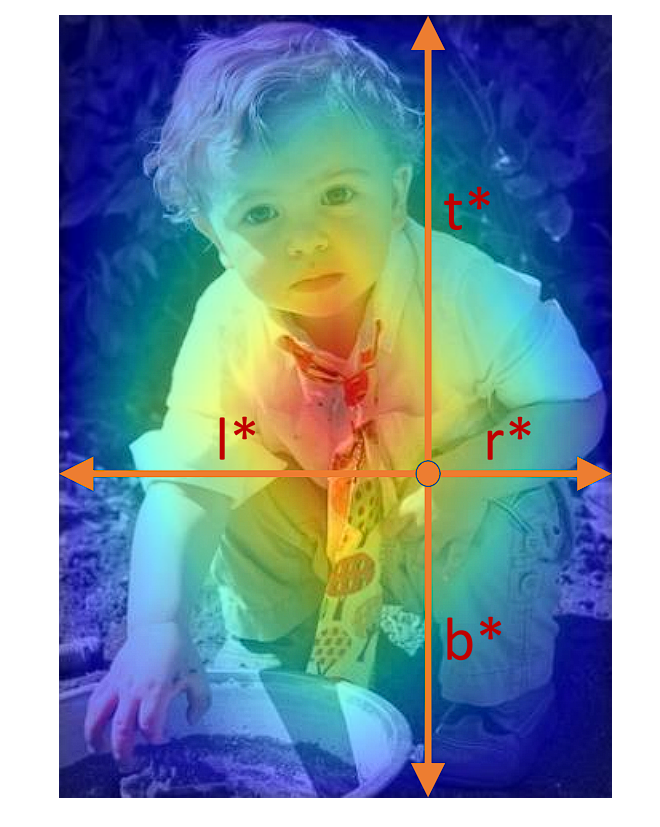

Multi Positives

During the training of the object detector, the model considers a bounding box positive based on its Intersection over Union (IoU) with the ground-truth box. This method can include samples not centered on the object, degrading model performance.

Center sampling is a technique aimed at enhancing the selection of positive samples. It focuses on the spatial relationship between the centers of candidate and ground-truth boxes. In this method, positives are selected only if the positive sample’s center falls within a defined central region of the ground-truth box (bounding of the correct image). In the case of YOLOX, it is a 3 x 3 box.

This approach ensures better alignment and centering on objects, leading to more discriminative feature learning, reduced background noise influence, and improved detection accuracy.

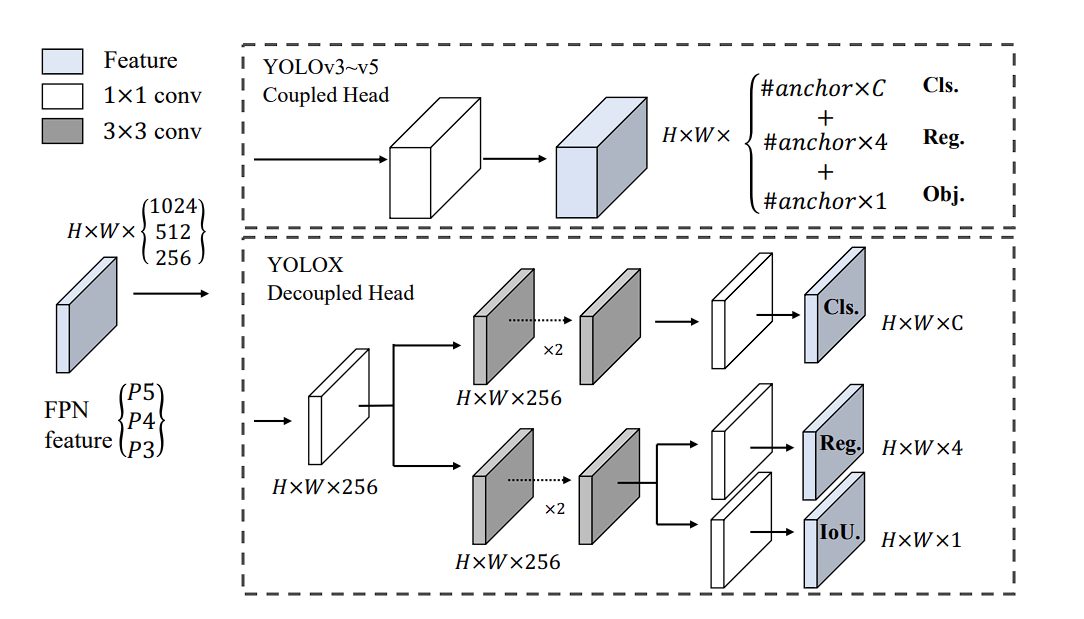

What is a Decoupled Head?

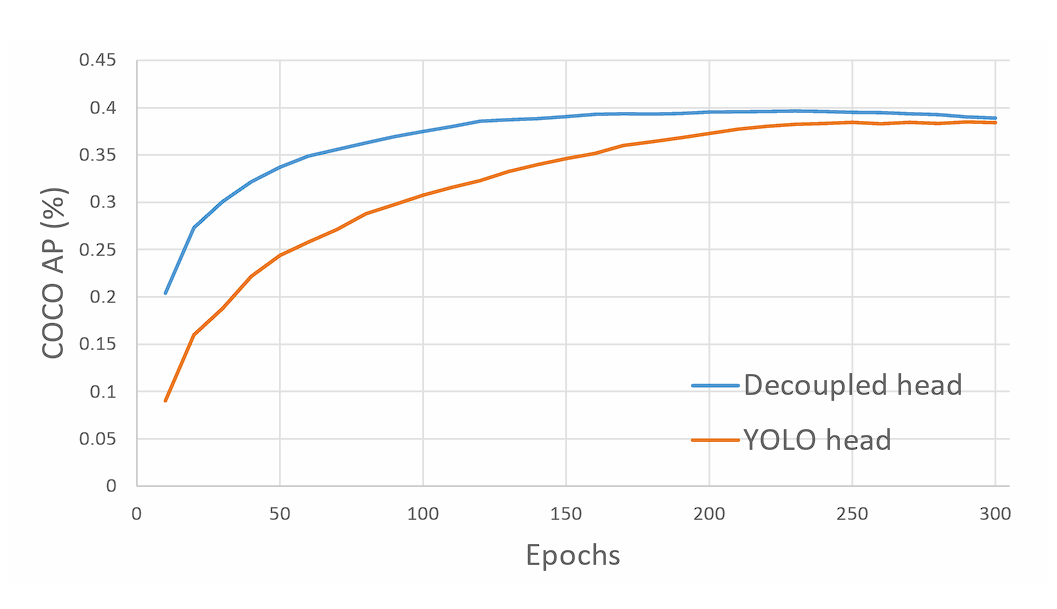

YOLOX utilizes a decoupled head, a significant departure from the single-head design in the previous YOLO models.

In traditional YOLO models, the head predicts object classes and bounding box coordinates using the same set of features. This approach simplified the architecture back in 2015, but it had a drawback. It can lead to suboptimal performance, since classification and localization of the object was performed using the same set of extracted features, and thus leads to conflict. Therefore, YOLOX introduced a decoupled head.

The decoupled head consists of two separate branches:

- Classification Branch: Focuses on predicting the class probabilities for each object in the image.

- Regression Branch: Concentrates on predicting the bounding box coordinates and dimensions for the detected objects.

This separation allows the model to specialize in each task, leading to more accurate predictions for both classification and bounding box regression. Moreover, doing so leads to faster model convergence.

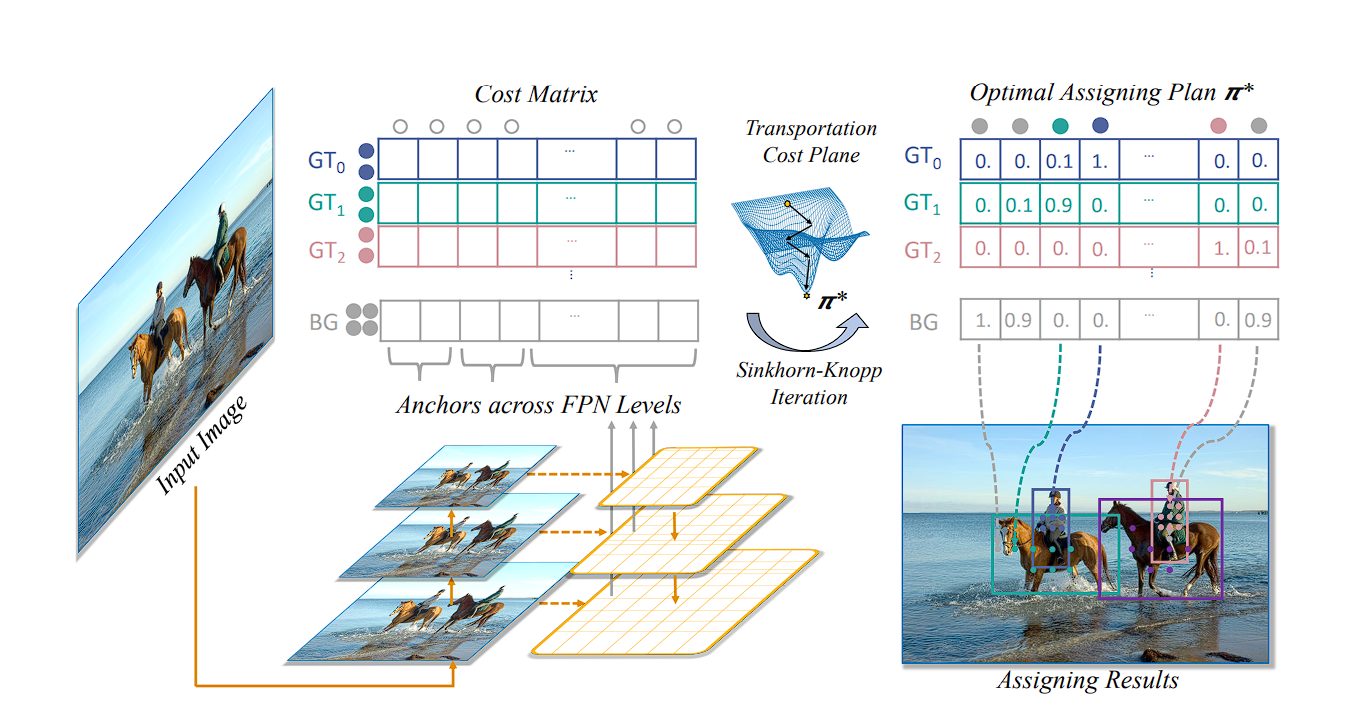

simOTA Label Assignment Strategy

During training, the object detector model generates many predictions for objects in an image, assigning a confidence value to each prediction. SimOTA dynamically identifies which predictions correspond to actual objects (positive labels) and which don’t (negative labels) by finding the best label.

Traditional methods like IoU take a different approach. Here, each predicted bounding box is compared to a ground truth object based on its Intersection over Union (IoU) value. A prediction is considered a good one (positive) if its IoU with a ground truth box exceeds a certain threshold, typically 0.5. Conversely, predictions with IoU below this threshold are deemed poor predictions (negative).

The SimOTA approach not only reduces training time but also improves model stability and performance by ensuring a more accurate and context-aware assignment of labels.

An important thing to note is that simOTA is performed only during training, not during inference.

Advanced-Data Augmentations

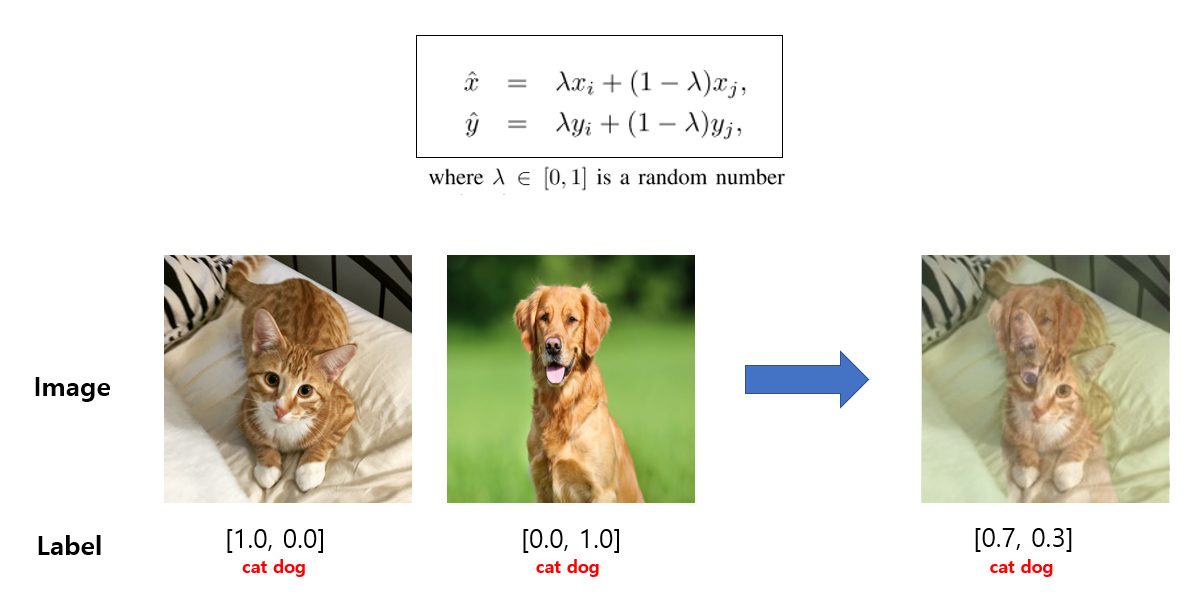

YOLOX leverages two powerful data augmentation techniques:

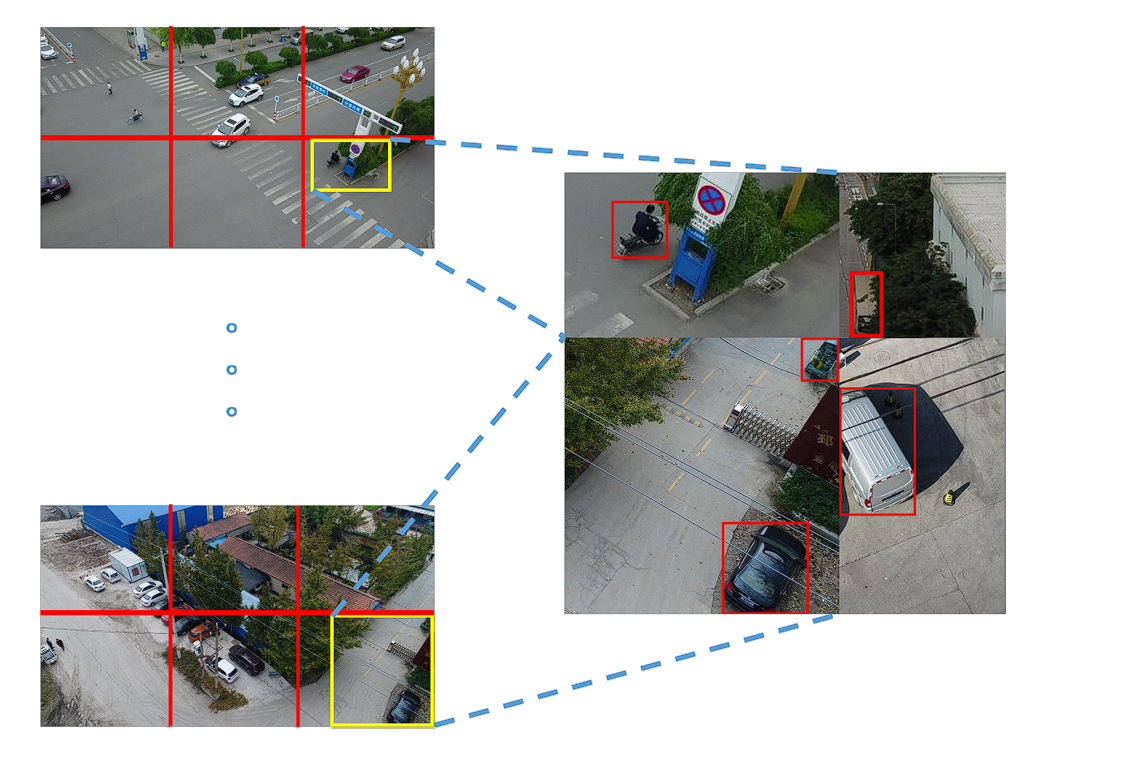

- MosaicData augmentation: This technique randomly combines four training images into a single image. By creating these “mosaic” images, the model encounters a wider variety of object combinations and spatial arrangements, improving its generalization ability to unseen data.

- MixUp Data Augmentation: This technique blends two training images and their corresponding labels to create a new training example. This “mixing up” process helps the model learn robust features and improve its ability to handle variations in real-world images.

Performance and Benchmarks of YOLOX

YOLOX, with its decoupled head, anchor-free detection design, and advanced label assignment strategy, achieves a score of 47.3% AP (Average Precision) on the COCO dataset. It also comes in different versions (e.g., YOLOX-s, YOLOX-m, YOLOX-l) designed for different trade-offs between speed and accuracy, with YOLOX-Nano being the lightest variation of YOLOX.

| Models | AP (%) | Parameters | GLOPs | Latency |

|---|---|---|---|---|

| YOLOv5-S | 36.7 | 7.3 M | 17.1 | 8.7 ms |

| YOLOX-S | 39.6 (+2.9) | 9.0 M | 26.8 | 9.8 ms |

| YOLOv5-M | 44.5 | 21.4 M | 51.4 | 11.1 ms |

| YOLOX-M | 46.4 (+1.9) | 25.3 M | 73.8 | 12.3 ms |

| YOLOv5-L | 48.2 | 47.1 M | 115.6 | 13.7 ms |

| YOLOX-L | 50.0 (+1.8) | 54.2 M | 155.6 | 14.5 ms |

| YOLOv5-X | 50.4 | 87.8 M | 219.0 | 16.0 ms |

| YOLOX-X | 51.2 (+0.8) | 99.1 M | 281.9 | 17.3 ms |

YOLOX benchmark – source.

All the YOLO model scores are based on the COCO dataset and tested at 640 x 640 resolution on Tesla V100. Only YOLO-Nano and YOLOX-Tiny were tested at a resolution of 416 x 461.

| Models | AP (%) | Parameters | GLOPs |

|---|---|---|---|

| YOLOv4-Tiny [30] | 21.7 | 6.06 M | 6.96 |

| PPYOLO-Tiny | 22.7 | 4.20 M | – |

| YOLOX-Tiny | 32.8 (+10.1) | 5.06 M | 6.45 |

| NanoDet3 | 23.50 | 0.95 M | 1.20 |

| YOLOX-Nano | 25.3 (+1.8) | 0.91 M | 1.08 |

YOLOX lighter models benchmark – source.

What is AP?

In object detection, Average Precision (AP), also known as Mean Average Precision (mAP), serves as a key benchmark. A higher AP score indicates a better-performing model. This metric allows us to directly compare the effectiveness of different object detection models.

How does AP work?

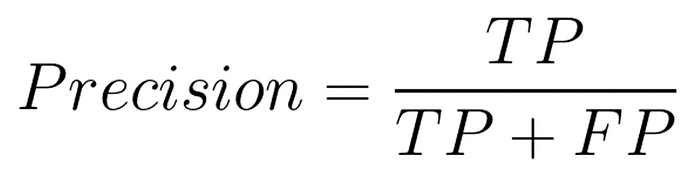

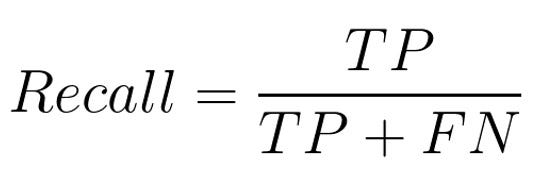

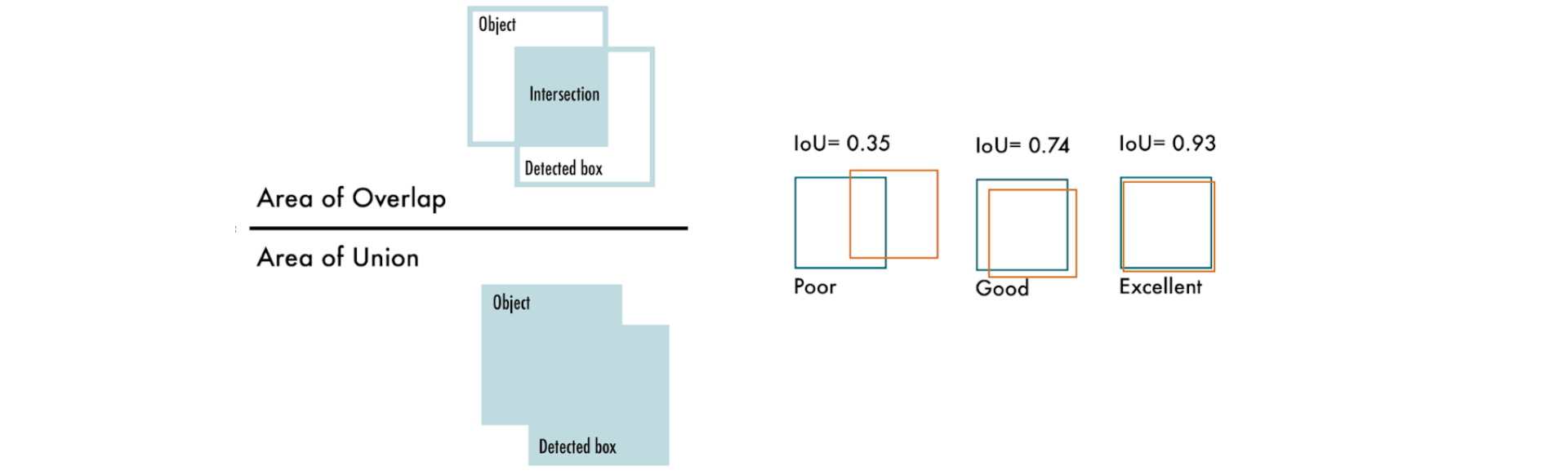

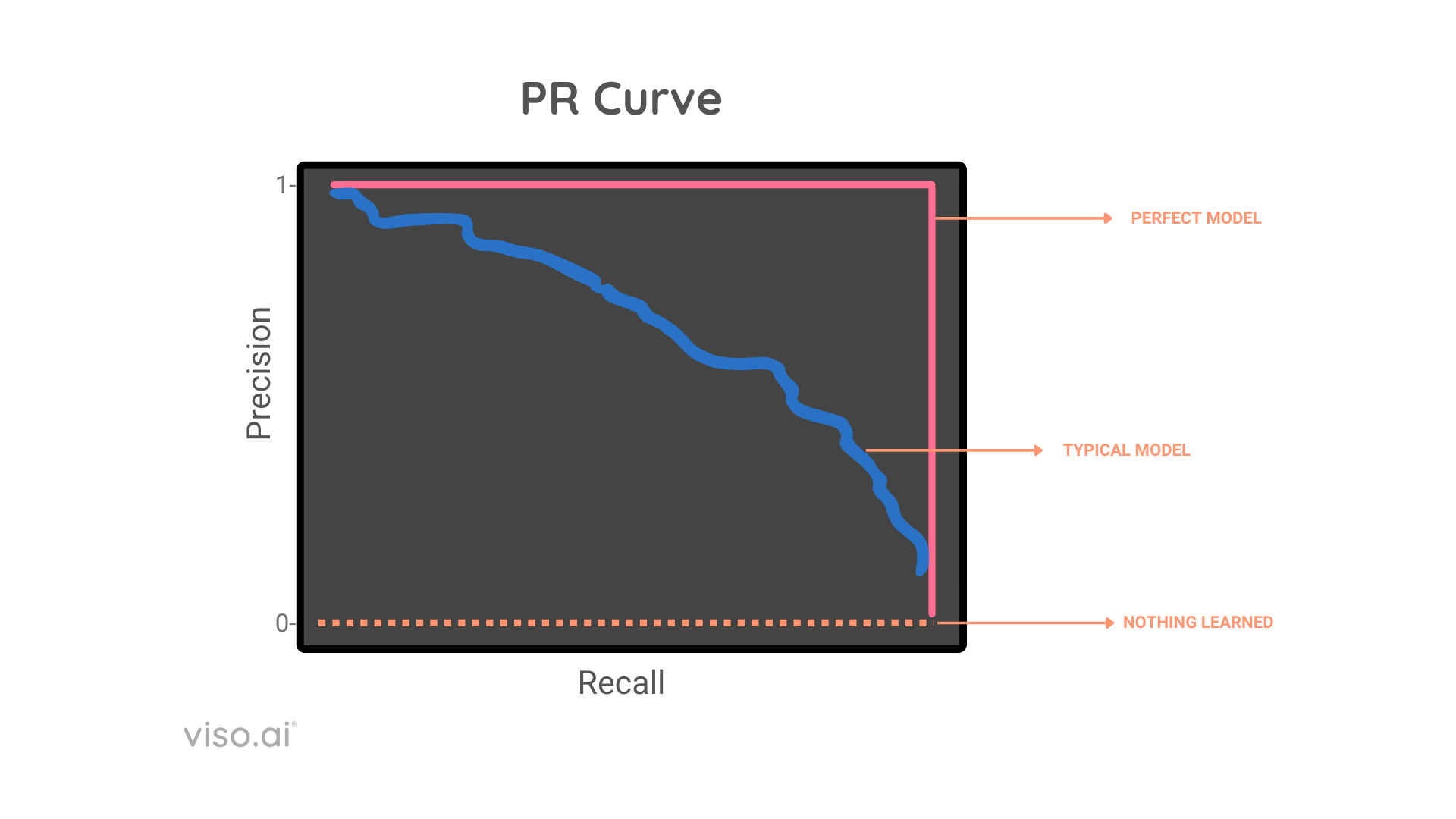

AP summarizes the Precision-Recall Curve (PR Curve) for a model into a single number between 0 and 1, calculated on several metrics like intersection over union (IoU), precision, and recall.

There exists a tradeoff between precision and recall, AP handles this by considering the area under the precision recall curve, and then it takes each pair of precision and recall, and averages them out to get mean average precision mAP.

- Precision: This refers to the proportion of correctly classified positive cases (True Positives) out of all the cases the model predicts as positive (True Positives + False Positives). It denotes how accurate your model is

- Recall: Recall represents the proportion of correctly identified positive cases (True Positives) out of all the actual positive cases present in the data (True Positives + False Negatives). Recall reflects if the model is complete or not (doesn’t leave out the correct values).

- Intersection over Union(IoU): IoU is a measure of how well a predicted bounding box overlaps with the ground truth bounding box for an object.

Intersection over Union – source

How To Choose The Right Model?

The question of whether you should use YOLOX in your project, or application comes down to several key factors.

- Accuracy vs. Speed Trade-off: Different versions of YOLO offer varying balances between detection accuracy and inference speed. For instance, later models like YOLOv8 and YOLOV9 improve accuracy and speed, but since are new, they lack community support.

- Hardware Constraints: Hardware is a key factor when choosing the right YOLO model. Some versions of YOLOX, especially the lighter models like YOLOX-Nano, are optimized for smartphones, however, they offer lower AP%.

- Model Size and Computational Requirements: Evaluate the model size and the computational complexity (measured in FLOPs – Floating Point Operations Per Second) of the YOLO version you’re considering.

- Community Support and Documentation: Given the rapid development of the YOLO family, it’s crucial to consider the level of community support and documentation available for each version. A well-supported model with comprehensive documentation and an extensive community is crucial.

Applications of YOLOX Architecture

YOLOX is capable of object detection in real-time, makes it a valuable tool for various practical applications, including:

- Real-time object detection: YOLO’s real-time object detection capabilities have been invaluable in autonomous vehicle systems, enabling quick identification and tracking of various objects such as vehicles, pedestrians, bicycles, and other obstacles. These capabilities have been applied in numerous fields, including action recognition in video sequences for surveillance, sports analysis, and human-computer interaction.

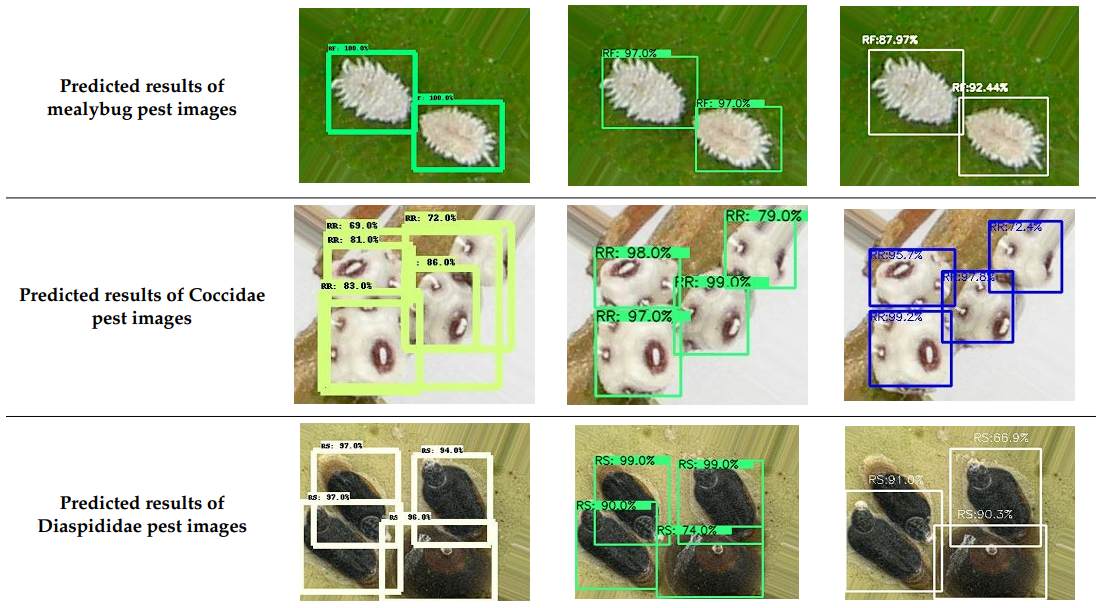

- Agriculture Industry: YOLO models have been used in agriculture to detect and classify crops, pests, and diseases, assisting in precision agriculture techniques and automating farming processes.

object-detection-agriculture –source

- Medical and Health Industry: In the medical field, YOLO has been employed for cancer detection, skin segmentation, and pill identification, leading to improved diagnostic accuracy and more efficient treatment processes.

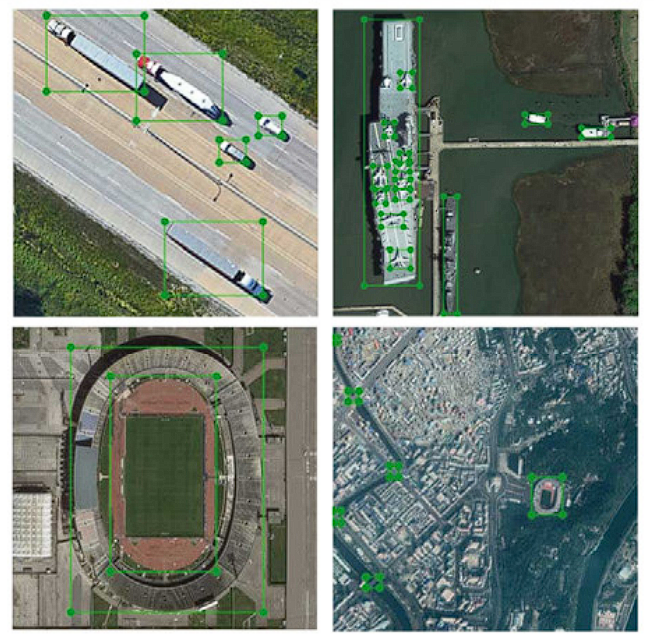

- Remote sensing: YOLO has been used for object detection and classification in satellite and aerial imagery, aiding in land mapping, urban planning, and environmental monitoring.

Remote Sensing – source - Traffic Application: YOLO can be utilized for tasks such as license plate detection and traffic sign recognition, contributing to the development of intelligent transportation systems and traffic management solutions.

Traffic detection application – source - Retail industry (inventory management, product identification): YOLOX can be used in stores to automate inventory management by identifying and tracking products on shelves. Customers can also use it for self-checkout systems where they scan items themselves.

Challenges and Future of YOLOX

- Generalization across Diverse Domains: Although YOLOX performs well on a variety of datasets, its performance can still vary depending on the specific characteristics of the dataset it is trained on. Fine-tuning and customization are necessary to achieve optimal performance on datasets with unique characteristics, such as uncommon object sizes, densities, or highly specific domains.

- Adaptation to New Classes or Scenarios: YOLOX is capable of detecting multiple object classes however, adapting the model to new classes or significantly different scenarios requires training data, which is often a difficult task to perform correctly.

- Handling of Extremely Small or Large Objects: Despite improvements over its predecessors, detecting extremely small or large objects within the same image can still pose challenges for YOLOX. This is a common limitation of many object detection models, which may require specialized architectural tweaks or additional processing steps to address effectively.