The developers of Detectron2 are Meta’s Facebook AI Research (FAIR) team, who have stated that “Our goal with Detectron2 is to support the wide range of cutting-edge object detection and segmentation models available today, but also to serve the ever-shifting landscape of cutting-edge research.”

Detectron2 is a deep learning library built on the PyTorch framework, and it is widely regarded as one of the most capable modular object detection libraries available. According to Meta, Detectron2 was created to support the research needs of Facebook AI under the aegis of FAIR teams. That said, it is open source, publicly available, and has been widely adopted in the developer community.

This kind of technology has many notable applications. Several toolsets exist for this type of object recognition, but Detectron2 is one of the most popular.

Detectron2: What’s Inside?

Structure

Detectron2 is written in PyTorch, whereas the original Detectron was built on Caffe2. Caffe2 has since been merged into PyTorch, which makes that difference largely moot. Additionally, Detectron2 offers several backbone options, including ResNet, ResNeXt, and MobileNet.

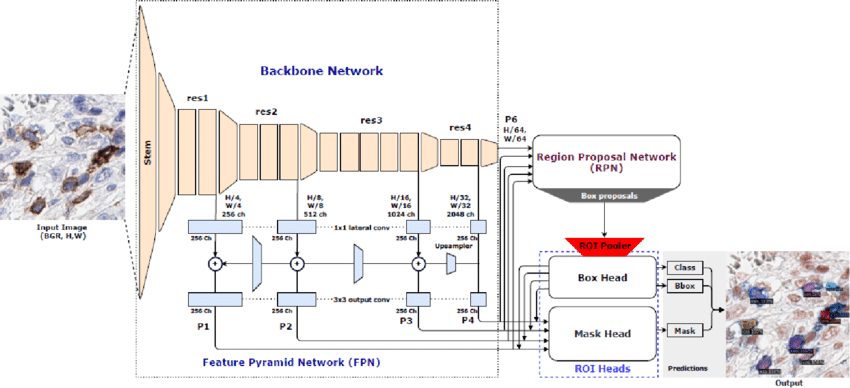

The three main structures to point out in the Detectron2 architecture are as follows:

- Backbone Network: The Detectron2 backbone network extracts feature maps at different scales from the input image.

- Region Proposal Network (RPN): detects candidate object regions from the multi-scale features.

- ROI Heads: Region of Interest heads process the feature maps for the selected regions of the image. They crop and resize the features inside each proposal box to a fixed size, then refine the box positions and predict class labels through fully connected layers.

As successive CNN layers downsample the image, the backbone produces feature maps at progressively coarser resolutions, and task-specific heads turn those features into outputs. R-CNNs use bounding boxes to delineate parts of an image and help with object detection. Detectron2 supports what’s called two-stage detection: the first stage proposes candidate regions, and the second stage classifies and refines them. As a practical source of image processing capabilities, Detectron2 takes labeled image datasets and teaches a model to “look” at them and “see” things.
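To make the idea of multi-scale feature maps concrete, here is a minimal sketch in plain Python. It assumes a feature pyramid with levels named p2 to p6 and strides of 4 to 64, which is a common setup for FPN-based configs; the function name and the example image size are illustrative only.

```python
import math

# Assumed strides for pyramid levels p2..p6 (typical of FPN-based configs).
FPN_STRIDES = {"p2": 4, "p3": 8, "p4": 16, "p5": 32, "p6": 64}


def feature_map_sizes(height, width, strides=FPN_STRIDES):
    """Return the approximate (height, width) of each feature map."""
    return {
        name: (math.ceil(height / stride), math.ceil(width / stride))
        for name, stride in strides.items()
    }


# Hypothetical input size, chosen only for illustration.
sizes = feature_map_sizes(800, 1216)
for name, (h, w) in sizes.items():
    print(f"{name}: {h} x {w}")
```

Each level halves the resolution of the previous one, which is why small objects tend to be picked up on the finer maps and large objects on the coarser ones.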

Key Features

- Modular Design: Detectron2’s modular architecture allows users to easily experiment with model architectures, loss functions, and training techniques.

- High Performance: Detectron2 achieves state-of-the-art performance on benchmarks such as COCO (Common Objects in Context), which contains over 1.5 million object instances and 250,000 people annotated with keypoints, and LVIS (Large Vocabulary Instance Segmentation).

- Support for Custom Datasets: The Detectron2 framework provides tools for working with custom datasets.

- Pre-trained Models: Detectron2’s model zoo comes with a collection of pre-trained models for each computer vision task supported (see the full list for each computer vision task below).

- Efficient Inference: Detectron2 includes optimizations for efficient inference, meaning that it performs well for deployment in production environments with real-time or low-latency requirements.

- Active Development Community: Due to its open-source nature, Detectron2 has an active development community with contributions from users around the world.

Detectron2 Computer Vision Tasks

The Detectron2 model can perform several computer vision tasks, including:

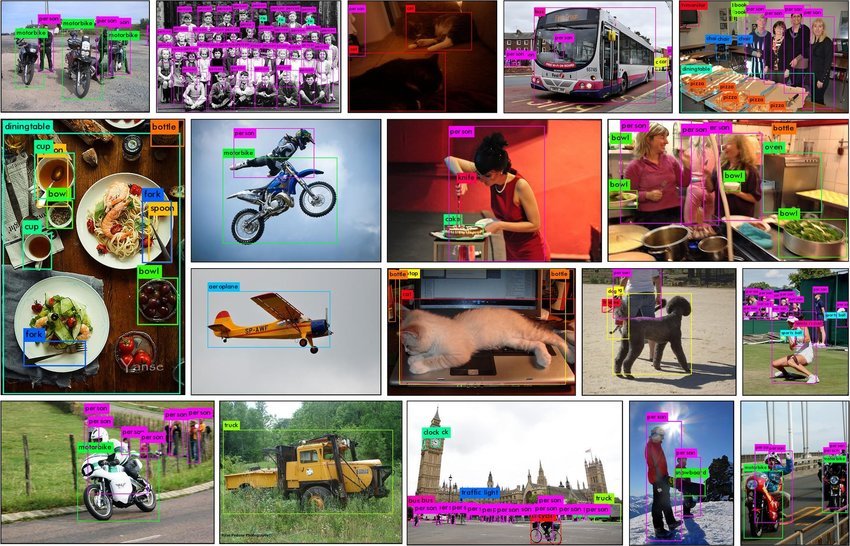

- Object Detection: identifying and localizing objects with bounding boxes.

- Semantic Segmentation: assigning each pixel in an image a class label for precise delineation and understanding of a scene’s objects and regions.

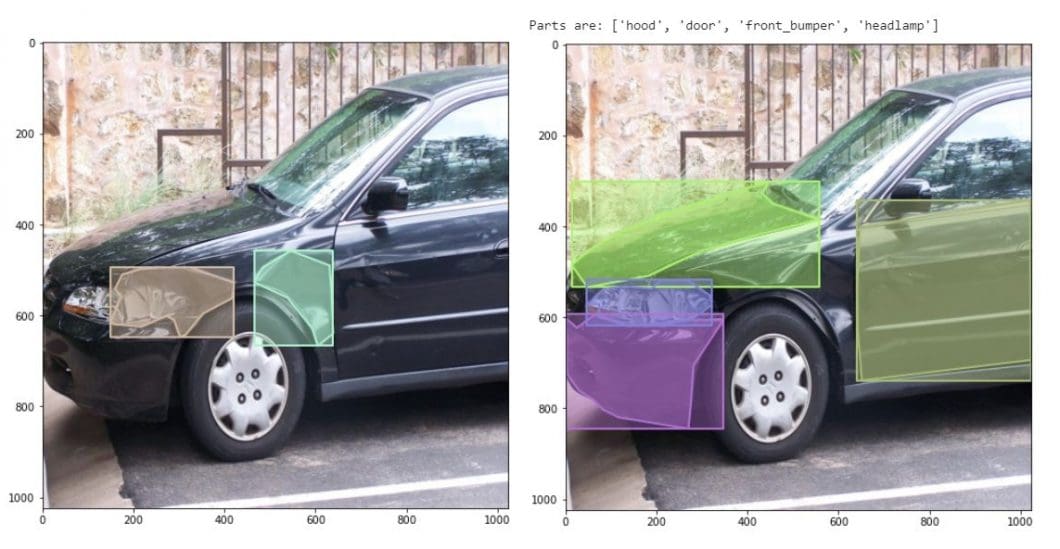

- Instance Segmentation: identifying and delineating individual objects within an image, assigning a label to each instance, and outlining boundaries.

- Panoptic Segmentation: combining semantic and instance segmentation, providing a more in-depth analysis of the scene by labeling each object instance and background regions by class.

- Keypoint Detection: identifying and localizing points or features of interest within an image.

- DensePose Estimation: assigning dense relations between points on the surface of objects and pixels in an image for a detailed understanding of object geometry and texture.

Below is a list of pre-trained models for each computer vision task provided in the Detectron2 model zoo.

| Object Detection | Instance Segmentation | Semantic Segmentation | Panoptic Segmentation | Keypoint Detection | DensePose Estimation |
|---|---|---|---|---|---|
| Faster R-CNN | Mask R-CNN | DeepLabv3+ | Panoptic FPN | Keypoint R-CNN | DensePose R-CNN |
| TridentNet | PointRend | | | | |
| RetinaNet | | | | | |
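As a rough illustration of how a pre-trained model from the zoo might be loaded, the sketch below maps a few tasks to model zoo config paths and builds a predictor. The `ZOO_CONFIGS` dictionary and the `build_predictor` helper are hypothetical names introduced here; the Detectron2 imports sit inside the function so the mapping can be inspected even where Detectron2 is not installed.

```python
# Hypothetical task-to-config mapping; the paths follow the model zoo layout.
ZOO_CONFIGS = {
    "object_detection": "COCO-Detection/faster_rcnn_R_50_FPN_3x.yaml",
    "instance_segmentation": "COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml",
    "keypoint_detection": "COCO-Keypoints/keypoint_rcnn_R_50_FPN_3x.yaml",
    "panoptic_segmentation": "COCO-PanopticSegmentation/panoptic_fpn_R_50_3x.yaml",
}


def build_predictor(task, score_threshold=0.5, device="cpu"):
    """Build a predictor for one of the tasks above (requires Detectron2)."""
    from detectron2 import model_zoo
    from detectron2.config import get_cfg
    from detectron2.engine import DefaultPredictor

    config_path = ZOO_CONFIGS[task]
    cfg = get_cfg()
    cfg.merge_from_file(model_zoo.get_config_file(config_path))
    # Download the matching pre-trained weights from the model zoo.
    cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url(config_path)
    # This threshold applies to the ROI heads of R-CNN style models.
    cfg.MODEL.ROI_HEADS.SCORE_THRESH_TEST = score_threshold
    cfg.MODEL.DEVICE = device
    return DefaultPredictor(cfg)
```

Calling `build_predictor("instance_segmentation")` would then return an object that can be applied to a BGR image array, assuming Detectron2 and its dependencies are available.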

Open-Source

Because Detectron2 is open source, it shows up at hackathons, on GitHub, and wherever developers show off their projects. It is one of many AI advances that are front and center right now as individual scientists and teams make diverse use of AI to explore what’s possible today.

Built on CNNs, labeled data, and targeted classification, Detectron2 packages the image detection technology designed by Meta at a time when this field is advancing in leaps and bounds.

Detectron2 Training Techniques

In developing projects with Detectron2, it’s useful to look at how developers typically work. One of the first steps is registering and downloading training data and getting it into the system. Another key task is addressing dependencies related to Detectron2.

Returning to the training data, it’s often useful to load the COCO dataset and work from there. Developers will want to figure out how to visualize training data: open-source tools like FiftyOne can also help develop an overall training data strategy. Some seasoned users also point out the value of class handling and validation to prevent class imbalance. In other words, they make sure that each desired class for a project is represented in the training dataset, and represented well!
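A quick way to check class balance is to count annotated instances per class. The sketch below assumes the dataset is a list of dictionaries, each with an `annotations` list whose entries carry a `category_id`, in the style of Detectron2 dataset dicts. The helper names and the toy data are made up for illustration.

```python
from collections import Counter


def count_instances_per_class(dataset_dicts, class_names):
    """Count annotated instances for every class, including empty ones."""
    counts = Counter(
        ann["category_id"]
        for record in dataset_dicts
        for ann in record.get("annotations", [])
    )
    return {name: counts.get(i, 0) for i, name in enumerate(class_names)}


def find_underrepresented(per_class, min_instances):
    """Return the class names that have fewer than min_instances examples."""
    return sorted(name for name, n in per_class.items() if n < min_instances)


# Toy data, invented for this example.
toy_dataset = [
    {"file_name": "a.jpg", "annotations": [{"category_id": 0}, {"category_id": 0}]},
    {"file_name": "b.jpg", "annotations": [{"category_id": 1}]},
]
class_names = ["person", "car", "dog"]

per_class = count_instances_per_class(toy_dataset, class_names)
print(per_class)
print(find_underrepresented(per_class, min_instances=2))
```

Classes that come back as underrepresented are candidates for collecting more images or for resampling before training.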

Later, there’s the imperative of performance evaluation and experiment tracking. So, how can you evaluate the results of a Detectron2 project? One resource is COCOEvaluator, which computes the average precision (AP) of model results. It’s also possible to parse the inputs and outputs manually to get an idea of how well Detectron2 worked. Code samples are available on GitHub, which is useful for someone starting with Detectron2 who wants to look at multiple examples.
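For anyone parsing outputs by hand, the basic building block behind average precision is intersection over union (IoU) between a predicted box and a ground-truth box. The sketch below is a plain Python version that assumes boxes are given as (x1, y1, x2, y2) in absolute pixels; it is not Detectron2’s own implementation.

```python
def box_iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap, clamped to zero for disjoint boxes.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


# Two boxes that overlap on half of their width.
print(box_iou((0, 0, 10, 10), (5, 0, 15, 10)))
```

A prediction is usually counted as correct when its IoU with a ground-truth box of the same class exceeds a chosen threshold, and COCO-style AP averages results over several such thresholds.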

Part of the process involves trial and error: it’s also helpful to look at how the model treats different types of images (people, animals, objects, etc.) regardless of what particular data set will be the focus of a given project.

Starting With Detectron2

Step 1: Install Dependencies

- Install PyTorch: using a pip package with specific versions depending on system and hardware requirements.

- Install Torchvision: using a pip package as well.

Step 2: Install Detectron2

- Clone the Detectron2 GitHub repository: use Git to clone the Detectron2 repository from GitHub to receive access to source code and latest updates. Clone the Detectron2 GitHub repo using the following command:

git clone https://github.com/facebookresearch/detectron2.git

- Or, install the pip package: installing the pip package will ensure that you’re using the latest version.
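After Steps 1 and 2, it can help to confirm that the packages are visible to the Python interpreter you plan to use. The following is a small sketch that relies only on the standard library; the `check_installed` helper is a name introduced here for illustration.

```python
import importlib.util

# Top-level packages that the steps above are expected to install.
REQUIRED = ("torch", "torchvision", "detectron2")


def check_installed(packages=REQUIRED):
    """Map each package name to True if it can be found, else False."""
    return {
        name: importlib.util.find_spec(name) is not None
        for name in packages
    }


status = check_installed()
for name, found in status.items():
    print(f"{name}: {'found' if found else 'missing'}")
```

If any entry is reported as missing, revisit the matching install step before moving on to the dataset.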

Step 3: Set Up the Dataset

- Prepare dataset: Organize the dataset into the required structure and format compatible with Detectron2’s data-loading utilities.

- Convert data to Detectron2 format
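As one possible way to approach the conversion, the sketch below turns simple per-image records into a list of dictionaries with the fields Detectron2’s data loaders expect, and then registers them. The input record layout, the function names, and the choice of XYWH boxes are assumptions for this example; the Detectron2 imports are kept inside the registration function.

```python
def to_dataset_dicts(records, bbox_mode):
    """Convert simple records into a list of Detectron2-style dataset dicts."""
    dataset = []
    for image_id, record in enumerate(records):
        annotations = [
            {
                "bbox": list(obj["bbox"]),
                "bbox_mode": bbox_mode,
                "category_id": obj["category_id"],
            }
            for obj in record["objects"]
        ]
        dataset.append(
            {
                "file_name": record["file_name"],
                "image_id": image_id,
                "height": record["height"],
                "width": record["width"],
                "annotations": annotations,
            }
        )
    return dataset


def register_dataset(name, records, class_names):
    """Register the records under a dataset name (requires Detectron2)."""
    from detectron2.data import DatasetCatalog, MetadataCatalog
    from detectron2.structures import BoxMode

    # Assumes boxes are stored as (x, y, width, height) in absolute pixels.
    DatasetCatalog.register(
        name, lambda: to_dataset_dicts(records, BoxMode.XYWH_ABS)
    )
    MetadataCatalog.get(name).set(thing_classes=list(class_names))
```

A call such as `register_dataset("my_dataset_train", records, ["person", "car"])` would make the dataset available by name in the config, where `my_dataset_train` is a placeholder.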

Step 4: Create Config File

- Customize config file: define model architecture (Faster R-CNN, Mask R-CNN), specify hyperparameters (e.g., learning rate, batch size), and provide paths to dataset and other necessary resources.

- Fine-tune configurations: based on your requirements.
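Configuration in Detectron2 is typically done by starting from a base config and overriding a few keys. The sketch below shows one way this might look; the override values, the dataset name `my_dataset_train`, and the helper names are placeholders rather than recommended settings.

```python
def overrides_to_list(overrides):
    """Flatten {"KEY": value} into the [key, value, ...] list form."""
    flat = []
    for key, value in overrides.items():
        flat.extend([key, value])
    return flat


# Placeholder values for illustration; tune them for your own project.
EXAMPLE_OVERRIDES = {
    "DATASETS.TRAIN": ("my_dataset_train",),
    "DATASETS.TEST": (),
    "SOLVER.IMS_PER_BATCH": 2,
    "SOLVER.BASE_LR": 0.00025,
    "SOLVER.MAX_ITER": 300,
    "MODEL.ROI_HEADS.NUM_CLASSES": 3,
}


def build_cfg(base_config, overrides):
    """Load a model zoo config and apply overrides (requires Detectron2)."""
    from detectron2 import model_zoo
    from detectron2.config import get_cfg

    cfg = get_cfg()
    cfg.merge_from_file(model_zoo.get_config_file(base_config))
    # Start from pre-trained weights rather than random initialization.
    cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url(base_config)
    cfg.merge_from_list(overrides_to_list(overrides))
    return cfg
```

Keeping the overrides in one dictionary makes it easier to log exactly which hyperparameters were used for a given experiment.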

Step 5: Training or Inference

- Train model: use the configured settings and dataset to train a model, either from scratch or by fine-tuning pre-trained weights from the model zoo.

- Perform inference: generate predictions, such as object detections or image segmentations, depending on the task.
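The two bullets above could be sketched roughly as follows. This assumes a `cfg` object prepared as in Step 4 and an image readable by OpenCV; the function names are hypothetical, and the Detectron2 and OpenCV imports are kept inside the functions that need them.

```python
def train(cfg):
    """Run a default training loop (requires Detectron2)."""
    import os
    from detectron2.engine import DefaultTrainer

    os.makedirs(cfg.OUTPUT_DIR, exist_ok=True)
    trainer = DefaultTrainer(cfg)
    trainer.resume_or_load(resume=False)
    trainer.train()


def predict(cfg, image_path):
    """Return (scores, class_ids) for one image (requires Detectron2, OpenCV)."""
    import cv2
    from detectron2.engine import DefaultPredictor

    predictor = DefaultPredictor(cfg)
    image = cv2.imread(image_path)  # BGR array, the default input format
    instances = predictor(image)["instances"].to("cpu")
    return instances.scores.tolist(), instances.pred_classes.tolist()


def summarize(scores, class_ids, class_names, threshold=0.5):
    """Pair class names with scores, keeping detections above the threshold."""
    return [
        (class_names[class_id], round(score, 3))
        for score, class_id in zip(scores, class_ids)
        if score >= threshold
    ]
```

For example, `summarize(*predict(cfg, "street.jpg"), class_names)` would give a short, readable list of detections, where `street.jpg` is a placeholder file name.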

Step 6: Evaluation and Fine-tuning

Evaluate model performance to inform fine-tuning and adjusting of hyperparameters as needed.

Step 7: Deployment

Deploy Detectron2 in your application or system and ensure that the deployment environment meets hardware and software requirements for running the models efficiently.

Community Resources

Detectron2’s open-source nature gives many people opportunities to use this type of object detection interface for a wide variety of applications. Part of what’s exciting about Detectron2 is the still-emerging capability to use these segmentation tools on your data.

FAIR provides some more resources for Detectron2 users and explanations of how to approach its use for relevant projects. Tutorials and explainers can also be helpful. As for additional data set options, sources include Cityscapes, LVIS, and PASCAL VOC.

At the end of the day, Detectron2 remains a powerful resource for object detection programs and for projects that build this capability into broader designs. Meta’s pioneering work contributes to the wider development community in this way, and everyone benefits from the independent use cases that follow. In this field of image processing, this is one component to watch.

Detectron2 Real-World Applications

Practically speaking, the real-world applications of Detectron2 are nearly endless.

- Autonomous Driving: In self-driving or semi-autonomous car systems, Detectron2 can help to identify pedestrians, road signs, roadways, and vehicles with precision. Tying object detection and recognition to other elements of AI provides a lot of assistance to human drivers, whether or not fully autonomous driving applications are “ready for prime time.”

- Robotics: A robot’s capabilities are only as good as its computer vision. The ability of robots to move pieces or materials, navigate complex environments, or carry out processing or packaging tasks depends on what the computer can extract from image data. With that in mind, Detectron2 can serve as a core part of the robotics frameworks for AI cognition that make these robots such effective helpers.

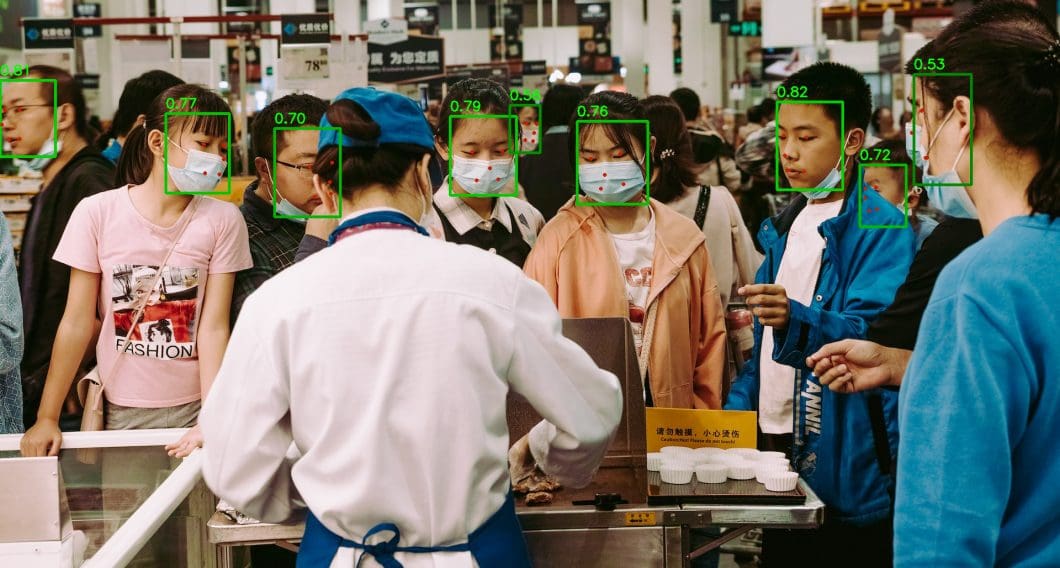

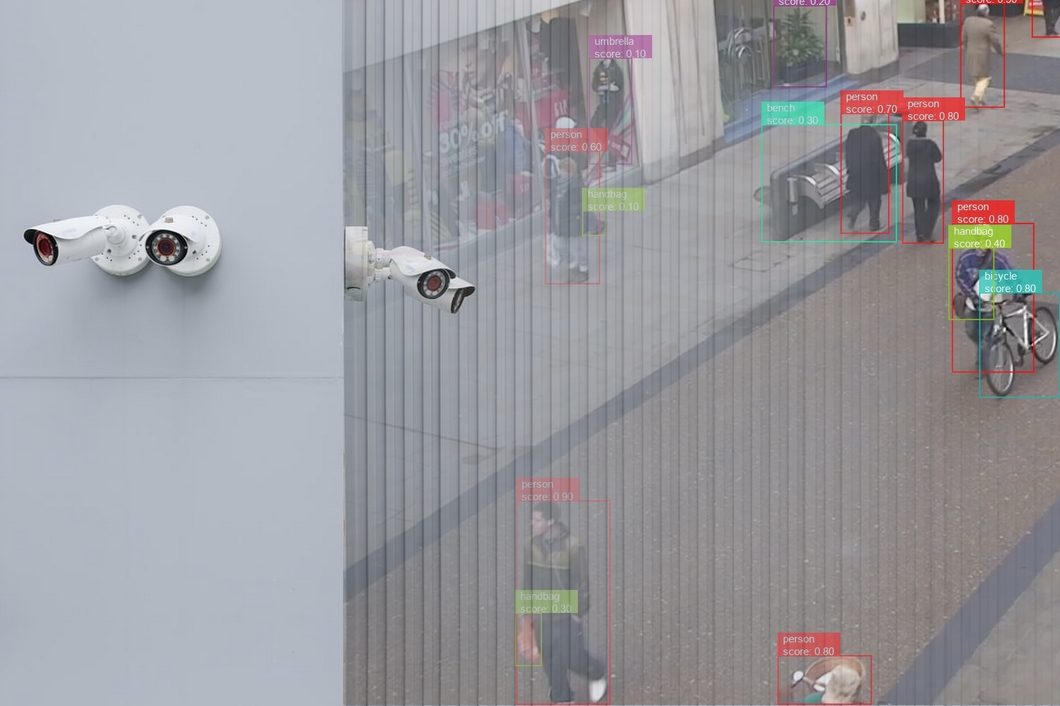

- Security: As you can imagine, Detectron2 is also critically helpful in security imaging. A major part of AI security systems involves monitoring and filtering through image data. The better AI can do this, the more capable it is in identifying threats and suspicious activity.

- Safety Fields: In safety fields, Detectron2 results can help to prevent emergencies, for example, in forest fire monitoring or flood research. There are also many maritime industry and biology research applications. Object detection technology helps researchers to observe natural systems and measure progress toward their objectives.

- Education: Detectron2 can be a powerful example for teaching what AI is capable of. As students start to understand how AI entities “see” and make sense of the world around them, it prepares them to live in a world where we increasingly coexist and interact with intelligent machines.

What’s Next With Detectron2?

To recap, Detectron2 uses a specific kind of region-based CNN with algorithmic tools to process objects in an image. The use of the pre-supplied COCO data set takes some of the design guesswork out of the equation. This also eliminates some of the labor-intensive processes needed to generate and work with training sets. Many other sets can be used to train one of these models, and developers are exploring all kinds of pre-built and proprietary libraries.

A wide community of users exists for support, bug-fix assistance, and use cases on platforms like the Detectron2 GitHub repository and Stack Overflow. Keep an eye on this type of intelligent model as the community continues to explore its potential.

Detectron2 and similar computer vision programs also complement advances in their generative AI cousins, such as DALL-E and ChatGPT (both from AI giant OpenAI). Unlike these more prominent applications, Detectron2 helps machines comprehend and recognize what they “see.” However, just as the generative AI phenomenon has hit its stride in the mainstream, we can expect the computer vision world to heat up as well.

To learn more about computer vision and more AI tools and frameworks from Meta, check out our other articles:

- The complete guide to Meta’s Segment Anything Model (SAM)

- Understanding Semantic vs Instance Segmentation

- The most popular object detection algorithms and AI software every ML engineer should know

- Llama 2: an open-source large language model from Meta