Over the last decade, computer vision has evolved into a key technology for numerous applications that replace human supervision and monitoring. This article provides a research overview of state-of-the-art computer vision in video surveillance and AI security monitoring.

We will discuss the following topics:

- State-of-the-art AI Video Security Technology

- Anomaly Detection and Response with Computer Vision

- AI Vision Applications in Security and Surveillance

- Starting with Computer Vision – Software Solution

About us: Viso Suite is the world’s only end-to-end Computer Vision Platform. The solution helps global organizations to develop, deploy, and scale all computer vision applications in one place. Get a demo for your organization.

State of the Art in AI Video Surveillance

Computer vision uses a combination of technologies to analyze and understand video data with computers. In surveillance and security industry applications, the primary goal of computer vision is to automate human supervision. The ability to capture and digitize real-life scenes provides new opportunities to detect threats better and earlier, quantify risk, and provide real-time security assessments.

The list of computer vision applications is expanding rapidly, driven by new Machine Learning, Edge Computing, Artificial Intelligence (AI), and IoT technologies that make AI-powered vision much more powerful, flexible, and scalable.

Edge AI for Computer Vision in Surveillance

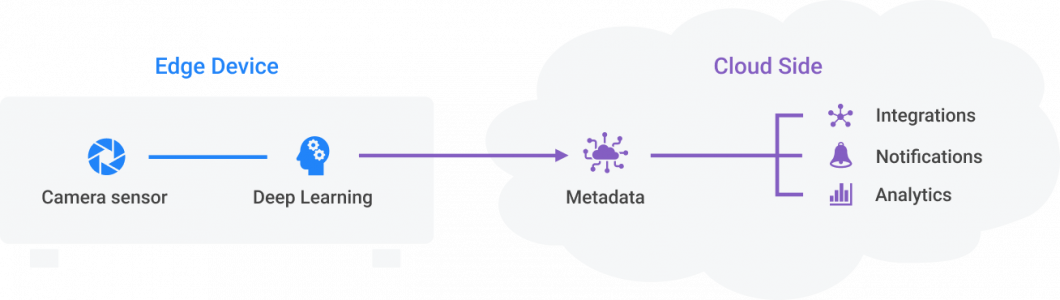

Applying computer vision has only recently become possible through advances in deep learning and edge computing. Deep learning is a subfield of machine learning that enables machines to learn patterns from training data and apply the resulting models to new data.

Edge Computing is the systematic process of moving computing tasks from the cloud to the network edge, near the data source (the camera). As a result, edge computing mitigates the challenges of connected cameras and devices, such as network congestion, dependence on constant connectivity, latency, robustness, privacy, and data management.

Modern computer vision systems use edge computing to process video without sending video data to the cloud or another storage unit. The combination of on-device machine learning and edge computing is also called Edge AI or Edge Intelligence. In surveillance and security applications of computer vision, those emerging technologies play an important role in enabling real-life AI applications. Also, Edge AI vision infrastructure allows significant cost reductions in large-scale and real-time computer vision systems.

Intelligent Surveillance Cameras

With the widespread use of security cameras in public places, AI video analytics and scene understanding with computer vision have become essential features of surveillance systems. Visual data from camera streams contain rich information compared to other sources such as mobile location, GPS, radar signals, etc. Large-scale video analytics systems can collect statistical information about the status of road traffic, public places, buildings, or private areas.

Modern AI vision software can analyze video feeds from virtually any network camera. Depending on its hardware configuration, a single edge device can process the video feeds of multiple cameras. Powerful edge servers can analyze dozens to hundreds of cameras.

Some IP camera manufacturers or turnkey point solutions provide on-camera intelligence with an integration of the computing processor directly into the camera. However, enterprise systems usually separate AI computation from the camera – for several reasons.

First, businesses need to stay vendor-independent and maintain the ability to negotiate. Then, companies must avoid technology lock-in and ensure extensibility and integration with their systems. Also, cameras with integrated AI processing don’t allow scaling up hardware resources if a company needs to extend features or increase AI performance.

In addition, most businesses operate video systems with cameras from various brands, generations, and types (Sony, Panasonic, Axis, Hikvision, Dahua, Samsung, and so on). Replacing all cameras is too expensive, and standardizing would lead to lock-in costs. Also, most camera products are replaced by a new model every two years.

AI Video Surveillance Systems

In traditional video surveillance, systems depend entirely on human operators and their individual judgment and attentiveness. Intelligent AI analysis supports human operators with fast, objective, and consistent information. Depending on the use case, the AI vision software performs detection and prediction tasks for traffic congestion, security threats, accidents, and other anomalies.

A typical computer vision system integrates multiple software capabilities, from data input acquisition to image preprocessing, deep learning inference, output aggregation, communication, and visualization. Such a computer vision system can run one or multiple applications that address specific problems (anomaly detection, pose estimation, object detection, etc.).

Computer Vision Applications vs. AI Models

An AI model is not a computer vision application; the terms are often used interchangeably, albeit incorrectly. A computer vision application contains a computer vision pipeline (or flow) with one or multiple AI models.

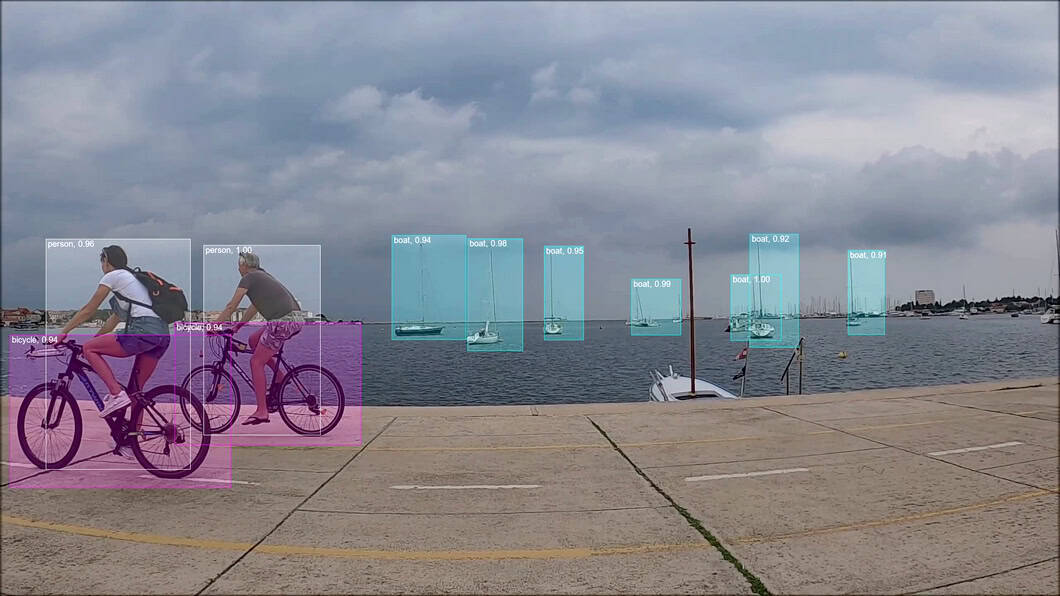

The AI model requires upstream functions to fetch and preprocess image data before feeding it into the model. Only then can an AI model perform an algorithmic task to transform video frames into specific metadata (e.g., classes of “person” with confidence). The raw model output requires interpretation and aggregation with logic to be useful for solving a business or security problem.
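
The difference between a model and an application can be sketched in a few lines of Python. This is a minimal illustration, not a production design: `fake_model`, the brightness-based "detection", and the thresholds `min_conf` and `min_frames` are hypothetical stand-ins for a real detector and real business rules.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Detection:
    label: str         # class name reported by the model, e.g. "person"
    confidence: float  # model confidence in [0, 1]


# A "model" is any callable that maps one preprocessed frame to detections.
Model = Callable[[list], List[Detection]]


def preprocess(frame: list) -> list:
    """Stand-in preprocessing: scale 8-bit pixel values to [0, 1]."""
    return [[px / 255.0 for px in row] for row in frame]


def person_alerts(frames: Iterable[list], model: Model,
                  min_conf: float = 0.5, min_frames: int = 3) -> List[int]:
    """Application logic: return the frame indices at which a person has
    been detected in `min_frames` consecutive frames."""
    alerts, streak = [], 0
    for i, frame in enumerate(frames):
        detections = model(preprocess(frame))
        seen = any(d.label == "person" and d.confidence >= min_conf
                   for d in detections)
        streak = streak + 1 if seen else 0
        if streak == min_frames:  # fire once per uninterrupted streak
            alerts.append(i)
    return alerts


def fake_model(frame: list) -> List[Detection]:
    """Hypothetical model: 'confidence' is simply the mean brightness."""
    mean = sum(map(sum, frame)) / (len(frame) * len(frame[0]))
    return [Detection("person", mean)]


# Six tiny 1x1 "frames": dark, four bright ones, dark again.
frames = [[[0]], [[200]], [[210]], [[220]], [[230]], [[10]]]
print(person_alerts(frames, fake_model))  # [3]
```

The model only produces per-frame metadata. The decision to alert after three consecutive detections lives in the surrounding pipeline, which is what turns a model into an application.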

Anomaly Detection in AI Vision

What is Anomaly Detection?

In computer vision, anomaly detection is a sub-field of behavior understanding from surveillance scenes. Anomalies are typically aberrations of scene entities (people, vehicles, environment) and their interactions from normal behavior. Anomaly detection methods learn the normal behavior via training. Anomaly detection typically uses supervised or unsupervised learning, or a combination of the two (semi-supervised learning).
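
As a rough illustration of "learning normal behavior," the sketch below fits a mean and standard deviation to a single feature and flags strong deviations. Real systems learn far richer models. The feature (walking speed), the sample values, and the `z_threshold` of 3 are assumptions chosen for the example.

```python
from statistics import mean, stdev


def fit_normal(values):
    """Learn a very simple model of normal behavior: mean and std deviation."""
    return mean(values), stdev(values)


def is_anomalous(value, model, z_threshold=3.0):
    """Flag values more than `z_threshold` standard deviations from the mean."""
    mu, sigma = model
    return abs(value - mu) > z_threshold * sigma


# Hypothetical walking speeds (m/s) observed on a walkway during normal use.
normal_speeds = [1.2, 1.4, 1.3, 1.5, 1.1, 1.3, 1.4, 1.2]
model = fit_normal(normal_speeds)

print(is_anomalous(1.35, model))  # False: a typical pedestrian
print(is_anomalous(6.0, model))   # True: e.g., a vehicle on the walkway
```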

Use Cases of Anomaly Detection

Anything deviating significantly from normal behavior can be considered “anomalous.” Examples include vehicle presence on walkways, the sudden dispersal of people within a gathering, a person falling while walking, jaywalking, signal bypassing in traffic, or U-turns of vehicles at red signals.

Anomaly Detection Solution

An anomaly detection system leverages data acquisition, feature extraction, scene or activity learning, and behavioral understanding. Anomaly detection systems are architected towards specific use cases, optimized for particular deployment environments and camera positioning.

Systems for anomaly event detection are nontrivial and require a set of techniques that span multiple research fields. In general, such systems process video data to perform scene analysis using video processing techniques, vehicle and person detection and tracking, multi-camera-based techniques, intelligent event detection, and more.

Anomaly Types

In AI vision, anomalies fall into three types: point anomalies, contextual anomalies, and collective anomalies. A point anomaly is, for example, a non-moving car on a busy road or in a tunnel. Contextual anomalies are behaviors that would be normal in a different context.

For example, a vehicle that moves faster than the others in slow-moving traffic is anomalous, even though the same speed would be normal in less dense traffic. Collective anomalies occur when a group of instances together forms an anomaly even though each instance may be normal individually, for example, a group of people dispersing within a short period.
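
The contextual case can be made concrete by keeping one model of normal speed per traffic state. This is only a sketch: the context labels `"dense"` and `"free"`, the sample speeds, and the threshold are hypothetical.

```python
from statistics import mean, stdev


def fit_by_context(samples):
    """samples: iterable of (context, speed). Returns {context: (mean, stdev)}."""
    grouped = {}
    for context, speed in samples:
        grouped.setdefault(context, []).append(speed)
    return {c: (mean(v), stdev(v)) for c, v in grouped.items()}


def is_contextual_anomaly(context, speed, models, z_threshold=3.0):
    """Compare the speed against what is normal for this context only."""
    mu, sigma = models[context]
    return abs(speed - mu) > z_threshold * sigma


# Hypothetical vehicle speeds in km/h, labeled with the traffic state.
history = [("dense", 18), ("dense", 22), ("dense", 20), ("dense", 19), ("dense", 21),
           ("free", 75), ("free", 85), ("free", 80), ("free", 78), ("free", 82)]
models = fit_by_context(history)

print(is_contextual_anomaly("dense", 80, models))  # True: too fast for dense traffic
print(is_contextual_anomaly("free", 80, models))   # False: normal on a free road
```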

Scope and Class of Anomalies

In the context of visual surveillance, it is common to see anomalies classified as global and local anomalies. Global anomalies can be present in a frame or segment of the video without specifying exactly where an event occurred (no localization).

Local anomalies usually happen within a specific area of the scene but may be missed by global anomaly detection algorithms. Some methods can detect both global and local anomalies.
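
To show why a local anomaly can slip past a global detector, assume each frame is divided into a grid and some upstream model (not shown) has already assigned an anomaly score to every cell. The grid, the scores, and the threshold below are made up for illustration.

```python
def global_flag(cell_scores, threshold):
    """Frame-level decision from the average score; gives no location."""
    flat = [s for row in cell_scores for s in row]
    return sum(flat) / len(flat) > threshold


def local_cells(cell_scores, threshold):
    """Cell-level decision: (row, col) of every cell above the threshold."""
    return [(r, c)
            for r, row in enumerate(cell_scores)
            for c, score in enumerate(row)
            if score > threshold]


# Hypothetical 3x3 grid of anomaly scores with one suspicious cell.
scores = [[0.1, 0.1, 0.1],
          [0.1, 0.1, 0.9],
          [0.1, 0.1, 0.1]]

print(global_flag(scores, threshold=0.5))  # False: the frame average stays low
print(local_cells(scores, threshold=0.5))  # [(1, 2)]
```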

Anomaly Detection in Security Monitoring

AI video analysis is used for anomaly detection in traffic, subways, campuses, trains, boats, buildings, and public places. Examples of CCTV monitoring for anomaly detection include stopped vehicle detection, panic detection, intrusion detection, and abnormal pedestrian activity recognition.

Applications of Video Surveillance and Security

Intelligent video surveillance includes a wide range of applications and use cases for anomaly detection, object detection and tracking, movement analysis technologies, monitoring systems, prevention, identification, and warning systems. Cooperative video surveillance enables large-scale AI vision systems that integrate numerous cameras at remote locations.

Person Detection

People detection systems use object detection algorithms (for example, the popular YOLOv7) to localize people in video feeds. Automated single-person and multiple-person detection is a key feature of intelligent video surveillance systems.

Human detection also includes crowd analysis to estimate scene density and evaluate moving object interaction in crowded and uncrowded scenes (for example, at mega events).
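
Once a detector has produced its output, counting people and estimating scene density is simple post-processing. The sketch below assumes detections arrive as `(label, confidence, box)` tuples and that the monitored ground area in square meters is known. Both are assumptions, not the output format of any specific detector.

```python
def count_people(detections, min_conf=0.5):
    """Count detections labeled 'person' at or above the confidence threshold."""
    return sum(1 for label, conf, _box in detections
               if label == "person" and conf >= min_conf)


def crowd_density(detections, area_m2, min_conf=0.5):
    """People per square meter for a monitored area of known size."""
    return count_people(detections, min_conf) / area_m2


# Hypothetical detector output for one frame: (label, confidence, box).
detections = [
    ("person", 0.90, (10, 20, 50, 120)),
    ("person", 0.40, (60, 25, 95, 118)),   # below threshold, ignored
    ("car",    0.80, (200, 80, 380, 190)),
    ("person", 0.70, (120, 30, 160, 125)),
]

print(count_people(detections))          # 2
print(crowd_density(detections, 50.0))   # 0.04
```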

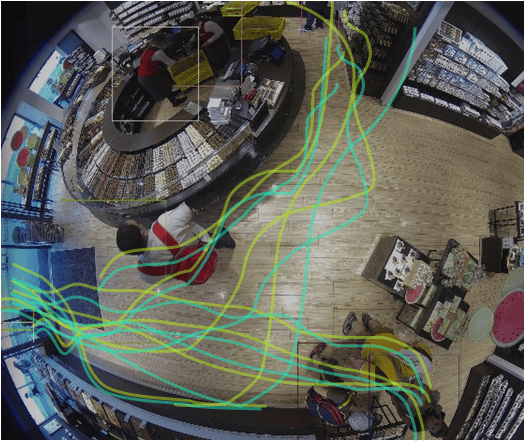

People Movement Analysis

Path learning combines human detection with path modeling techniques and clustering to perform person movement analysis. For example, in Smart City applications, movement analysis is used to perform motion prediction and analyze vehicle behavior, pedestrian behavior, acceleration, movement speed, and trajectories.
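
Speed and acceleration follow directly from a tracked trajectory. As a minimal sketch, assume a tracker (not shown) delivers timestamped positions that are already converted to meters on the ground plane. The track values are invented.

```python
from math import hypot


def speeds(track):
    """track: list of (t_seconds, x_m, y_m). Returns speed in m/s per step."""
    return [hypot(x1 - x0, y1 - y0) / (t1 - t0)
            for (t0, x0, y0), (t1, x1, y1) in zip(track, track[1:])]


def accelerations(track):
    """Change in speed between consecutive steps, in m/s^2."""
    v = speeds(track)
    # Each speed value is attributed to the end time of its step.
    times = [t for t, _, _ in track[1:]]
    return [(v1 - v0) / (t1 - t0)
            for v0, v1, t0, t1 in zip(v, v[1:], times, times[1:])]


# Hypothetical track of one object, sampled once per second.
track = [(0, 0.0, 0.0), (1, 3.0, 4.0), (2, 9.0, 12.0)]

print(speeds(track))         # [5.0, 10.0]
print(accelerations(track))  # [5.0]
```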

Person Recognition and Biometric Security

Modern security systems for video surveillance use facial recognition technology for automated person identification. On a high level, such biometric security technologies perform a sequence of tasks, also called an AI vision pipeline, to (1) detect people, (2) crop the face area, and (3) apply image classification to compare the face with the images in a database.
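
Step (3) can be illustrated with a simplified matching routine. Note the substitution: instead of comparing images directly, the sketch compares feature vectors by cosine similarity, which is one common way to implement such a lookup. The three-dimensional vectors, the gallery entries, and the `min_similarity` threshold are hypothetical; the network that would produce the vectors is not shown.

```python
from math import sqrt


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b)))


def identify(face_vec, gallery, min_similarity=0.8):
    """Return the best-matching identity, or None if nothing is similar enough."""
    best_name, best_score = None, min_similarity
    for name, reference in gallery.items():
        score = cosine(face_vec, reference)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Hypothetical enrolled identities with toy feature vectors.
gallery = {"alice": [1.0, 0.0, 0.0], "bob": [0.0, 1.0, 0.0]}

print(identify([0.9, 0.1, 0.0], gallery))  # alice
print(identify([0.5, 0.5, 0.7], gallery))  # None: no confident match
```

Returning `None` below the threshold matters in practice: an unknown person should stay unknown rather than be forced onto the closest database entry.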

To comply with legal requirements, face recognition algorithms require sophisticated privacy and security infrastructure. In general, facial recognition technology is sensitive because it could be used to identify people in videos and pictures without their knowledge and permission.

Note: Our technology, the Viso Suite platform, provides industry-leading privacy and security capabilities for real-world computer vision. The computer vision platform enables organizations worldwide to meet the strictest privacy requirements in the most challenging environments.

Behavioral Biometrics in Computer Vision

An alternative method of human recognition is behavioral biometrics. Computer vision for behavioral biometrics is a modern research field that seeks to identify people using their habitual physical and mental traits. It has become a novel form of identity authentication as it can accurately distinguish individuals from one another without requiring any physical contact.

By analyzing subtle, individual characteristics such as facial expressions, hand gestures, body movements, and gait (gait recognition is also called gait biometrics), this technology can detect and identify individuals. Applications include security and access control, personal recognition systems, customer service, fraud detection, deepfake detection, and general surveillance.

Human Behavior Understanding

Combining person detection, classification, and person tracking enables human behavior understanding in video-based surveillance applications. Classification models can learn specific behavior patterns to recognize specific human actions.

This enables aggression detection, brawl detection, robbery or theft detection, and more. Applications to analyze human behavior also include trajectory clustering using different machine learning and imaging technologies such as multi-camera detection.

Illegal Activity Detection

Human action recognition and motion pattern detection can detect suspicious activities, events, or behaviors. Popular techniques include pose estimation, 3D sensing, learning, and classification to detect violations of guidelines or the law. Illegal activities can include littering, loitering, begging, and more.

Driver and Traffic Safety Applications

Stationary or vehicle-mounted camera systems can perform different types of anomaly detection. Applications include lane departure warning, pedestrian detection, and adaptive warning systems. In-vehicle safety and alarm systems include driver monitoring, for example, seatbelt detection or gaze recognition to analyze tiredness and fatigue.

AI Security Systems

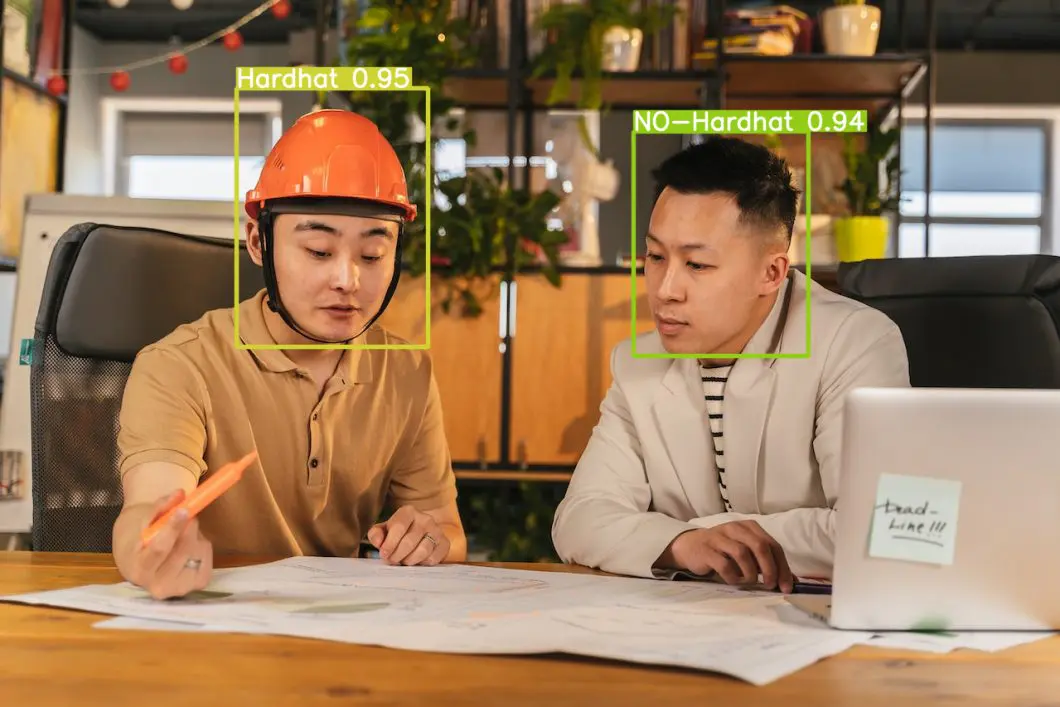

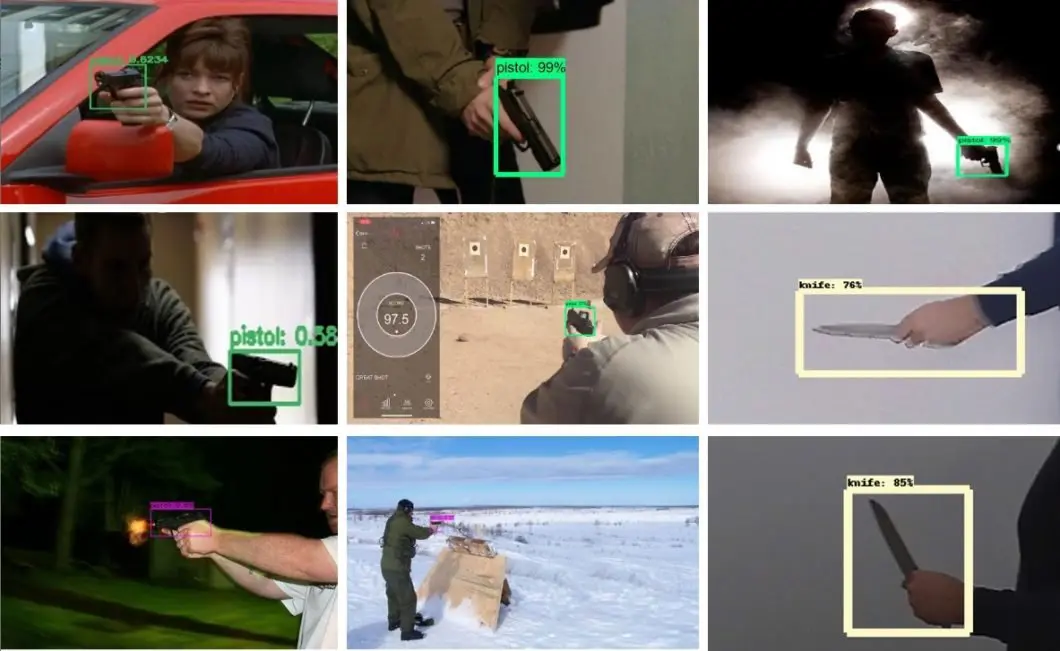

Dangerous Objects and Weapon Detection

Real-time object detection uses deep learning to detect and localize specific objects in video scenes. Common object recognition applications in security include weapon detection (firearms or knives) or protective equipment detection.

As with many computer vision applications, object detection in real-life settings is very challenging to implement; we will discuss the reasons later in this article.

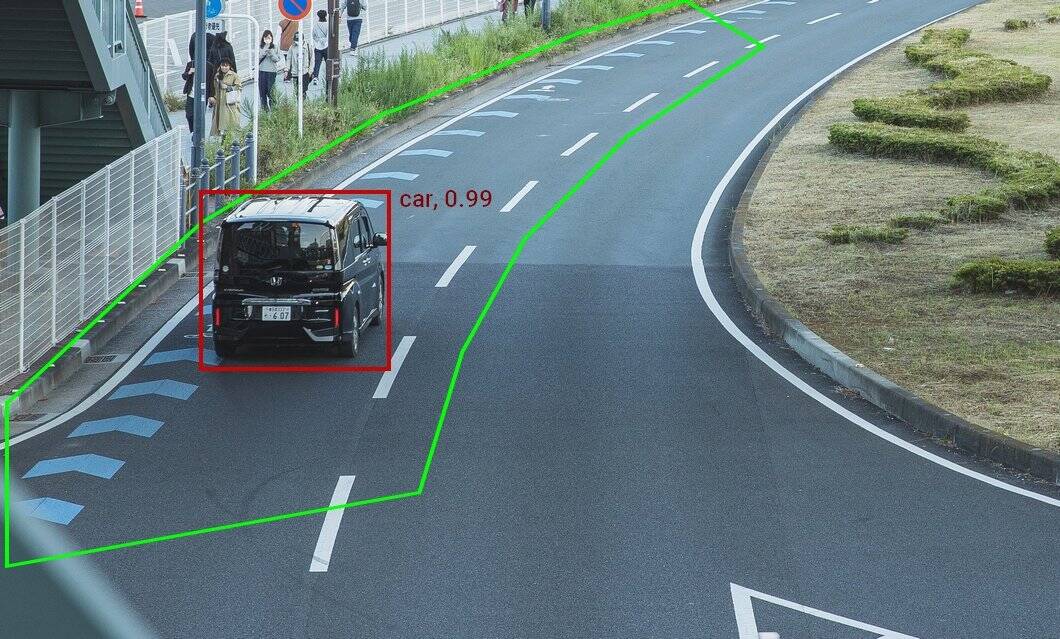

Intrusion Detection and Virtual Fencing

Virtual fencing of sensitive locations is a popular feature of AI vision surveillance systems. Specific regions of interest mark virtual fences to detect intrusion events, prompting the system to send alerts to security teams.
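
A virtual fence boils down to a point-in-polygon test on each detected person. The sketch below uses the classic ray-casting test and takes the bottom center of the bounding box as the person's position on the ground. The fence coordinates and boxes are hypothetical pixel values.

```python
def inside(point, polygon):
    """Ray-casting test: is `point` inside `polygon` (list of (x, y) vertices)?"""
    x, y = point
    hit = False
    for (x1, y1), (x2, y2) in zip(polygon, polygon[1:] + polygon[:1]):
        if (y1 > y) != (y2 > y):  # edge crosses the horizontal line through y
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                hit = not hit
    return hit


def foot_point(box):
    """Bottom center of a bounding box given as (x_min, y_min, x_max, y_max)."""
    x_min, _, x_max, y_max = box
    return ((x_min + x_max) / 2, y_max)


def intrusions(person_boxes, fence):
    """Return the boxes of all people standing inside the fenced region."""
    return [box for box in person_boxes if inside(foot_point(box), fence)]


# Hypothetical region of interest and two detected people.
fence = [(100, 100), (300, 100), (300, 250), (100, 250)]
people = [(150, 80, 190, 200), (320, 90, 360, 210)]

print(intrusions(people, fence))  # [(150, 80, 190, 200)]
```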

Automated Video Summarization

Video summarization is a process that allows people to quickly gain an understanding of the content and key messages within a video without needing to watch it in its entirety. For example, in shopping malls, fitness centers, or transportation hubs, operators spend a significant amount of time watching live and recorded video streams.

AI vision algorithms are used to perform video summarization, synopsis generation, and content-based video retrieval. In video surveillance, the historical data output of the deep learning models can be used to identify specific events and find related video material.
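
One simple way to use the historical model output for retrieval is to turn event timestamps into a short list of clips. The padding and merge gap below are arbitrary example values, and the event times stand in for whatever a detection model logged.

```python
def events_to_clips(event_times, padding_s=5.0, merge_gap_s=10.0):
    """Turn event timestamps (seconds) into padded, merged (start, end) clips."""
    clips = []
    for t in sorted(event_times):
        start, end = max(0.0, t - padding_s), t + padding_s
        if clips and start - clips[-1][1] <= merge_gap_s:
            # Close enough to the previous clip: extend it instead.
            clips[-1] = (clips[-1][0], max(clips[-1][1], end))
        else:
            clips.append((start, end))
    return clips


# Hypothetical event log: seconds since the start of the recording.
events = [12, 14, 20, 300, 303, 900]

print(events_to_clips(events))
# [(7.0, 25.0), (295.0, 308.0), (895.0, 905.0)]
```

In this example, an operator would review about 40 seconds of material instead of a 15-minute recording.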

Infrastructure Security

Accident and Traffic Incident Detection

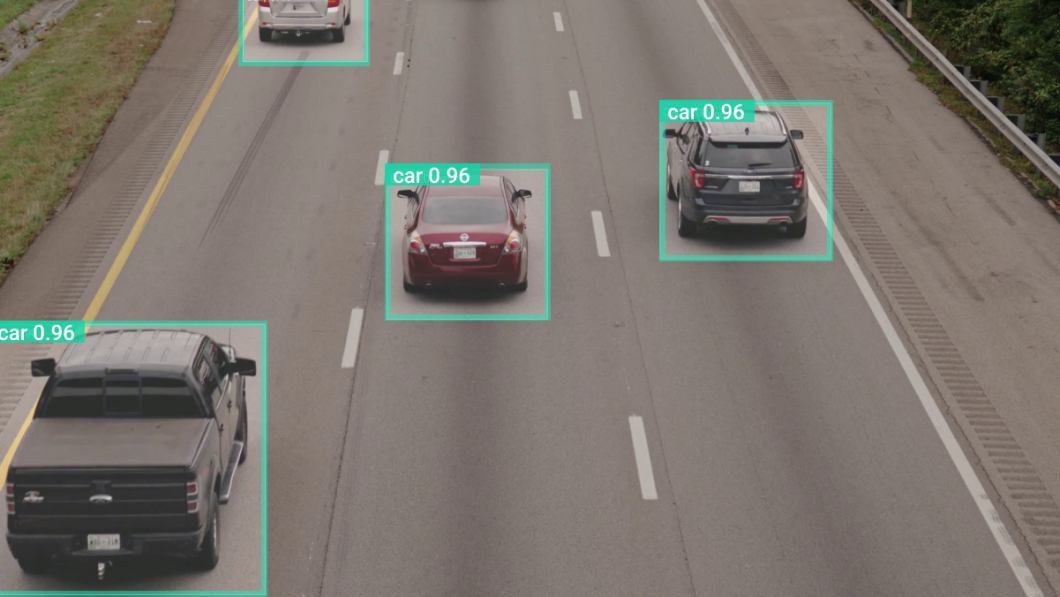

In traffic management and surveillance, vehicle detection and tracking algorithms are used to identify specific incidents and events. Such systems are popular in smart city applications, where they are also used for traffic parameter collection, vehicle counting, video-based tolling, traffic flow analysis, and behavior understanding. Other use cases include accident detection, highway vehicle detection, and vehicle classification (profiling).

Common methods apply foreground segmentation or background subtraction in combination with convolutional neural networks (CNN) for deep learning tasks.
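
Background subtraction itself can be sketched without any libraries. The version below keeps an exponential running average as the background model and marks pixels that differ strongly from it. The update rate `alpha`, the threshold, and the tiny grayscale "frames" are assumptions. In a real system, the foreground regions would then be passed to a CNN, which is not shown here.

```python
def update_background(background, frame, alpha=0.05):
    """Exponential running average over grayscale frames (lists of rows)."""
    return [[(1 - alpha) * b + alpha * f for b, f in zip(b_row, f_row)]
            for b_row, f_row in zip(background, frame)]


def foreground_mask(background, frame, threshold=30):
    """True where a pixel differs from the background by more than threshold."""
    return [[abs(f - b) > threshold for b, f in zip(b_row, f_row)]
            for b_row, f_row in zip(background, frame)]


# Hypothetical 2x3 scene: a uniform road surface, then one bright object pixel.
background = [[100.0, 100.0, 100.0],
              [100.0, 100.0, 100.0]]
frame = [[100, 200, 100],
         [100, 100, 100]]

print(foreground_mask(background, frame))
# [[False, True, False], [False, False, False]]

# Slowly adapt the model so gradual lighting changes become background.
background = update_background(background, frame)
```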

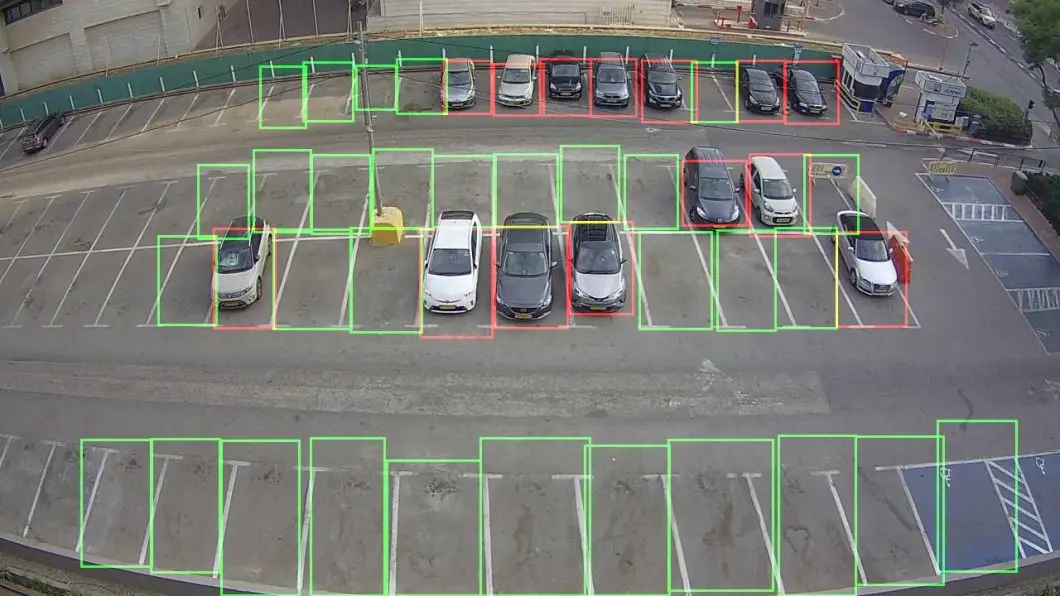

Smart Parking and Vehicle Surveillance

Vehicle or moving object detection and tracking are used in combination with license plate recognition. Image classification algorithms can determine the vehicle model, type, and color, or recognize its logo. CCTV camera-based vehicle surveillance is popular in smart parking lot analytics to identify and track the occupancy of multiple parking spaces with computer vision.
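
Parking occupancy can be derived by checking how much of each marked space is covered by a detected vehicle. This is a minimal sketch under simple assumptions: spaces and vehicles are axis-aligned boxes `(x_min, y_min, x_max, y_max)` in image coordinates, and the space IDs and the `min_cover` ratio are made up.

```python
def intersection_area(a, b):
    """Overlap area of two boxes given as (x_min, y_min, x_max, y_max)."""
    width = min(a[2], b[2]) - max(a[0], b[0])
    height = min(a[3], b[3]) - max(a[1], b[1])
    return max(0, width) * max(0, height)


def occupancy(spaces, vehicle_boxes, min_cover=0.5):
    """spaces: {space_id: box}. A space counts as occupied if any vehicle
    covers at least `min_cover` of its area."""
    status = {}
    for space_id, space in spaces.items():
        area = (space[2] - space[0]) * (space[3] - space[1])
        status[space_id] = any(
            intersection_area(space, vehicle) / area >= min_cover
            for vehicle in vehicle_boxes
        )
    return status


# Hypothetical layout with two spaces and one detected vehicle.
spaces = {"A1": (0, 0, 100, 200), "A2": (100, 0, 200, 200)}
vehicles = [(10, 20, 95, 190)]

print(occupancy(spaces, vehicles))  # {'A1': True, 'A2': False}
```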

Unattended Object Detection

Abandoned object detection is a critical part of securing locations and properties and of safety monitoring. It uses automated systems to detect items left behind in public or private areas without human intervention.

A deep learning model can be trained to detect items like bags, boxes, packages, and other objects that could pose potential security threats. When such an object is detected, an alarm or other alert can be sent to a monitoring center or security personnel, who can then assess the potential threat before it becomes a real problem.
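
Beyond detecting the object itself, the alerting rule usually needs a notion of "unattended for too long." The sketch below is one hypothetical rule: an object triggers an alert when no person has been within `owner_radius` meters of it for `max_unattended_s` seconds. The observation format, object IDs, and both parameters are assumptions.

```python
from math import hypot


def abandoned_objects(observations, max_unattended_s=60.0, owner_radius=2.0):
    """observations: time-ordered list of
    (t_seconds, object_id, (x, y), [person positions]).
    Returns IDs of objects left unattended for at least the time limit."""
    unattended_since = {}
    alerts = []
    for t, object_id, (ox, oy), people in observations:
        attended = any(hypot(px - ox, py - oy) <= owner_radius
                       for px, py in people)
        if attended:
            unattended_since.pop(object_id, None)  # reset the timer
        else:
            start = unattended_since.setdefault(object_id, t)
            if t - start >= max_unattended_s and object_id not in alerts:
                alerts.append(object_id)
    return alerts


# Hypothetical observations of one bag; positions in meters.
observations = [
    (0,  "bag1", (5, 5), [(5.5, 5)]),   # owner standing next to the bag
    (30, "bag1", (5, 5), [(20, 20)]),   # owner has walked away
    (60, "bag1", (5, 5), []),
    (95, "bag1", (5, 5), [(30, 1)]),    # 65 s unattended: alert
]

print(abandoned_objects(observations))  # ['bag1']
```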

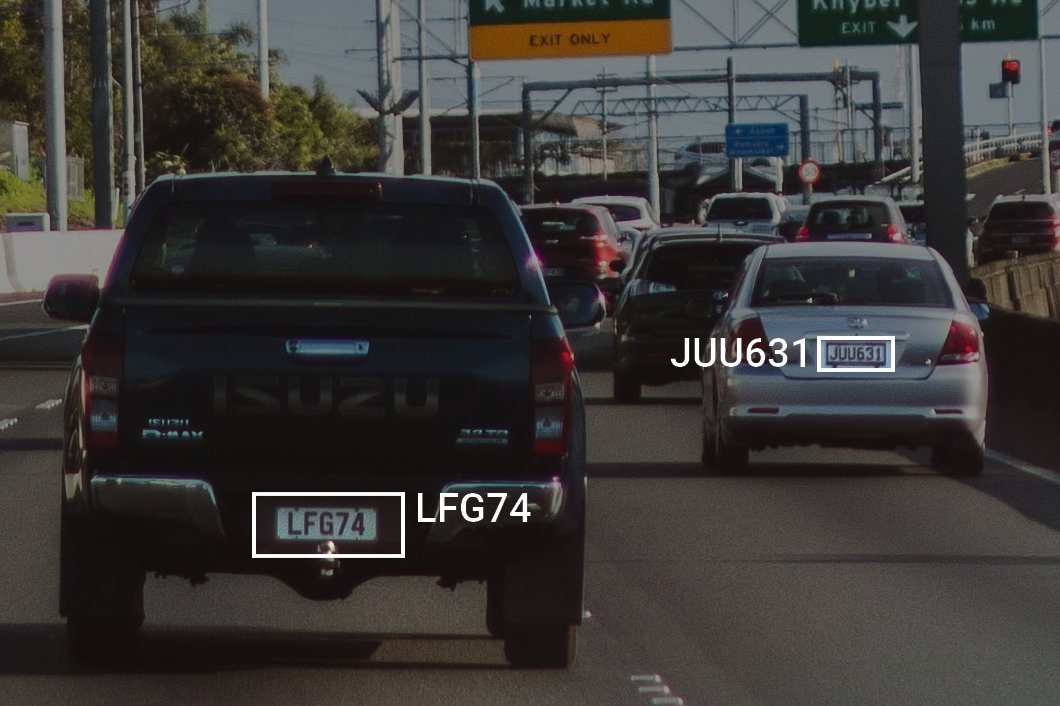

Vehicle Identification and Numberplate Recognition

Vision-based vehicle identification uses automatic number plate recognition (ANPR) and vehicle feature detection (color, type) to identify and count individual vehicles using cameras. ANPR is also called LPR (License Plate Recognition).

Vehicle identification software first detects the vehicle with object detection, locates the license plate, and finally reads the number plate using optical character recognition (OCR).

Safety Assessment

AI camera systems can implement vision-based gap analysis, threat and risk assessment, and conflict and accident detection. Deep learning models perform recognition to digitize real-world situations and gather data to model and predict threat situations.

The ability to digitize the visual world and translate it into metadata can help provide high-level security assessments, which are also important for insurance applications. For example, we can create dynamic reports that provide information about vehicle or person movements, interactions, or terrain information.

Infrastructure Security

Visual surveillance uses computer vision for roadside warning systems and decision support in the security monitoring of public places, critical infrastructure, and transportation infrastructure. Modern AI vision systems integrate with almost all CCTV monitoring and VMS systems. For example, our computer vision platform Viso Suite can acquire and process the video streams of existing cameras. This can be done across different camera models and vendors.

Emergency Management With Computer Vision

AI vision systems can perform emergency classification of natural events including storm detection, flooding detection, or smoke and fire detection. In addition, “abrupt event detection” can identify anomalies across different locations and cameras in large-scale settings. When the system detects an emergency, it can send an alert directly to law enforcement, prompting an appropriate emergency response.

Surveillance and security AI systems can also detect human-made emergencies such as road accidents, dangerous crowds, weapon threats, drowning persons, and injured or falling persons.

Challenges of AI Vision

Video-based anomaly detection in camera security systems is very challenging. Several factors make the real-life applications of computer vision very difficult to implement and scale:

- Lack of real-life data: There is a huge need for real-life data collection to train effective algorithms and build computer vision applications that perform well in real-world settings.

- Illumination: Managing variations of illumination is difficult because trained features are hard to extract from the videos.

- Pose and perspective: The camera angles that determine the surveillance area have a substantial impact on the performance of deep learning algorithms. This is because the appearance of objects or people may change depending on their distance from the camera.

- Heterogeneous objects: Learning the movement of heterogeneous objects and entities in a scene can be difficult at times. The variability of appearance considerably lowers the application performance.

- Sparse vs. Dense: The methods used for detecting anomalies in sparse and dense conditions are different. Some methods are suitable for event recognition in sparse environments but can generate many false negatives in dense scenes, for example, with large crowds.

- Occlusion: Detection and tracking under occlusion with partially or fully hidden instances (people or objects) is very challenging, though this task is comparatively easy for humans.

Starting With Computer Vision

Computer vision is a nontrivial technology, and applications are almost always unique and require a high level of integration and configuration. Increasingly, organizations are looking for ways to consolidate their computer vision systems and to overcome the sprawl of isolated point solutions, different hardware, and individual tools.

Our computer vision platform Viso Suite is the most powerful, end-to-end computer vision platform. Leading organizations worldwide use Viso technology to deliver their computer vision applications on one unified, automated infrastructure. To learn more about the solutions Viso Suite can provide for your project, book a demo with our team.