This guide shows how to develop a computer vision traffic analytics application without coding, using the enterprise AI vision platform Viso Suite. The visual programming approach helps teams to dramatically accelerate computer vision development with cross-platform deployments to the edge.

Viso ensures future-proof compatibility with any camera, industry-leading AI models, and processing on next-gen AI hardware. The platform integrates with the latest Intel technologies to process on CPUs (Intel Core i5, i7, Xeon), on the Myriad X VPU used in deep learning accelerators such as the Intel NCS 2, or on the new Intel Xe GPU, which offers efficient machine learning inference at the edge.

Video Tutorial: Build the Application with Viso Suite

Prerequisites: A Viso Workspace that provides end-to-end computer vision tools

1. Application Concept Planning

Sign in with your personal Viso account and access the Viso Workspace. Navigate to “Library > Applications” to see the list of current applications. In “Modules,” you see the building blocks that are currently installed in your workspace and available in the Viso Builder to create applications with. Viso Suite is an open platform; you can later add your custom modules and Docker containers to use within Viso Suite.

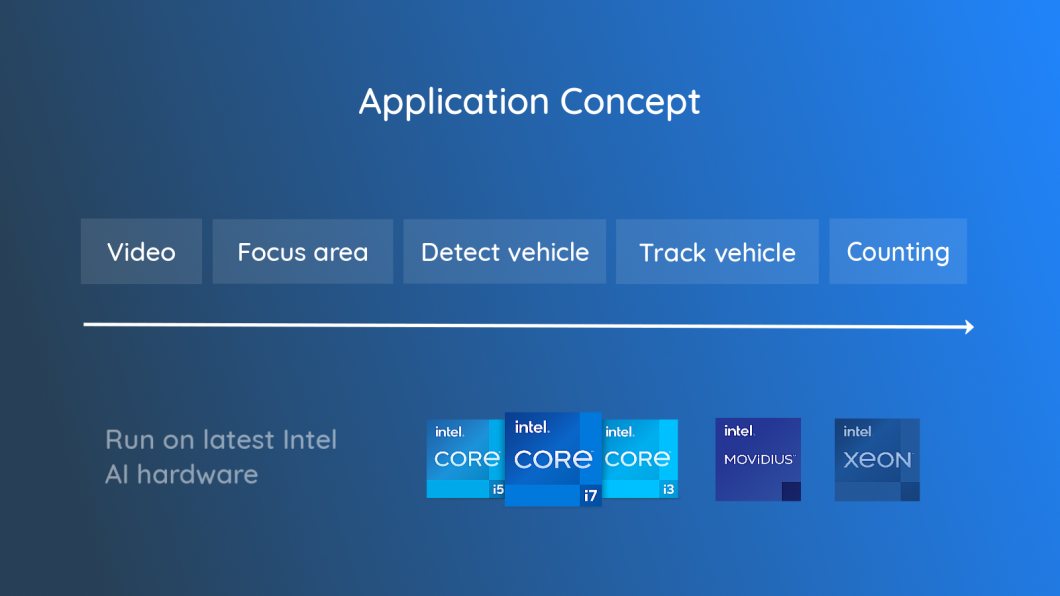

To build the traffic analytics deep learning application, we start with the computer vision system concept. Viso Suite can run on any physical edge device and hardware. In this tutorial, we run on Intel's latest AI edge hardware, which offers excellent performance per cost, the most important metric for large-scale deep learning systems.

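The application pipeline chains the video input, region of interest, vehicle detection, vehicle tracking, counting logic, and video output modules.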

2. Combine Application Modules

We combine modules to build the computer vision pipeline of the vehicle counting application. This will save a lot of time, and it will be easier to upgrade and modify the application later. For our initial version, we need the following modules:

Video Input

The Video Input module provides the video frames that are fed to the ML models. Viso lets you start with a video file to simulate the real-time input of a video camera (USB, IP, CCTV) with traffic data sources. You can later easily switch from a file to one or multiple cameras.

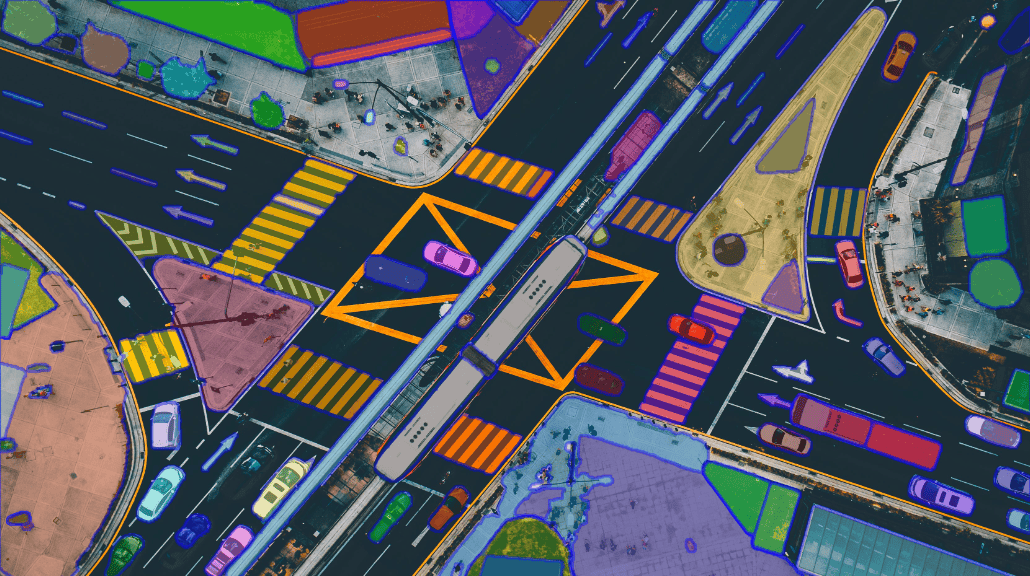
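Viso handles the input source through configuration, without code. For readers curious how the same file-or-camera switch looks in plain code, here is a minimal sketch assuming the `opencv-python` package; `resolve_video_source` and `read_frames` are hypothetical helper names, not part of Viso Suite. It relies on the fact that `cv2.VideoCapture` accepts a device index (USB camera), a file path, or a stream URL (commonly RTSP for IP cameras).

```python
from typing import Iterator, Union


def resolve_video_source(spec: str) -> Union[int, str]:
    """Map a source string to the argument cv2.VideoCapture expects.

    A bare digit string such as "0" is treated as a local USB camera index;
    anything else (a file path or an rtsp:// / http:// URL) is passed through.
    """
    spec = spec.strip()
    if spec.isdigit():
        return int(spec)
    return spec


def read_frames(spec: str, max_frames: int = 100) -> Iterator["object"]:
    """Yield up to max_frames frames from a file, camera, or stream."""
    import cv2  # imported lazily so resolve_video_source works without OpenCV

    cap = cv2.VideoCapture(resolve_video_source(spec))
    try:
        for _ in range(max_frames):
            ok, frame = cap.read()
            if not ok:  # end of file or lost stream
                break
            yield frame
    finally:
        cap.release()
```

Switching from the simulated file to a live camera then only changes the source string (for example from `"traffic.mp4"` to `"0"` or an RTSP URL), not the rest of the pipeline.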

Region of Interest (RoI)

Focusing image processing on a specific region within the video frame is a typical image processing problem. We use the “Region of Interest” module to draw a focus area. The module also provides functionality to add a counting line, which indicates where and in which direction the detected vehicles should be counted.

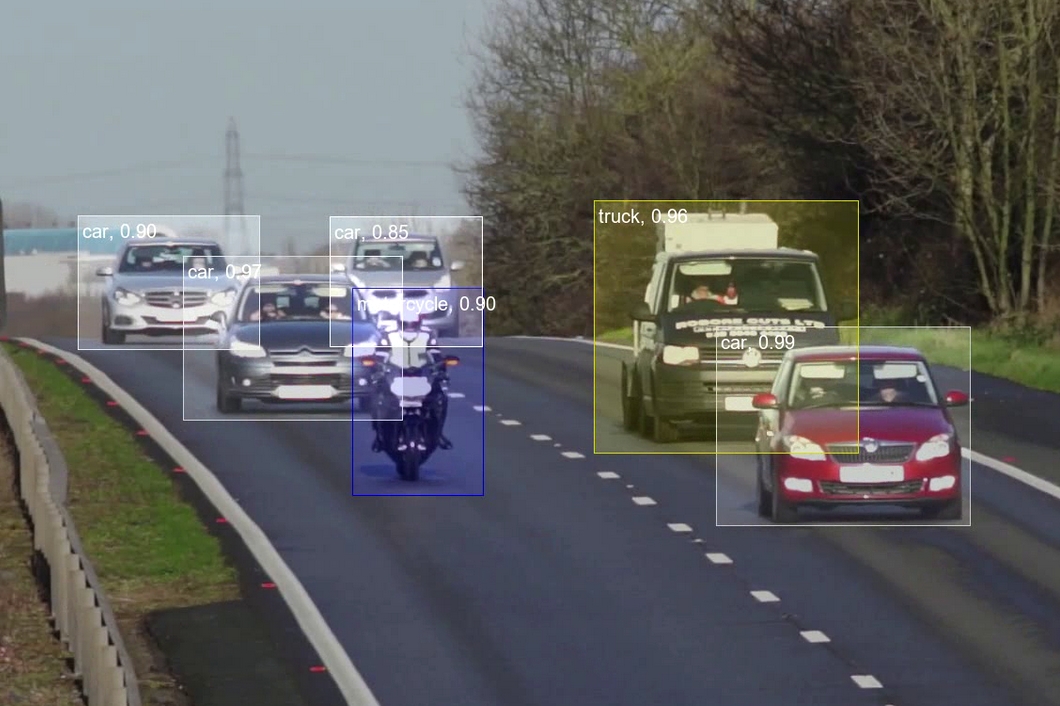

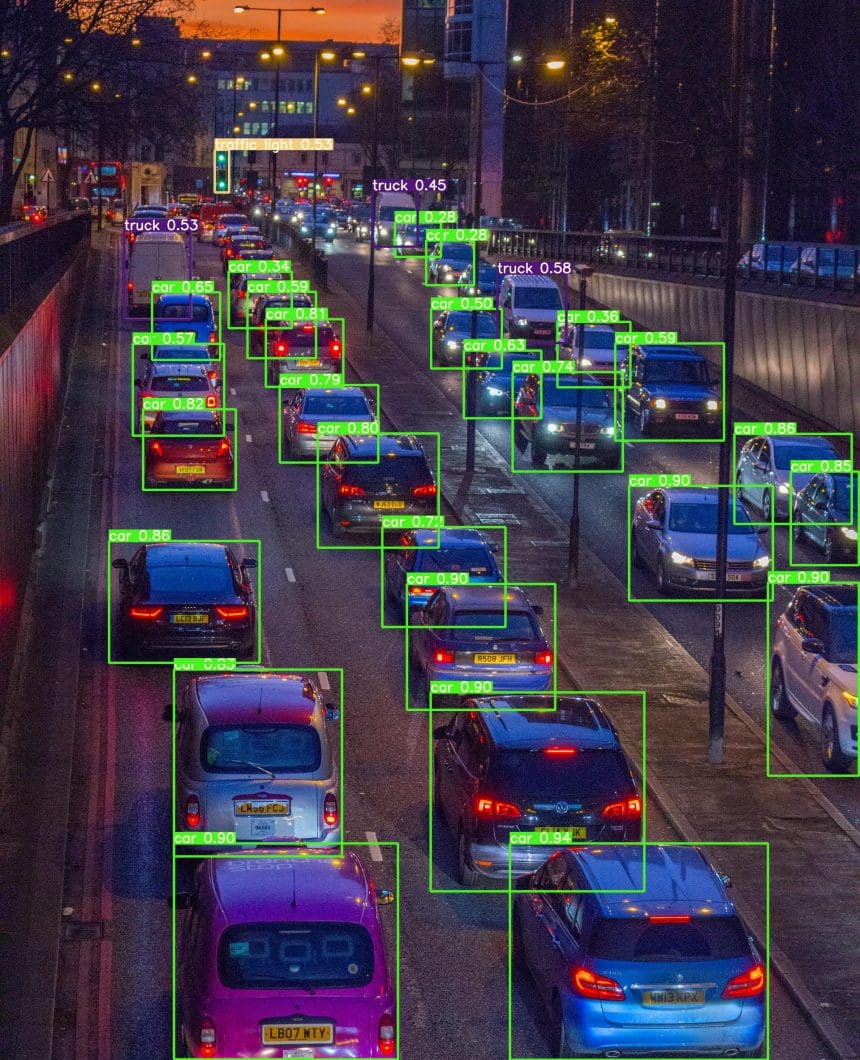
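Deciding whether a detected vehicle lies inside a drawn focus area is usually a point-in-polygon test. The sketch below uses the standard ray-casting method; it is an illustration of the technique, not Viso's internal implementation. Using the bottom-center of the bounding box as the vehicle's reference point (`anchor_point`) is an assumption that often suits road scenes, since that point sits close to the road surface.

```python
from typing import List, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def anchor_point(box: Box) -> Point:
    """Bottom-center of a bounding box, used as the vehicle's position."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, y2)


def point_in_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray casting: count how often a ray from p to the right crosses the edges."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the ray's y value can be crossed;
        # this also guarantees y1 != y2, so the division below is safe.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside
```

For example, with `roi = [(100, 200), (800, 200), (800, 600), (100, 600)]`, a vehicle whose box is `(350, 300, 450, 400)` has anchor point `(400.0, 400)` and falls inside the area, while a point at `(50, 50)` does not.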

Vehicle Detection

Vehicle detection is a common computer vision task that uses deep learning to recognize vehicles in images. The detection module recognizes vehicles and their positions in the video frames. We use the object detection module, which lets you select from the ML models installed in your workspace (pre-trained and custom-trained).

Multiple AI frameworks are available, including TensorFlow, PyTorch, and OpenVINO, which we select for this tutorial. Next, we choose the AI model to use and the trained class it should focus on (“vehicles” in our case).
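Selecting the class to focus on amounts to filtering the model's raw detections by label, usually together with a confidence threshold. A minimal sketch follows; the `Detection` structure, the label names (COCO-style `car`, `truck`, `bus`, `motorcycle`), and the 0.5 threshold are assumptions for illustration, since the exact labels depend on the model you install.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Tuple


@dataclass
class Detection:
    label: str
    confidence: float
    box: Tuple[int, int, int, int]  # (x1, y1, x2, y2) in pixels


# Hypothetical label set; adjust to the classes your model was trained on.
VEHICLE_CLASSES: FrozenSet[str] = frozenset({"car", "truck", "bus", "motorcycle"})


def keep_vehicles(
    detections: Iterable[Detection],
    classes: FrozenSet[str] = VEHICLE_CLASSES,
    min_conf: float = 0.5,
) -> List[Detection]:
    """Keep only vehicle detections at or above the confidence threshold."""
    return [d for d in detections if d.label in classes and d.confidence >= min_conf]
```

Lowering `min_conf` finds more vehicles at the cost of more false positives, so the threshold is typically tuned per camera view.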

Vehicle Tracking

Counting requires an object tracker: without one, a vehicle that appears in many consecutive frames would be counted many times. Object tracking follows a unique object across a series of frames to determine its trajectory. The built-in object tracking module tracks the objects detected by our vehicle detection module.
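The core idea of tracking is assigning a persistent ID to each object from frame to frame. The sketch below is a deliberately simple greedy nearest-centroid tracker, swapped in for illustration; the algorithm behind Viso's tracking module is not described here, and production trackers (for example, Kalman-filter-based ones) also handle occlusion and missed detections. The class name and the `max_distance` value in pixels are hypothetical.

```python
import math
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]


class CentroidTracker:
    """Greedy nearest-centroid tracker that keeps IDs stable across frames."""

    def __init__(self, max_distance: float = 50.0) -> None:
        self.max_distance = max_distance  # largest move (pixels) still matched
        self.next_id = 0
        self.tracks: Dict[int, Point] = {}  # track ID -> last known centroid

    def update(self, centroids: List[Point]) -> Dict[int, Point]:
        unmatched = dict(self.tracks)
        updated: Dict[int, Point] = {}
        for c in centroids:
            # Pick the closest existing track not yet claimed in this frame.
            best_id: Optional[int] = None
            best_dist = self.max_distance
            for tid, prev in unmatched.items():
                d = math.dist(c, prev)
                if d <= best_dist:
                    best_id, best_dist = tid, d
            if best_id is None:
                best_id = self.next_id  # nothing close enough: start a new track
                self.next_id += 1
            else:
                del unmatched[best_id]
            updated[best_id] = c
        # Tracks with no match in this frame are dropped (no occlusion handling).
        self.tracks = updated
        return updated
```

Because matching is greedy, the result can depend on the order of detections when two vehicles are close together; that trade-off keeps the example short.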

Counting Logic

The counting module uses the information about vehicles detected and tracked within the region of interest. Vehicles are counted as they cross the counting line within the focus area. Because the region of interest and counting line differ for every camera or video input, they must be specified locally.

Viso Suite provides a full local configuration system to easily set RoI and counting lines and preview the visual video output to debug and review the vision application.

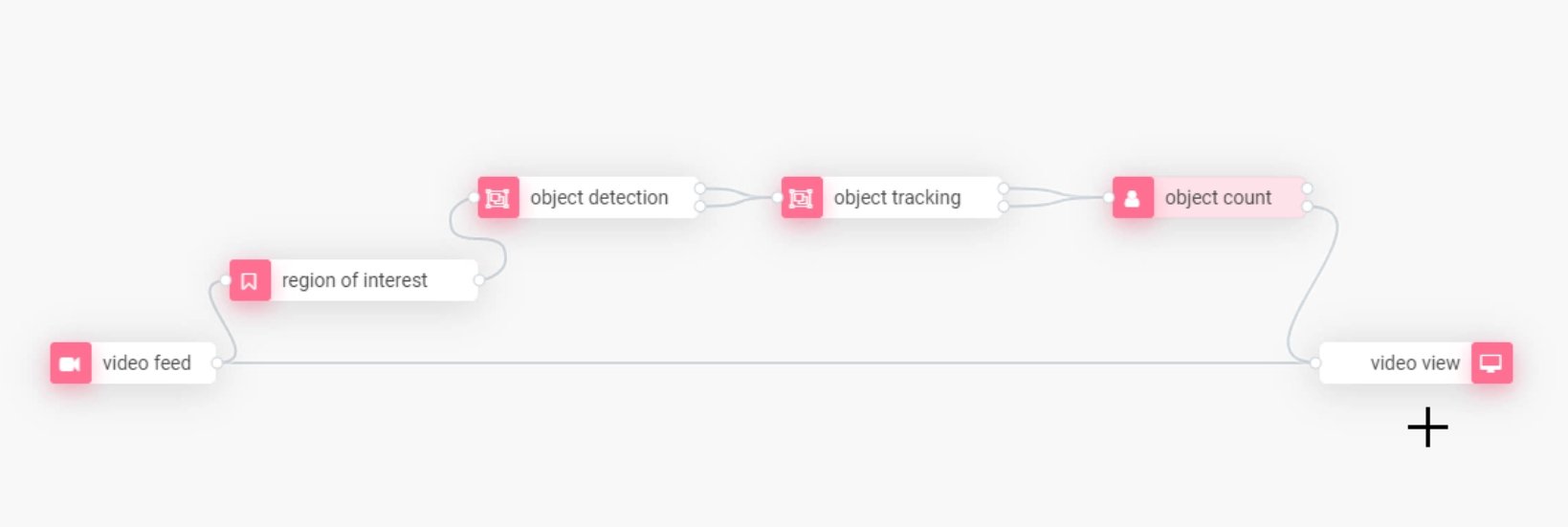
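Counting on a line combines tracking with a segment-intersection test: a track is counted once, when the path between its previous and current positions crosses the counting line, and the sign of a 2D cross product gives the crossing direction. This is a minimal sketch of that technique, not Viso's implementation; `LineCounter` and the `"forward"`/`"backward"` labels are hypothetical, and which direction is "forward" depends only on the order of the line's two endpoints.

```python
from typing import Dict, Optional, Set, Tuple

Point = Tuple[float, float]


def orient(a: Point, b: Point, p: Point) -> float:
    """Cross product of (b - a) and (p - a); its sign tells which side of a->b p is on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def segments_cross(p1: Point, p2: Point, q1: Point, q2: Point) -> bool:
    """True if segment p1-p2 strictly crosses segment q1-q2 (touching does not count)."""
    d1 = orient(q1, q2, p1)
    d2 = orient(q1, q2, p2)
    d3 = orient(p1, p2, q1)
    d4 = orient(p1, p2, q2)
    return d1 * d2 < 0 and d3 * d4 < 0


class LineCounter:
    """Counts each track at most once when its path crosses the line a-b."""

    def __init__(self, a: Point, b: Point) -> None:
        self.a, self.b = a, b
        self.last_pos: Dict[int, Point] = {}
        self.counted: Set[int] = set()
        self.counts = {"forward": 0, "backward": 0}

    def update(self, track_id: int, centroid: Point) -> Optional[str]:
        prev = self.last_pos.get(track_id)
        self.last_pos[track_id] = centroid
        if prev is None or track_id in self.counted:
            return None  # first sighting, or already counted
        if not segments_cross(prev, centroid, self.a, self.b):
            return None
        direction = "forward" if orient(self.a, self.b, centroid) > 0 else "backward"
        self.counted.add(track_id)
        self.counts[direction] += 1
        return direction
```

Checking against the line segment, rather than the infinite line through its endpoints, means vehicles passing beside the drawn line are not counted.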

Combine the Modules

Drag the modules into the Viso Builder canvas and wire them together in pipeline order, from the video input to the counting logic.
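Conceptually, wiring modules means each module's output becomes the next module's input. The sketch below models that as a list of stage functions passing a shared context dictionary; all stage names and their placeholder logic are hypothetical and only show the data flow, not how Viso connects modules internally. Here the region-of-interest filter runs after detection, which is one possible placement.

```python
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]
Stage = Callable[[Context], Context]


def run_pipeline(stages: List[Stage], ctx: Context) -> Context:
    """Pass a shared context through each stage in order, like wired modules."""
    for stage in stages:
        ctx = stage(ctx)
    return ctx


def detect(ctx: Context) -> Context:
    # Placeholder detection module: two hard-coded vehicles as (label, center).
    ctx["detections"] = [("car", (120, 300)), ("truck", (900, 300))]
    return ctx


def roi_filter(ctx: Context) -> Context:
    # Placeholder RoI: keep detections whose center x lies within ctx["roi_x"].
    x_min, x_max = ctx["roi_x"]
    ctx["detections"] = [d for d in ctx["detections"] if x_min <= d[1][0] <= x_max]
    return ctx


def count_in_roi(ctx: Context) -> Context:
    # Placeholder counting step: number of vehicles currently inside the RoI.
    ctx["count"] = len(ctx["detections"])
    return ctx
```

Running `run_pipeline([detect, roi_filter, count_in_roi], {"roi_x": (100, 800)})` keeps only the car. Replacing one module means replacing one entry in the list, which is why a modular pipeline is easier to upgrade and modify later.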

3. Deploy the Application

After creating the computer vision flow, proceed to save the application. Viso Suite will automatically create a version whenever you modify the application. It will also manage the application dependencies and modules (you can manage them in your Workspace Library).

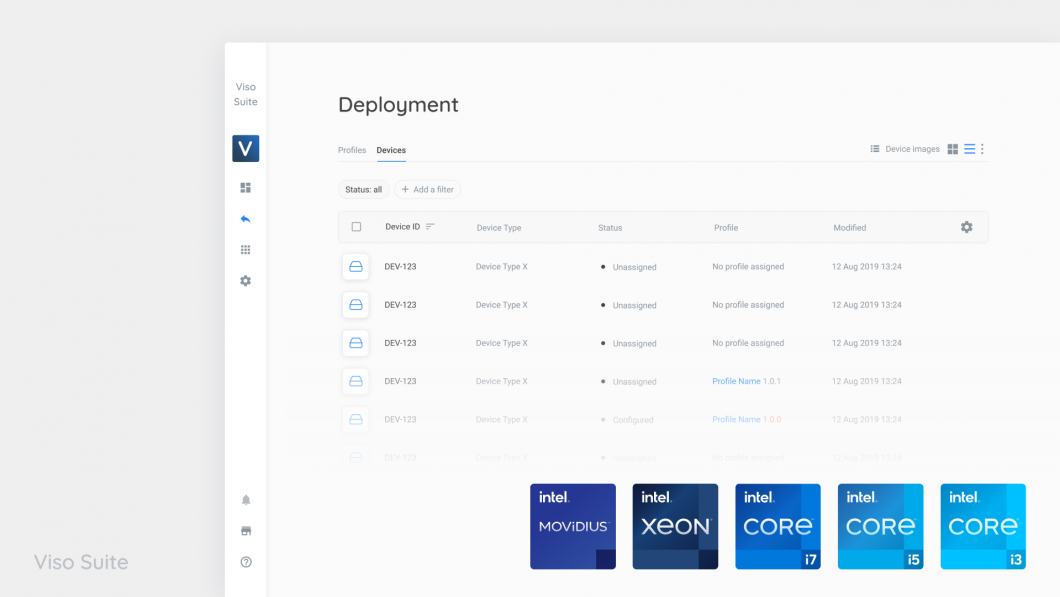

To deploy the application, add it to a new profile and assign it to an endpoint (enrolled edge devices or virtual devices in the cloud). The Viso Solution manager will automatically deploy the computer vision application with all required modules.

4. Configure the Deployed Application

As described above, the application needs to be configured locally to set the region of interest and the counting line. This is only possible after the application itself has been deployed. To configure the application, access the endpoint in Viso Suite (Deployment -> Devices), and select the option “Local Configuration.” You will now see the deployed modules, which can be configured locally.

Select the “Region of Interest” module and draw the focus area with the counting line that tracked objects must cross to be counted. Click “Save” to confirm the local configuration.

5. Previewing the Results

Because our application contains a Video Output module, we can view the output stream to debug the application. Note that the application does not need the output view to run; its only purpose is to visualize the processed output for human interpretation.

Because we set the output target to “localhost,” we can view the output video in our local browser. This requires your current machine to be on the same network as the endpoint. Depending on the flow complexity and the hardware you are using, there will be a visible delay. You can minimize the delay by reducing the video input complexity (bitrate, resolution, size) and by choosing a suitable processing mode (CPU, VPU, GPU, or combinations).
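A quick back-of-the-envelope calculation shows why input complexity drives the delay. The helper names and the 50 ms per-frame processing time below are illustrative assumptions, and the backlog model assumes no frames are dropped (many real pipelines drop frames instead of queuing them).

```python
def pixel_rate(width: int, height: int, fps: float) -> float:
    """Pixels per second the pipeline must ingest."""
    return width * height * fps


def frame_budget_ms(fps: float) -> float:
    """Processing time available per frame to keep up in real time."""
    return 1000.0 / fps


def backlog_after(seconds: float, fps: float, process_ms: float) -> float:
    """Frames still waiting after `seconds` if each frame takes process_ms and none are dropped."""
    arrived = seconds * fps
    processed = seconds * 1000.0 / process_ms
    return max(0.0, arrived - processed)
```

At 30 fps, 1080p input means about 62.2 million pixels per second, while 720p needs about 27.6 million (roughly 44%). The per-frame budget is about 33.3 ms; if processing takes 50 ms per frame, 100 frames pile up after 10 seconds, so the output runs about 3.3 seconds behind the live input.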

Why Viso Suite?

Flexibility and Agility

Viso Suite makes it easy to update and maintain the application over time. The platform is open and supports any camera, cross-platform computer hardware, and multiple AI frameworks. You can switch the AI model or change the processing hardware without rewriting the entire application, so you will not end up with an inflexible application. For example, you can migrate your applications to cutting-edge AI hardware for significant performance gains and better cost-efficiency.

Viso also supports the new Intel iGPU, which boosts efficiency in large-scale production deployments and ultimately results in significant cost savings.

Security and Encryption

Moving computer vision applications from prototype to production requires not only highly scalable infrastructure but also enterprise-grade security and encryption. As the only end-to-end computer vision application platform, Viso Suite integrates every step in one place, making it possible to secure raw data at rest and in transit and manage user access with a sophisticated permission system.

This eliminates the challenge of integrating separate tools and platforms yourself. Read more about the built-in enterprise-grade security capabilities of Viso Suite.

On-device Edge Computer Vision

Computer vision often involves enormous amounts of sensitive information in data sets. Hence, it is usually not possible to stream the videos into the cloud for several reasons: 1) Performance and delay, 2) Privacy, 3) Intellectual Property and confidentiality, and 4) Legal reasons. This is where Edge AI, the new megatrend in computing, moves machine learning from the cloud to the edge. Edge computing allows performing machine learning on-device to build mission-critical and large-scale distributed computer vision systems.

Therefore, we deploy the application to a physical device, process the data in real time, and send only processed insights and metrics back to the cloud. The built-in Edge AI capabilities of Viso Suite enable privacy-compliant computer vision applications. Read more about Edge Intelligence for computer vision.
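Sending only insights means the edge device summarizes events locally and uploads a small message instead of video. The sketch below aggregates counting events into a JSON payload; the function name, field names, and device ID are hypothetical and do not represent Viso's actual message format.

```python
import json
from collections import Counter
from datetime import datetime, timezone
from typing import Dict, List


def build_metrics_payload(
    device_id: str,
    crossings: List[Dict[str, str]],
    window_start: datetime,
    window_end: datetime,
) -> str:
    """Summarize line-crossing events for one time window; no images are included."""
    by_direction = Counter(c["direction"] for c in crossings)
    by_class = Counter(c["label"] for c in crossings)
    return json.dumps(
        {
            "device_id": device_id,
            "window_start": window_start.isoformat(),
            "window_end": window_end.isoformat(),
            "total": len(crossings),
            "by_direction": dict(by_direction),
            "by_class": dict(by_class),
        },
        sort_keys=True,
    )
```

A payload like this is a few hundred bytes per time window, whereas a single uncompressed 1080p RGB frame is about 6.2 MB (1920 × 1080 × 3 bytes), and no personally identifiable imagery ever leaves the device.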

What’s Next With AI Traffic Analytics?

At viso.ai, we partner with Intel and OpenVINO to help leading organizations power their cutting-edge vision AI applications. To get started with Viso Suite for your organization, request a personal demo. We help you set up your workspace, and our team of AI experts can provide you with all the computer vision services and solution consulting you need for data-informed decision-making.

With Viso, you get next-gen computer vision infrastructure to build, manage, and scale your entire computer vision application portfolio. Check out more deep learning tutorials with Viso Suite.

To learn more about implementing computer vision for applications across industries, find our other blogs:

- 100 of the Top Computer Vision Applications

- A Straightforward Mask Detection Tutorial

- The Best Lightweight Computer Vision Models

- An Overview of AI for Security and Surveillance

- Data Collection for Computer Vision

- Monitoring Network Performance with Viso Suite